This excerpt is from the Rough Cuts version of the book and may not represent the final version of this material.

Upon completing this chapter, you will be able to understand the following:

- How the basic resources are added to the cloud

- How services are added to the cloud

- The creation and placement strategies used within a cloud

- How services are managed throughout their life cycle

This chapter provides a detailed overview of how an Infrastructure as a Service (IaaS) service is orchestrated and automated.

On-Boarding Resources: Building the Cloud

Previous chapters discussed how to classify an IT service and covered a little bit about how to place that service in the cloud using a number of business dimensions, criticality, roles, and so on. So you know how to choose the application and how to define the components that make up those applications, but how do you decide where in the cloud to actually place the application or workload for optimal performance? To understand this, you must look at the differences between how a consumer and a provider look at cloud resources.

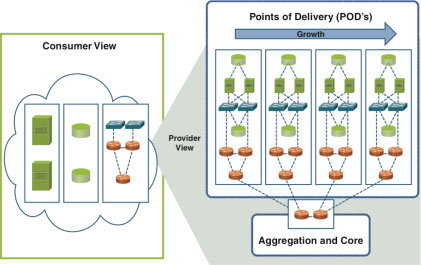

Figure 11-1 illustrates the differences between these two views. The consumer sees the cloud as a "limitless" container of compute, storage, and network resources. The provider, on the other hand, cannot provide limitless resources, because doing so is neither cost-effective nor possible. At the same time, the provider must be able to build out its infrastructure in a linear and consistent manner to meet demand and optimize the use of this infrastructure. This linear growth is achieved through the use of Integrated Compute Stacks (ICS), often referred to as points of delivery (PODs). PODs were described previously; for the purposes of this section, consider a POD to be a collection of compute, storage, and network resources that conforms to a standard operating footprint and shares a single failure domain. In other words, if something catastrophic happens in a POD, workloads running in that POD are affected, but workloads in a neighboring POD are not.

Figure 11-1 Cloud Resources

When the provider initially builds the infrastructure to support the cloud, it deploys an initial number of PODs, sized to the demand it expects to see and consistent with the oversubscription ratios it wants to apply. These initial PODs, plus the aggregation and core layers, make up the initial cloud infrastructure. This infrastructure must then be modeled in the service inventory so that tenant services can be provisioned and activated; this process is known as on-boarding.
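The initial sizing decision can be expressed as simple arithmetic. The sketch below assumes, purely for illustration, that demand is expressed in virtual CPUs (vCPUs) and that each POD contributes a fixed number of physical cores; the function name and all figures are hypothetical.

```python
import math


def initial_pod_count(expected_vcpus: int, cores_per_pod: int, oversubscription: float) -> int:
    """Return how many PODs are needed to host expected_vcpus.

    Each POD can host cores_per_pod * oversubscription vCPUs. The result is
    rounded up because PODs are deployed as whole units.
    """
    if cores_per_pod <= 0 or oversubscription <= 0:
        raise ValueError("cores_per_pod and oversubscription must be positive")
    vcpus_per_pod = cores_per_pod * oversubscription
    return math.ceil(expected_vcpus / vcpus_per_pod)


# Hypothetical figures: 5,000 vCPUs of expected demand, 512 physical cores
# per POD, and a 4:1 vCPU-to-core oversubscription ratio.
print(initial_pod_count(5000, 512, 4.0))  # 3
```

Because capacity is added in whole PODs, the provider grows in POD-sized steps; a higher oversubscription ratio lowers the POD count at the cost of more contention inside each POD.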

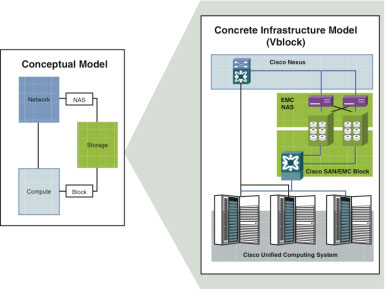

At the concrete level, what makes up a POD is determined by the individual provider. Most providers are looking at a POD composed of an ICS: a pre-integrated set of compute, network, and storage equipment that operates as a single solution, is easier to buy and manage, and offers Capital Expenditure (CAPEX) and Operational Expenditure (OPEX) savings. Cisco, for example, offers two POD designs, the Vblock[1] and the FlexPod[2], each of which provides a scalable, prebuilt unit of infrastructure that can be deployed in a modular manner. The main difference between the Vblock and the FlexPod is the choice of storage: in a Vblock, storage is provided by EMC, and in a FlexPod, storage is provided by NetApp. Despite this difference, the concept remains the same: each provides an ICS that combines compute, network, and storage resources and enables incremental scaling with predictable performance, capability, and facilities impact. The rest of this chapter assumes that the provider has chosen Vblocks as its ICS. Figure 11-2 illustrates the relationship between the conceptual model and the concrete Vblock.

Figure 11-2 Physical Infrastructure Model

A Vblock can be specified with many different packages that provide different performance footprints. In this example, a generic Vblock consists of the following:

- Cisco Unified Computing System (UCS) compute

- Cisco MDS storage-area networking (SAN)

- EMC network-attached storage (NAS) and block storage

- VMware ESX Hypervisor

- The Cisco Nexus 7000, which is not strictly part of a Vblock but is used here as the aggregation layer and to connect the NAS

A FlexPod offers a similar configuration but uses NetApp FAS storage arrays instead of EMC storage. Because this storage is typically accessed over Ethernet, using Fibre Channel over Ethernet (FCoE) or Network File System (NFS), a separate SAN is no longer required. Also note that the Vblock definition is owned by VCE (a coalition of Cisco, EMC, and VMware), so it is aimed at VMware hypervisor-based deployments, whereas FlexPod can be considered more hypervisor neutral.

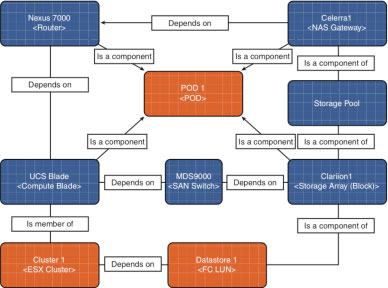

To deliver services on a Vblock, you first need to model it in the service inventory or Configuration Management Database (CMDB). Because the relationships between the physical building blocks are relatively static (cabling doesn't tend to change on the fly), the CMDB is a suitable place to store this data. The first modeling choices are driven by the data model supported by the repository that has been chosen to act as the system of record for the cloud. Most CMDBs come with a predefined model based on the Distributed Management Task Force (DMTF) Common Information Model (CIM) standard, and where possible, the classes and relationships provided in this model should be reused. For example, BMC, an IT service management (ITSM)[3] company, provides the Atrium CMDB product, which implements the BMC_ComputerSystem class to represent all compute resources. An attribute of that class, isVirtual=true, differentiates virtual compute resources from physical ones without the need for an additional class. Figure 11-3 illustrates a simple infrastructure model.

Figure 11-3 Infrastructure Logical Model
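The idea of reusing a single class and distinguishing instances with an attribute, rather than adding a new class, can be sketched in a few lines of Python. The `ComputerSystem` class and `is_virtual` field below are hypothetical stand-ins that echo the BMC_ComputerSystem class and isVirtual attribute described above; this is not the Atrium CMDB API.

```python
from dataclasses import dataclass


@dataclass
class ComputerSystem:
    """Hypothetical stand-in for a CIM-style computer-system configuration item."""
    name: str
    is_virtual: bool = False  # mirrors the isVirtual attribute


def physical(cis):
    """Names of physical compute resources."""
    return [ci.name for ci in cis if not ci.is_virtual]


def virtual(cis):
    """Names of virtual compute resources."""
    return [ci.name for ci in cis if ci.is_virtual]


inventory = [
    ComputerSystem("ucs-blade-01"),
    ComputerSystem("ucs-blade-02"),
    ComputerSystem("tenant-a-web-vm", is_virtual=True),
]
print(physical(inventory))  # ['ucs-blade-01', 'ucs-blade-02']
print(virtual(inventory))   # ['tenant-a-web-vm']
```

One class keeps queries and relationships uniform: anything that relates to a compute resource can point at the same class whether the resource is a blade or a VM.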

The following points provide a summary of the relationships shown in Figure 11-3:

- A compute blade instance depends on the router for connectivity and on the SAN switch for access to block-based storage; a compute blade is also a member of an ESX cluster. The ESX cluster depends on a data store to access the storage array.

- The NAS gateway instance depends on the router for connectivity, and the storage pool is a component of the NAS gateway.

- The storage array instance depends on the SAN switch for access, and the storage pool is also a component of the NAS gateway.

- The POD acts as a container for all physical resources.
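The relationships summarized above can be stored as simple (source, relationship, target) records and queried in either direction. The sketch below is a minimal illustration; the element names are hypothetical, and a real CMDB would use its own CIM-derived relationship classes rather than plain tuples.

```python
from collections import namedtuple

Rel = namedtuple("Rel", "source kind target")

# Relationships from the infrastructure model (element names are illustrative).
MODEL = [
    Rel("blade-01", "dependsOn", "router"),
    Rel("blade-01", "dependsOn", "san-switch"),
    Rel("blade-01", "memberOf", "esx-cluster"),
    Rel("esx-cluster", "dependsOn", "datastore"),
    Rel("nas-gateway", "dependsOn", "router"),
    Rel("storage-pool", "componentOf", "nas-gateway"),
    Rel("storage-array", "dependsOn", "san-switch"),
]
# The POD acts as a container for every physical resource.
PHYSICAL = ["blade-01", "router", "san-switch", "nas-gateway", "storage-pool", "storage-array"]
MODEL += [Rel(ci, "containedIn", "pod-1") for ci in PHYSICAL]


def targets(source, kind):
    """What does `source` relate to through `kind`?"""
    return sorted(r.target for r in MODEL if r.source == source and r.kind == kind)


def sources(kind, target):
    """What relates to `target` through `kind`?"""
    return sorted(r.source for r in MODEL if r.kind == kind and r.target == target)


print(targets("blade-01", "dependsOn"))      # ['router', 'san-switch']
print(sources("dependsOn", "san-switch"))    # ['blade-01', 'storage-array']
print(len(sources("containedIn", "pod-1")))  # 6
```

The reverse query (`sources`) is what makes the model useful operationally: it answers "what is attached to this switch?" before a change or after a failure.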

With this basic infrastructure model added to the CMDB or service inventory, you can begin to add the logical building blocks that support the service and any capabilities or constraints of the physical POD that will simplify the provisioning process. Does this seem like a lot of effort? Well, the reason for this on-boarding process is threefold:

- The technical building blocks discussed in the previous chapter need to exist on something physical, so it's important to understand the relationship between the physical building blocks and the building blocks that make up the technical service. Provisioning actions that modify or delete a technical service must determine which physical devices need to be changed, so they consult the CMDB or service inventory for this information.

- The physical building blocks have a limit to how many concrete building blocks they can support. For example, the Nexus 7000 can only support a set number of VLANs, so these capacity limits should be modeled and tracked. (This is discussed in more detail in the next chapter.)

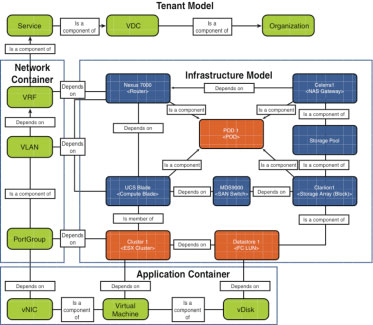

- From a service assurance perspective, the ability to understand the impact of a physical failure on a service or vice versa is critical. Figure 11-4 provides an example of the relationship between a service, the concrete building blocks that make up the service, and the physical building blocks that support the technical building blocks.

One important point to note is that we introduced the concept of a network container in the tenant model. A network container represents all the building blocks used to create the logical network, the topology-related building blocks. A network topology can be complex and can potentially contain many different resources, so using a container to group these elements simplifies the provisioning process.

Figure 11-4 Tenant Model

The following points provide a summary of the relationships shown in Figure 11-4:

- The virtual machine (VM) contains a virtual disk (vDisk) and a virtual network interface card (vNIC). The VM depends on the ESX cluster, which in turn depends on the physical server. It is now possible to determine the impact of a physical blade failure on a service, even if the only effect is that the service is degraded.

- The vDisk depends on the data store in which it is stored. The vNIC depends on the port group to provide virtual connectivity; the port group in turn contains a VLAN that depends on both the UCS blade and the Nexus 7000 to provide Layer 2 physical connectivity. The VLAN depends on a Virtual Routing and Forwarding (VRF) instance to provide Layer 3 connectivity. If the Nexus suffers a physical failure, we can therefore determine the impact on the service.

- The VRF is contained in a service, which is contained in a virtual data center (VDC), which is contained in an organization. These relationships mean that it is possible to understand the impact of any physical failure at a service or organization level and, when modifying or deleting the service, what the logical and physical impacts will be.

- The VRF, VLAN, and port group are grouped in a simple network container that can be considered a collection of networking infrastructure, can be created by a network designer, and is part of the overall service.

- The VM, vNIC, and vDisk are grouped in a simple application container that can be considered a collection of application infrastructure, can be created by an application designer, and is part of the overall service.
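The impact analysis that the tenant model in Figure 11-4 enables is essentially a graph walk. The Python sketch below is a minimal illustration, assuming the depends-on and contained-in relationships have been inverted into "impacts" edges; the element names are hypothetical, and redundancy (for example, a VM restarting on another blade in the cluster) is deliberately ignored, so the sketch cannot distinguish "degraded" from "down".

```python
from collections import deque

# Edges read "a problem with KEY affects each VALUE". They invert the
# depends-on and contained-in relationships of the tenant model.
IMPACTS = {
    "ucs-blade-01": ["esx-cluster", "vlan-100"],
    "nexus-7000": ["vlan-100"],
    "esx-cluster": ["vm-web"],
    "datastore": ["vdisk"],
    "vdisk": ["vm-web"],
    "vnic": ["vm-web"],
    "vrf-tenant-a": ["vlan-100", "service-web"],
    "vlan-100": ["port-group"],
    "port-group": ["vnic"],
    "vm-web": ["service-web"],
    "service-web": ["vdc-a"],
    "vdc-a": ["org-a"],
}


def impact_of(failed):
    """Breadth-first walk returning every element affected by `failed`."""
    seen, queue, order = {failed}, deque([failed]), []
    while queue:
        node = queue.popleft()
        for affected in IMPACTS.get(node, []):
            if affected not in seen:
                seen.add(affected)
                order.append(affected)
                queue.append(affected)
    return order


print(impact_of("nexus-7000"))
# ['vlan-100', 'port-group', 'vnic', 'vm-web', 'service-web', 'vdc-a', 'org-a']
```

Walking the same edges in the opposite direction answers the modify/delete question: which logical and physical elements a service touches.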

Hopefully, you can begin to see that there can be up to five separate provisioning tasks to create the model shown in Figure 11-4:

- The cloud provider infrastructure administrator provisions the infrastructure model into the CMDB or service inventory as new physical equipment is added.

- The cloud provider customer administrator creates the outline tenant model by creating the organizational entity and the VDC.

- The tenant service owner creates the service entity.

- The network designer creates the network container to support the application and attaches it to the service.

- The application designer creates the application container to support the application user's needs, connects it to the network resources created by the network designer, and attaches it to the service.
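The five tasks have a natural ordering, because each one attaches to something an earlier task created. The sketch below models that ordering with a hypothetical in-memory `Inventory` class that refuses to create an element whose parent or prerequisite does not yet exist; the class, element names, and role strings are illustrative assumptions, not part of any product.

```python
class Inventory:
    """Hypothetical in-memory service inventory: name -> (kind, parent, role)."""

    def __init__(self):
        self.items = {}

    def create(self, name, kind, role, parent=None, requires=()):
        # Every parent and prerequisite must already be in the inventory.
        for needed in ([parent] if parent else []) + list(requires):
            if needed not in self.items:
                raise LookupError(f"{kind} {name!r} needs {needed!r} to exist first")
        self.items[name] = (kind, parent, role)


def build_tenant_model(inv):
    # 1. Infrastructure administrator on-boards physical equipment.
    inv.create("pod-1", "POD", "infrastructure administrator")
    # 2. Customer administrator creates the organization and the VDC.
    inv.create("org-a", "Organization", "customer administrator")
    inv.create("vdc-a", "VDC", "customer administrator", parent="org-a")
    # 3. Tenant service owner creates the service entity.
    inv.create("svc-web", "Service", "service owner", parent="vdc-a")
    # 4. Network designer creates the network container and attaches it to the service.
    inv.create("net-web", "NetworkContainer", "network designer", parent="svc-web")
    # 5. Application designer creates the application container, connects it to
    #    the network container, and attaches it to the service.
    inv.create("app-web", "ApplicationContainer", "application designer",
               parent="svc-web", requires=("net-web",))
    return inv


inv = build_tenant_model(Inventory())
print(len(inv.items))  # 6

try:
    Inventory().create("app-web", "ApplicationContainer", "application designer",
                       parent="svc-web")
except LookupError as err:
    print(err)  # ApplicationContainer 'app-web' needs 'svc-web' to exist first
```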

Modeling Capabilities

Modeling capabilities is an important step when on-boarding resources, because capabilities directly determine how a resource can be used in the provisioning process. If you look at the Vblock definition again, you can see that it supports Layer 2 and Layer 3 network connectivity, NAS and SAN storage, and the ESX hypervisor, but it won't support most of the design patterns discussed earlier, because they require a load balancer. If you were looking for a POD to support the instantiation of a design pattern that required a load balancer, you could query all the PODs that had been on-boarded and look for a Load-Balancing=yes capability. If no POD in the cloud infrastructure has this capability, the cloud provider infrastructure administrator would need to create a new POD with a load balancer, or add a load balancer to an existing POD and update the capabilities supported by that POD.
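The capability query described above can be sketched as a filter over the capability records captured at on-boarding. In this hypothetical example, pod-2 represents a POD to which a load balancer has been added; the POD names and the key/value format are assumptions for illustration.

```python
# Hypothetical capability records captured when each POD was on-boarded.
PODS = {
    "pod-1": {"Layer2": "yes", "Layer3": "yes", "NAS": "yes", "SAN": "yes",
              "Load-Balancing": "no"},
    "pod-2": {"Layer2": "yes", "Layer3": "yes", "NAS": "yes", "SAN": "yes",
              "Load-Balancing": "yes"},  # a load balancer was added to this POD
}


def pods_with(required, pods=PODS):
    """Return the PODs whose capabilities satisfy every required key/value pair."""
    return sorted(name for name, caps in pods.items()
                  if all(caps.get(key) == value for key, value in required.items()))


print(pods_with({"Load-Balancing": "yes"}))  # ['pod-2']
print(pods_with({"Firewall": "yes"}))        # [] -> a POD must be built or extended
```

An empty result is the signal for the infrastructure administrator to build a new POD or extend an existing one and update its capability record.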

Taking this concept further, if you configure the EMC storage in the Vblock to support several tiers of storage (Gold, Silver, and Bronze, for example), you could simply model these capabilities at the POD level and (as you will see in the next chapter) perform an initial check when provisioning a service to find a POD that supports a particular storage tier. Capabilities can also drive behavior during the lifetime of the service. For example, if you want to allow a tenant to reboot a resource, you can add a reboot=true capability to the class in the data model that represents a virtual machine. You probably wouldn't add this capability to a storage array or network device, because rebooting one of these resources would affect multiple users and should only be done by the cloud operations team.
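Both uses of capabilities in this paragraph, finding a POD that offers a storage tier and deciding whether a tenant may perform an action on a resource, reduce to simple lookups. The class names, POD names, and tier assignments below are hypothetical.

```python
# Hypothetical capabilities attached to classes in the data model.
CLASS_CAPABILITIES = {
    "VirtualMachine": {"reboot": True},
    "StorageArray": {"reboot": False},   # reserved for the cloud operations team
    "NetworkDevice": {"reboot": False},  # reserved for the cloud operations team
}

# Hypothetical storage tiers modeled at the POD level.
POD_STORAGE_TIERS = {
    "pod-1": {"Gold", "Silver", "Bronze"},
    "pod-2": {"Silver", "Bronze"},
}


def tenant_may(action, ci_class):
    """True only if the class explicitly carries action=true; deny by default."""
    return CLASS_CAPABILITIES.get(ci_class, {}).get(action, False)


def pods_offering_tier(tier):
    """Initial placement check: which PODs support the requested storage tier?"""
    return sorted(pod for pod, tiers in POD_STORAGE_TIERS.items() if tier in tiers)


print(tenant_may("reboot", "VirtualMachine"))  # True
print(tenant_may("reboot", "StorageArray"))    # False
print(pods_offering_tier("Gold"))              # ['pod-1']
```

Defaulting to `False` for unknown classes or actions keeps the portal from exposing operations that nobody modeled deliberately.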

However, if a storage array supported a data protection capability such as NetApp Snapshot, a snapshot=true capability could be modeled at both the storage array and the hypervisor level, allowing tenants to choose whether to take snapshots at the hypervisor or the storage level. The provider could offer these two options at different prices, depending on the resources they consume or the cost of automating this functionality.
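A minimal sketch of turning these two snapshot=true capabilities into priced options for the tenant follows. The function name and the price list are hypothetical; the prices are arbitrary values chosen only to show that the two levels can be priced differently.

```python
def snapshot_options(hypervisor_caps, array_caps, price_list):
    """List (level, price) pairs for every level that models snapshot=true."""
    options = []
    for level, caps in (("hypervisor", hypervisor_caps), ("storage", array_caps)):
        if caps.get("snapshot", False):
            options.append((level, price_list[level]))
    return options


PRICES = {"hypervisor": 1.00, "storage": 2.50}  # hypothetical prices per snapshot policy

print(snapshot_options({"snapshot": True}, {"snapshot": True}, PRICES))
# [('hypervisor', 1.0), ('storage', 2.5)]
print(snapshot_options({"snapshot": True}, {}, PRICES))
# [('hypervisor', 1.0)]
```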

Modeling Constraints

Modeling constraints is another important aspect of the on-boarding process. No resource is infinite: storage gets consumed and memory gets exhausted, so it is important to establish where the limits are. Within a Layer 2 domain, for example, the 12-bit VLAN ID limits you to 4096 VLANs (4094 usable); in reality, after you factor in all the infrastructure connectivity in each POD, you will have significantly fewer VLANs to work with. Adding these limits to the resource and tracking usage against them gives the provisioning processes a quick and easy way to check capacity. Constraint modeling is no replacement for strong capacity management tools and processes, but it is a lightweight way to get a quick view of where the limits are for a specific resource.
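Tracking usage against a modeled limit needs very little machinery. The sketch below uses a hypothetical `ConstrainedResource` class and assumes, for illustration only, that 200 of the 4094 usable VLAN IDs are consumed by infrastructure connectivity in the POD.

```python
class ConstrainedResource:
    """Tracks usage of a finite resource against a modeled limit."""

    def __init__(self, name, limit):
        self.name, self.limit, self.used = name, limit, 0

    def has_capacity(self, amount=1):
        return self.used + amount <= self.limit

    def consume(self, amount=1):
        if not self.has_capacity(amount):
            raise RuntimeError(f"{self.name}: limit of {self.limit} reached")
        self.used += amount

    def release(self, amount=1):
        self.used = max(0, self.used - amount)


# 4094 usable VLAN IDs minus a hypothetical 200 reserved for infrastructure.
vlans = ConstrainedResource("pod-1 VLANs", limit=4094 - 200)
vlans.consume(3)  # e.g., three tenant VLANs provisioned
print(vlans.used, vlans.has_capacity(3891), vlans.has_capacity(3892))  # 3 True False
```

The sketch deliberately tracks counts rather than individual VLAN IDs: that is enough for the quick capacity check described above, while ID allocation itself stays with the provisioning tools.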

Resource-Aware Infrastructure

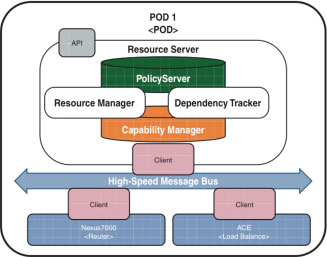

Modeling capabilities and constraints in the service inventory or CMDB is necessary because these repositories act as the single source of truth for the infrastructure. However, as discussed in previous chapters, these repositories are not necessarily the best places to store dynamic data. One alternative is a self-aware infrastructure: one that understands what devices exist within a POD, how those devices relate to each other, what capabilities they have, and what constraints and loads they are under. This concept is addressed further in the next chapter, but it is certainly a more scalable way of understanding the infrastructure model. These relationships could still be modeled in the CMDB, but the next step is simply to relate the tenant service to a POD and let the POD worry about placement and resource management. Figure 11-5 illustrates the components of a resource-aware infrastructure.

Figure 11-5 Resource-Aware Infrastructure

The following points provide a more detailed explanation of the components shown in Figure 11-5:

- A high-speed message bus, such as one based on the Extensible Messaging and Presence Protocol (XMPP), connects clients running in the devices to a resource server responsible for a specific POD.

- The resource server persists policy and capabilities in a set of repositories that are updated by the clients.

- The resource server tracks dependencies and resource utilization within the POD.

- The resource server implements an API that allows the orchestration system to make placement decisions and reservation requests without querying the CMDB or service inventory.

The last point is one of the most critical. Offloading real-time resource management, along with constraint and capability modeling, from the CMDB or service inventory to the infrastructure itself means that significantly less infrastructure modeling will be needed in the CMDB going forward.
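To make the division of labor concrete, here is an in-process sketch of a per-POD resource server. It is a hypothetical illustration, not a description of any product: device clients call `update()` directly instead of publishing over XMPP, the report schema is invented, and the least-loaded placement policy is an assumption chosen for simplicity.

```python
from dataclasses import dataclass, field


@dataclass
class DeviceReport:
    """State a device client publishes to the resource server (hypothetical schema)."""
    device: str
    capabilities: set
    capacity: int  # e.g., vCPU slots the device can host
    used: int = 0


@dataclass
class ResourceServer:
    """In-process stand-in for a per-POD resource server. A real deployment would
    receive DeviceReport updates over a message bus such as XMPP."""
    pod: str
    devices: dict = field(default_factory=dict)
    reservations: dict = field(default_factory=dict)

    def update(self, report):
        """Called by device clients to publish capabilities and load."""
        self.devices[report.device] = report

    def place(self, needs, amount):
        """Return the least-loaded device that has every needed capability
        and enough free capacity, or None if no device fits."""
        if amount <= 0:
            raise ValueError("amount must be positive")
        fits = [d for d in self.devices.values()
                if needs <= d.capabilities and d.capacity - d.used >= amount]
        return min(fits, key=lambda d: d.used / d.capacity, default=None)

    def reserve(self, request_id, needs, amount):
        """Place and record a reservation; return the chosen device name or None."""
        device = self.place(needs, amount)
        if device is None:
            return None
        device.used += amount
        self.reservations[request_id] = (device.device, amount)
        return device.device


server = ResourceServer("pod-1")
server.update(DeviceReport("blade-01", {"esx"}, capacity=32))
server.update(DeviceReport("blade-02", {"esx"}, capacity=32, used=16))
print(server.reserve("req-1", {"esx"}, 8))                   # blade-01
print(server.reserve("req-2", {"esx", "load-balancer"}, 1))  # None
```

The orchestration system only needs to know which POD a tenant service belongs to; the choice of device within the POD, and the bookkeeping that goes with it, stays inside the resource server.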