Intelligent Path Control Principles

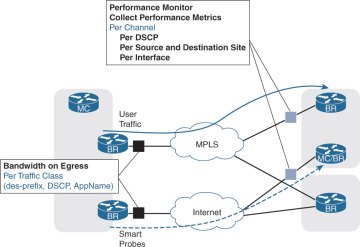

PfR is able to provide intelligent path control and visibility into applications by integrating with the Cisco Performance Monitoring Agent available on the WAN edge (BR) routers. Performance metrics are passively collected based on user traffic and include bandwidth, one-way delay, jitter, and loss.

PfR Policies

PfR policies are global to the IWAN domain and are configured on the Hub MC, then distributed to all MCs via the IWAN peering system. Policies can be defined per DSCP or per application name.

Branch and Transit MCs also receive the Cisco Performance Monitor instance definition, and they can instruct the local BRs to configure Performance Monitors over the WAN interfaces with the appropriate thresholds.

PfR policies are divided into three main groups:

Administrative policies: These policies define path preference, path of last resort, and zero SLA, which is used to minimize control traffic on a metered interface.

Performance policies: These policies define thresholds for delay, loss, and jitter for user-defined DSCP values or application names.

Load-balancing policy: Load balancing can be enabled or disabled globally, or it can be enabled for specific network tunnels. In addition, load balancing can provide specific path preference (for example, the primary path can be INET01 and INET02 with a fallback of MPLS01 and MPLS02).

Site Discovery

PfRv3 was designed to simplify the configuration and deployment of branch sites. The configuration is kept to a minimum and includes the IP address of the Hub MC. All MCs connect to the Hub MC in a hub-and-spoke topology.

When a Branch or Transit MC starts:

It uses the loopback address of the local MC as its site ID.

It registers with the Hub MC, providing its site ID, then starts building the IWAN peering with the Hub MC to get all information needed to perform path control. That includes policies and Performance Monitor definitions.

The Hub MC advertises the site ID information for all sites to all its Branch or Transit MC clients.

At the end of this phase, all MCs have a site prefix database that contains the site ID for every site in the IWAN domain.
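The discovery sequence above can be modeled as a small in-memory sketch. The `HubMC` and `BranchMC` classes and their methods are hypothetical and only mirror the listed steps; real MCs exchange this information over the IWAN peering service, not through direct method calls. The loopback addresses used as site IDs are taken from Table 7-2.

```python
from dataclasses import dataclass, field


@dataclass
class BranchMC:
    """Hypothetical Branch/Transit MC; its site ID is its loopback address."""
    loopback: str
    known_sites: set[str] = field(default_factory=set)

    @property
    def site_id(self) -> str:
        return self.loopback

    def receive_site_ids(self, site_ids: set[str]) -> None:
        # Merge the site IDs advertised by the Hub MC.
        self.known_sites |= site_ids


@dataclass
class HubMC:
    """Hypothetical Hub MC at the center of the hub-and-spoke peering."""
    loopback: str
    clients: list[BranchMC] = field(default_factory=list)

    def register(self, mc: BranchMC) -> None:
        # Registration carries the client's site ID. Policies and Performance
        # Monitor definitions would also be pushed over the peering here.
        self.clients.append(mc)
        self.advertise()

    def advertise(self) -> None:
        # Send the full set of site IDs to every Branch/Transit MC client.
        all_ids = {self.loopback} | {c.site_id for c in self.clients}
        for c in self.clients:
            c.receive_site_ids(all_ids)


hub = HubMC("10.1.0.10")
for loopback in ("10.2.0.20", "10.4.0.41", "10.5.0.51"):
    hub.register(BranchMC(loopback))
```

After the last registration, every client's `known_sites` holds all four site IDs, which corresponds to the complete site prefix database described at the end of this phase.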

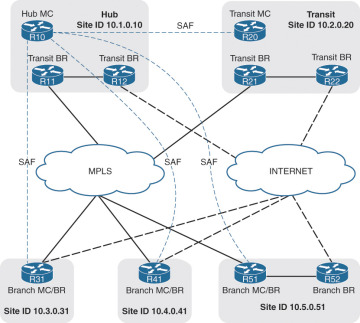

Figure 7-8 shows the IWAN peering between all MCs and the Hub MC. R10 is the Hub MC for this topology. R20, R31, R41, and R51 peer with R10. This is the initial phase for site discovery.

Figure 7-8 Demonstration of IWAN Peering to the Domain Controller

Site Prefix Database

PfR maintains a topology that contains all the network prefixes and their associated site IDs. A site prefix is the combination of a network prefix and the site ID of the site where that prefix is attached. The PfR topology table is known as the site prefix database and is a vital component of PfR. The site prefix database resides on the MCs and BRs. On an MC, the site prefix database learns and manages site prefixes and their origins from both local egress flows and advertisements from remote MC peers. On a BR, it learns and manages site prefixes and their origins only from advertisements from remote peers. The site prefix database is organized as a longest-prefix-match tree for efficient searching.

Table 7-2 provides the site prefix database on all MCs and BRs for the IWAN domain shown in Figure 7-8. It provides a mapping between a destination prefix and a destination site.

Table 7-2 Site Prefix Database for an IWAN Domain

| Site Name | Site Identifier | Site Prefix |
|---|---|---|
| Site 1 | 10.1.0.10 | 10.1.0.0/16 |
| Site 1 | 10.1.0.10 | 172.16.1.0/24 |
| Site 2 | 10.2.0.20 | 10.2.0.0/16 |
| Site 3 | 10.3.0.31 | 10.3.3.0/24 |
| Site 4 | 10.4.0.41 | 10.4.4.0/24 |
| Site 5 | 10.5.0.51 | 10.5.5.0/24 |
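The site prefix database is organized as a longest-prefix-match tree. A minimal sketch of that idea is a binary trie over IPv4 prefix bits, loaded here with a subset of the entries in Table 7-2. The `SitePrefixDB` class is a hypothetical illustration, not PfR's actual data structure.

```python
import ipaddress
from typing import List, Optional


class _Node:
    """One trie node; `site_id` is set when a stored prefix ends here."""
    __slots__ = ("children", "site_id")

    def __init__(self) -> None:
        self.children: List[Optional["_Node"]] = [None, None]
        self.site_id: Optional[str] = None


class SitePrefixDB:
    """Hypothetical longest-prefix-match trie mapping prefixes to site IDs."""

    def __init__(self) -> None:
        self.root = _Node()

    @staticmethod
    def _bit(value: int, i: int) -> int:
        # i-th most significant bit of a 32-bit IPv4 address
        return (value >> (31 - i)) & 1

    def add(self, prefix: str, site_id: str) -> None:
        net = ipaddress.IPv4Network(prefix)
        value, node = int(net.network_address), self.root
        for i in range(net.prefixlen):
            b = self._bit(value, i)
            if node.children[b] is None:
                node.children[b] = _Node()
            node = node.children[b]
        node.site_id = site_id

    def lookup(self, address: str) -> Optional[str]:
        # Walk the address bits, remembering the deepest (longest) match.
        value = int(ipaddress.IPv4Address(address))
        node, best = self.root, self.root.site_id
        for i in range(32):
            node = node.children[self._bit(value, i)]
            if node is None:
                break
            if node.site_id is not None:
                best = node.site_id
        return best


db = SitePrefixDB()
for prefix, site_id in [
    ("10.1.0.0/16", "10.1.0.10"),
    ("172.16.1.0/24", "10.1.0.10"),
    ("10.2.0.0/16", "10.2.0.20"),
    ("10.4.4.0/24", "10.4.0.41"),
    ("10.5.5.0/24", "10.5.0.51"),
]:
    db.add(prefix, site_id)
```

For example, `db.lookup("10.4.4.7")` returns `"10.4.0.41"`, and an address outside every stored prefix returns `None`. Because the walk keeps the deepest match, a more specific prefix always wins over a covering aggregate.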

To learn site prefixes advertised by remote peers over the peering infrastructure, every MC and BR subscribes to the site prefix subservice of the PfR peering service. MCs both publish and receive site prefixes; BRs only receive them. An MC publishes the site prefixes it has learned from local egress flows by encoding the prefixes and their origins into a message. Every other MC and BR that subscribes to the peering service receives this message, decodes it, and adds the site prefixes to its site prefix database. Site prefixes will be explained in more detail in Chapter 8, “PfR Provisioning.”
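The publish/subscribe exchange can be sketched with an in-memory stand-in for the peering service. The `PeeringService` and `PfrNode` classes, the `"site-prefix"` subservice string, and the JSON encoding are all assumptions made for illustration; the real peering protocol and message format are not described here.

```python
import json
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Handler = Callable[[str], None]


class PeeringService:
    """Hypothetical in-memory stand-in for the PfR peering service."""

    def __init__(self) -> None:
        self.subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, subservice: str, handler: Handler) -> None:
        self.subscribers[subservice].append(handler)

    def publish(self, subservice: str, message: str) -> None:
        for handler in self.subscribers[subservice]:
            handler(message)


class PfrNode:
    """An MC or BR holding a site prefix database (prefix -> site ID)."""

    def __init__(self, peering: PeeringService, is_mc: bool) -> None:
        self.peering, self.is_mc = peering, is_mc
        self.site_prefixes: Dict[str, str] = {}
        # Every MC and BR subscribes to the site prefix subservice.
        peering.subscribe("site-prefix", self.on_message)

    def on_message(self, message: str) -> None:
        # Decode the advertisement and add it to the local database.
        self.site_prefixes.update(json.loads(message))

    def publish_local(self, learned: Dict[str, str]) -> None:
        # Only MCs publish prefixes learned from local egress flows.
        if not self.is_mc:
            raise PermissionError("BRs only receive site prefixes")
        self.site_prefixes.update(learned)
        self.peering.publish("site-prefix", json.dumps(learned))


peering = PeeringService()
branch_mc = PfrNode(peering, is_mc=True)
hub_br = PfrNode(peering, is_mc=False)
branch_mc.publish_local({"10.4.4.0/24": "10.4.0.41"})
```

In this sketch the publishing MC also receives its own message through its subscription; that re-adds entries it already holds and is harmless.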

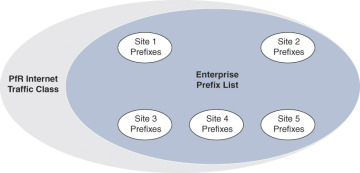

PfR Enterprise Prefixes

The enterprise-prefix prefix list defines the boundary for all the internal enterprise prefixes. A prefix that is not in the enterprise-prefix prefix list is considered a PfR Internet prefix. PfR does not monitor performance (delay, jitter, byte loss, or packet loss) for traffic destined to PfR Internet prefixes.

In Figure 7-9, all the network prefixes for remote sites (Sites 3, 4, and 5) have been dynamically learned. The central sites (Site 1 and Site 2) have been statically configured. The enterprise-prefix prefix list has been configured to include all the network prefixes in each of the sites so that PfR can monitor performance.

Figure 7-9 PfR Site and Enterprise Prefixes
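The enterprise boundary check amounts to a membership test against the enterprise-prefix list. The sketch below uses Python's standard `ipaddress` module; the two prefixes in `ENTERPRISE_PREFIXES` and the function name are hypothetical placeholders for whatever is actually configured on the Hub MC.

```python
import ipaddress

# Hypothetical enterprise-prefix list (the real one is configured on the Hub MC).
ENTERPRISE_PREFIXES = [ipaddress.ip_network(p) for p in ("10.0.0.0/8", "172.16.0.0/16")]


def classify_destination(destination: str) -> str:
    """Return 'enterprise' (performance is monitored) or 'internet' (it is not)."""
    addr = ipaddress.ip_address(destination)
    if any(addr in net for net in ENTERPRISE_PREFIXES):
        return "enterprise"
    return "internet"
```

With this list, `classify_destination("10.3.3.7")` returns `"enterprise"` and `classify_destination("198.51.100.5")` returns `"internet"`.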

WAN Interface Discovery

Border router WAN interfaces are connected to different SPs and have to be defined or discovered by PfR. This definition creates the relationship between the SPs and the administrative policies based on the path name in PfR. A typical example is to define an MPLS-VPN path as the preferred one for all business applications and the Internet-based path as a fallback path when there is a performance issue on the primary.

Hub and Transit Sites

In a PfR domain, a path name and a path identifier need to be configured for every WAN interface (DMVPN tunnel) on the hub site and all transit sites:

The path name uniquely identifies a transport network. For example, this book uses a primary transport network called MPLS for the MPLS-based transport and a secondary transport network called INET for the Internet-based transport.

The path identifier uniquely identifies a path on a site. This book uses path-id 1 for DMVPN tunnel 100 connected to MPLS and path-id 2 for tunnel 200 connected to INET.

IWAN supports multiple BRs for the same DMVPN network on the hub and transit sites only. The path identifier has been introduced in PfR to be able to track every BR individually.

Every BR on a hub or transit site periodically sends a discovery packet with path information to every discovered site. The discovery packets are created with the following default parameters:

Source IP address: Local MC IP address

Destination IP address: Remote site ID (remote MC IP address)

Source port: 18000

Destination port: 19000
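The default discovery packet parameters can be captured in a small data structure. `DiscoveryProbe` and `build_discovery_probes` are hypothetical names, and the payload fields only reflect the path name and path identifier that the probe is described as carrying; the actual packet layout is not shown here.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DiscoveryProbe:
    src_ip: str         # local MC IP address
    dst_ip: str         # remote site ID (remote MC IP address)
    path_name: str      # payload: transport name, e.g. "MPLS"
    path_id: int        # payload: identifies this BR on the path
    src_port: int = 18000
    dst_port: int = 19000


def build_discovery_probes(local_mc_ip: str, path_name: str, path_id: int,
                           discovered_sites: List[str]) -> List[DiscoveryProbe]:
    """One probe per discovered site, as a hub or transit BR sends each period."""
    return [DiscoveryProbe(local_mc_ip, site, path_name, path_id)
            for site in discovered_sites]


probes = build_discovery_probes("10.1.0.10", "MPLS", 1, ["10.2.0.20", "10.4.0.41"])
```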

Branch Sites

WAN interfaces are automatically discovered on Branch BRs. There is no need to configure the transport names over the WAN interfaces.

When a BR on a branch site receives a discovery probe from a central site (hub or transit site):

It extracts the path name and path identifier information from the probe payload.

It stores the mapping between the WAN interface and the path name.

It sends the interface name, path name, and path identifier information to the local MC.

The local MC knows that a new WAN interface is available and also knows that a BR is available on that path with the path identifier.

The BR associates the tunnel with the correct path information, enables the Performance Monitors, collects performance metrics, collects site prefix information, and identifies traffic that can be controlled.

This discovery process simplifies the deployment of PfR.
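The branch-side handling of a discovery probe follows the three steps listed above. The `BranchBR` class and the dictionary payload format are hypothetical; they only illustrate the data a branch BR keeps and forwards to its local MC.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple


@dataclass
class BranchBR:
    """Hypothetical branch BR that learns its WAN paths from discovery probes."""
    interface_paths: Dict[str, str] = field(default_factory=dict)
    sent_to_mc: List[Tuple[str, str, int]] = field(default_factory=list)

    def on_discovery_probe(self, interface: str, payload: Dict[str, Any]) -> None:
        # 1. Extract the path name and path identifier from the payload.
        path_name = str(payload["path_name"])
        path_id = int(payload["path_id"])
        # 2. Store the mapping between the WAN interface and the path name.
        self.interface_paths[interface] = path_name
        # 3. Send interface, path name, and path identifier to the local MC.
        self.sent_to_mc.append((interface, path_name, path_id))


br = BranchBR()
br.on_discovery_probe("Tunnel100", {"path_name": "MPLS", "path_id": 1})
br.on_discovery_probe("Tunnel100", {"path_name": "MPLS", "path_id": 2})
```

Two probes arriving on the same tunnel with different path identifiers, as from two hub BRs on the MPLS DMVPN, leave a single interface-to-path mapping but tell the MC about two BRs on that path.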

Channel

Channels are logical entities used to measure path performance per DSCP between two sites. A channel is defined by a unique combination of factors such as DSCP, interface, site, next hop, and path, and is created either from real user traffic observed on the BRs or from synthetic traffic generated by the BRs, called smart probes. A channel is added every time a new DSCP, interface, or site is added to the prefix database or when a new smart probe is received. PfR uses each channel to keep track of next-hop reachability and to collect performance metrics per DSCP.

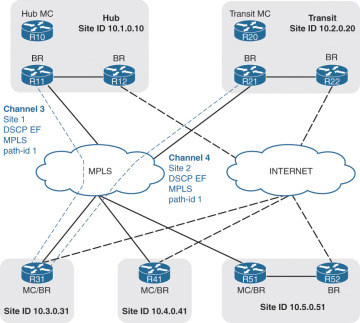

Figure 7-10 illustrates the channel creation over the MPLS path for DSCP EF. Every channel is used to track the next-hop availability and collect the performance metrics for the associated DSCP and destination site.

Figure 7-10 Channel Creation for Monitoring Performance Metrics

When a channel needs to be created on a path, PfR creates corresponding channels for any alternative paths to the same destination. This allows PfR to keep track of the performance for the destination prefix and DSCP for every DMVPN network. Channels are deemed active or standby based on the routing decisions and PfR policies.
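The rule that creating a channel on one path also creates channels on the alternative paths can be sketched as follows. `Channel` and `create_channels` are hypothetical, the interface is folded into the path (one DMVPN tunnel per path in this sketch), and the next-hop addresses are made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Channel:
    dscp: str
    site_id: str
    path_name: str
    next_hop: str


def create_channels(dscp: str, site_id: str,
                    next_hops_by_path: Dict[str, List[str]]) -> Set[Channel]:
    """Create channels for the DSCP toward the site on every path and next hop,
    so alternative paths are tracked alongside the one carrying traffic."""
    return {Channel(dscp, site_id, path, nh)
            for path, hops in next_hops_by_path.items()
            for nh in hops}


channels = create_channels("ef", "10.1.0.10", {
    "MPLS": ["192.168.100.11"],   # hypothetical tunnel 100 next hop
    "INET": ["192.168.200.12"],   # hypothetical tunnel 200 next hop
})
```

Returning a set of frozen dataclasses means repeated creation requests for the same combination do not produce duplicate channels.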

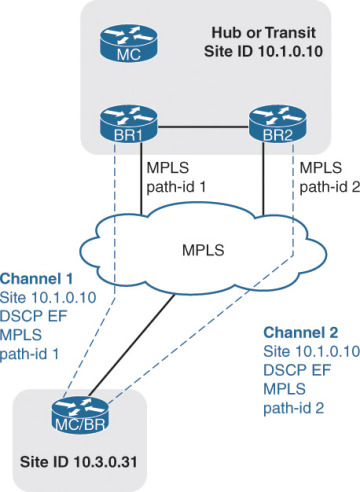

Multiple BRs can sit on a hub or transit site connected to the same DMVPN network. DMVPN hub routers function as NHRP NHSs for DMVPN and are the BRs for PfR. PfR supports multiple next-hop addresses for hub and transit sites only but limits each of the BRs to hosting only one DMVPN tunnel. This limitation is overcome by placing multiple BRs into a hub or transit site.

The combination of multiple next hops and transit sites creates a high level of availability. A destination prefix can be available across multiple central sites and multiple BRs. For example, if a next hop connected on the preferred path DMVPN tunnel 100 (MPLS) experiences delays, PfR is able to fail over to the other next hop available for DMVPN tunnel 100 that is connected to a different router. This avoids failing over to a less preferred path using DMVPN tunnel 200, which uses the Internet as a transport.

Figure 7-11 illustrates a branch with DSCP EF packets flowing to a hub or transit site that has two BRs connected to the MPLS DMVPN tunnel. Each path has the same path name (MPLS) and a unique path identifier (path-id 1 and path-id 2). If BR1 experiences performance issues, PfR fails over the affected traffic to BR2 over the same preferred path MPLS.

Figure 7-11 Channels per Next Hop

A parent route lookup is done during channel creation. PfR first checks whether an NHRP shortcut route is available; if not, it checks for parent routes in the order BGP, EIGRP, static, and RIB. If an NHRP shortcut route appears at any point, PfR selects it and stops using the parent route learned from the routing protocol. This behavior allows PfR to dynamically measure and use DMVPN shortcut paths, so site-to-site traffic is also protected according to the defined policies.
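The lookup order can be written as a simple preference list. The function name and the input format (a mapping from route source to next hop) are hypothetical; the order itself, NHRP shortcut first and then BGP, EIGRP, static, and RIB, is the one described above.

```python
from typing import Dict, Optional, Tuple

# Sources checked after the NHRP shortcut, in the order given in the text.
PARENT_ROUTE_ORDER = ("bgp", "eigrp", "static", "rib")


def select_parent_route(routes: Dict[str, str]) -> Optional[Tuple[str, str]]:
    """Return (source, next_hop) for the route used by a channel, or None.

    `routes` maps a route source ("nhrp", "bgp", ...) to its next hop and
    contains only the sources that currently have a route for the prefix."""
    if "nhrp" in routes:
        # An NHRP shortcut always wins over routing-protocol parent routes.
        return ("nhrp", routes["nhrp"])
    for source in PARENT_ROUTE_ORDER:
        if source in routes:
            return (source, routes[source])
    return None
```

Re-running the function whenever the routing table changes reproduces the switch to an NHRP shortcut as soon as one appears.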

A channel is deemed reachable if the following happens:

Traffic is received from the remote site.

An unreachable event is not received for two monitor intervals.

A channel is declared unreachable in both directions in the following circumstances:

The BR detects that no packets have been received from the peer within the unreachable timer. This timer is one second by default and can be tuned if needed.

The MC receives an unreachable event from a remote BR. The MC notifies the local BR to make the channel unreachable.

When a channel becomes unreachable, it is processed through the threshold crossing alert (TCA) messages, which will be described later in the chapter.
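The reachability rules can be approximated with a small state machine. This is a sketch under stated assumptions: `ChannelState` is hypothetical, both reachability conditions are treated as required together, times are plain seconds, and the 30-second monitor interval is an assumed default.

```python
from dataclasses import dataclass

NEVER = float("-inf")


@dataclass
class ChannelState:
    """Hypothetical channel reachability tracker (times in seconds)."""
    unreachable_timer: float = 1.0     # default unreachable timer from the text
    monitor_interval: float = 30.0     # assumed monitor interval
    last_rx: float = NEVER
    last_unreachable_event: float = NEVER
    reachable: bool = False

    def on_traffic(self, now: float) -> None:
        self.last_rx = now

    def on_remote_unreachable(self, now: float) -> None:
        # The MC relays an unreachable event raised by the remote BR.
        self.last_unreachable_event = now
        self.reachable = False

    def evaluate(self, now: float) -> bool:
        if now - self.last_rx > self.unreachable_timer:
            # No packets from the peer within the unreachable timer.
            self.reachable = False
        elif now - self.last_unreachable_event >= 2 * self.monitor_interval:
            # Traffic is flowing and no unreachable event for two intervals.
            self.reachable = True
        return self.reachable
```

A real implementation would raise the corresponding TCA on each transition to unreachable; the sketch only tracks the state.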

Smart Probes

Smart probes are synthetic packets that are generated from a BR and are primarily used for WAN interface discovery, delay calculation, and performance metric collection for standby channels. This synthetic traffic is generated only when real traffic is not present, except for periodic packets for one-way-delay measurement. The probes (RTP packets) are sent over the channels to the sites that have been discovered.

Smart probes are sent at regular intervals and fall into two categories:

Periodic probes: Periodic packets are sent to compute one-way delay. These probes are sent at regular intervals whether or not actual traffic is present. The interval is one-third of the monitoring interval; with the default monitoring interval of 30 seconds, periodic probes are sent every 10 seconds.

On-demand probes: These packets are sent only when there is no traffic on a channel. Twenty packets per second are generated per channel. As soon as user traffic is detected on a channel, the BR stops sending on-demand probes.
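The probe timing reduces to simple arithmetic. The function names are hypothetical, and the count ignores details such as probe start times; it only shows how the one-third rule and the 20-packets-per-second on-demand rate combine on a single channel.

```python
ON_DEMAND_PPS = 20  # on-demand probes per second, per channel


def periodic_probe_interval(monitor_interval_s: float = 30.0) -> float:
    """Periodic one-way-delay probes go out every third of the monitor interval."""
    return monitor_interval_s / 3


def probes_in_window(window_s: float, user_traffic: bool,
                     monitor_interval_s: float = 30.0) -> int:
    """Approximate smart probes sent on one channel during a window."""
    periodic = int(window_s // periodic_probe_interval(monitor_interval_s))
    # On-demand probes stop as soon as user traffic is seen on the channel.
    on_demand = 0 if user_traffic else int(window_s * ON_DEMAND_PPS)
    return periodic + on_demand
```

Over one 30-second monitor interval, an idle channel carries 3 periodic probes plus 600 on-demand probes, while a channel with user traffic carries only the 3 periodic ones.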

Traffic Class

PfR manages aggregations of flows called traffic classes (TCs). A traffic class is an aggregation of flows going to the same destination prefix, with the same DSCP or application name (if application-based policies are used).

Traffic classes are learned on the BR by monitoring a WAN interface’s egress traffic. This is based on a Performance Monitor instance applied on the external interface.

Traffic classes are divided into two groups:

Performance TCs: These are any TCs with performance metrics defined (delay, loss, jitter).

Non-performance TCs: The default group, these are the TCs that do not have any defined performance metrics (delay, loss, jitter), that is, TCs that do not have any match statements in the policy definition on the Hub MC.

For every TC, the PfR route control maintains a list of active channels (current exits) and standby channels.
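Traffic class aggregation can be sketched as grouping flows by destination prefix and DSCP, or by destination prefix and application name when application-based policies are used. The `Flow` record, the helper names, and the sample flows are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Set, Tuple

TcKey = Tuple[str, str]  # (destination prefix, DSCP or application name)


@dataclass(frozen=True)
class Flow:
    dst_prefix: str
    dscp: str
    app: str
    byte_count: int


def build_traffic_classes(flows: Iterable[Flow], app_policies: bool) -> Dict[TcKey, int]:
    """Aggregate egress flows into TCs and sum their bytes."""
    tcs: Dict[TcKey, int] = defaultdict(int)
    for f in flows:
        tcs[(f.dst_prefix, f.app if app_policies else f.dscp)] += f.byte_count
    return dict(tcs)


def is_performance_tc(tc: TcKey, policy_matches: Set[str]) -> bool:
    # Performance TCs match a policy statement; all others are non-performance.
    return tc[1] in policy_matches


flows = [
    Flow("10.4.4.0/24", "ef", "voice", 200),
    Flow("10.4.4.0/24", "ef", "voice", 100),
    Flow("10.4.4.0/24", "af41", "video", 500),
]
```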

Path Selection

Path and next-hop selection in PfR depends on the routing design in conjunction with the PfR policies. From a central site (hub and transit) to a branch site, there is only one possible next hop per path. From a branch site to a central site, multiple next hops can be available and may span multiple sites. PfR has to make a choice among all next hops available to reach the destination prefix of the traffic to control.

Direction from Central Sites (Hub and Transit) to Spokes

Each central site is a distinct site by itself and controls only traffic toward the spoke on the WAN paths to that site. PfR does not redirect traffic between central sites across the DCI or WAN core to reach a remote site. If the WAN design requires that all the links be considered from POP to spoke, use a single MC to control all BRs from both central sites.

Direction from Spoke to Central Sites (Hub and Transit)

The path selection from BR to a central site router can vary based on the overall network design. The following sections provide more information on PfR’s path selection process.

Active/Standby Next Hop

The spoke considers all the paths (multiple next hops) toward the central sites and maintains a list of active/standby candidate next hops per prefix and interface. Active and standby next hops are determined by the best routing metric, which identifies the preferred POP for a given prefix. If the best metric for a given prefix is on a specific central site, all the next hops on that site for all the paths are tagged as active (only for that prefix). A next hop is considered to have the best metric based on the following criteria:

Advertised mask length

BGP weight and local preference

EIGRP feasible distance (FD) and successor FD
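The active/standby tagging can be sketched as picking the best-metric next hop and then tagging every next hop at that site as active. `Candidate` and `tag_active_standby` are hypothetical; only mask length and BGP local preference are compared (BGP weight and the EIGRP metrics are left out for brevity), and the local preference values are made up.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Candidate:
    next_hop: str
    site: str
    path: str
    mask_len: int
    local_pref: int


def tag_active_standby(candidates: List[Candidate]) -> Dict[str, str]:
    """Tag all next hops at the best-metric site as active for this prefix."""
    best = max(candidates, key=lambda c: (c.mask_len, c.local_pref))
    return {c.next_hop: "active" if c.site == best.site else "standby"
            for c in candidates}


candidates = [
    Candidate("R11", "Site 1", "MPLS", 16, 200),
    Candidate("R12", "Site 1", "INET", 16, 150),
    Candidate("R21", "Site 2", "MPLS", 16, 100),
    Candidate("R22", "Site 2", "INET", 16, 100),
]
```

Here R12 is tagged active even though its own local preference is lower than R11's, because it sits on the same site as the best next hop.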

Transit Site Affinity

Transit Site Affinity (also called POP Preference) is used in the context of a multiple-transit-site deployment with the same set of prefixes advertised from multiple central sites. Some branches prefer a specific transit site over the other sites. The affinity of a branch to a transit site is configured by altering the routing metrics for prefix advertisements to the branch from the transit site. If one of the central sites advertising a specific prefix has the best next hop, the entire site is preferred over the other sites for all TCs to this destination prefix. Transit site preference is a higher-priority filter and takes precedence over path preference. The Transit Site Affinity feature was introduced in Cisco IWAN 2.1.

Path Preference

During the Policy Decision Point (PDP) phase, the exits are sorted first by available bandwidth, then by Transit Site Affinity, and finally by a third sort that places all primary path preferences at the front of the list, followed by fallback preferences. A common deployment use case is to define a primary path (MPLS) and a fallback path (INET). During PDP, MPLS is selected as the primary channel, and if INET is within policy, it is selected as the fallback.

With path preference configured, PfR first considers all the links belonging to the preferred path (that is, both the active and the standby links belonging to the preferred path) and then uses the fallback provider links.

Without path preference configured, PfR gives preference to the active channels and then the standby channels (active/standby is per prefix) with respect to the performance and policy decisions.

Transit Site Affinity and Path Preference Usage

Transit Site Affinity and path preference are used in combination to influence the next-hop selection per TC. For example, this book uses a topology with two central sites (Site 1 and Site 2) and two paths (MPLS and INET). Both central sites advertise the same prefix (10.10.0.0/16 as an example), and Site 1 has the best next hop for that prefix (R11 advertises 10.10.0.0/16 with the highest BGP local preference). With Transit Site Affinity enabled and a path preference that defines MPLS as the primary path and INET as the fallback path, the BR selects next hops in the following order:

R11 is the primary next hop for TCs with 10.10.0.0/16 as the destination prefix

Then R12 (same site, because of Transit Site Affinity)

Then R21 (Site 2, because of path preference)

Then R22
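The resulting order can be reproduced with a two-level sort key: site affinity first, then path preference. This sketch assumes R11 and R21 terminate the MPLS tunnel and R12 and R22 the INET tunnel, which is consistent with the order listed above; the available-bandwidth sort performed during PDP is omitted, and the function name is hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class NextHop:
    router: str
    site: str
    path: str


def order_next_hops(hops: List[NextHop], preferred_site: str, primary: str,
                    fallback: str, site_affinity: bool = True) -> List[str]:
    """Sort next hops by Transit Site Affinity, then by path preference."""
    path_rank = {primary: 0, fallback: 1}

    def key(h: NextHop) -> Tuple[int, int]:
        site_rank = 0 if site_affinity and h.site == preferred_site else 1
        return (site_rank, path_rank.get(h.path, 2))

    return [h.router for h in sorted(hops, key=key)]


hops = [
    NextHop("R22", "Site 2", "INET"),
    NextHop("R12", "Site 1", "INET"),
    NextHop("R21", "Site 2", "MPLS"),
    NextHop("R11", "Site 1", "MPLS"),
]
```

With affinity enabled, the call returns R11, R12, R21, R22. Disabling affinity in the sketch lets path preference dominate, so both MPLS next hops come before either INET next hop; this is why Transit Site Affinity is described as the higher-priority filter.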

Performance Monitoring

The PfR monitoring system interacts with the IOS component called Performance Monitor to achieve the following tasks:

Learning site prefixes and applications

Collecting and analyzing performance metrics per DSCP

Generating threshold crossing alerts

Generating out-of-policy reports

Performance Monitor is a common infrastructure within Cisco IOS that passively collects performance metrics, number of packets, number of bytes, statistics, and more within the router. In addition, Performance Monitor organizes the metrics, formats them, and makes the information accessible and presentable based upon user needs. Performance Monitor provides a central repository for other components to access these metrics.

PfR is a client of Performance Monitor: it builds a database from the performance monitoring metrics and uses that database to make path decisions. When the BR component is enabled on a device, PfR configures and activates three Performance Monitor instances (PMIs) on all discovered WAN interfaces of branch sites, or on all configured WAN interfaces of hub or transit sites. Enabling the PMIs on these interfaces is dynamic and fully automated by PfR, and this configuration does not appear in the startup or running configuration file.

The PMIs are:

Monitor 1: Site prefix learning (egress direction)

Monitor 2: Egress aggregate bandwidth per traffic class

Monitor 3: Performance measurements (ingress direction)

Monitor 3 contains two monitors: one dedicated to the business and media applications where failover time is critical (called quick monitor), and one allocated to the default traffic.

PfR policies are applied either to an application definition or to DSCP. Performance is measured only per DSCP because SPs can differentiate traffic only based on DSCP and not based on application.

Performance is measured between two sites where there is user traffic. This could be between a hub and a spoke, or between two spokes; the mechanism remains the same.

The egress aggregate monitor instance captures the number of bytes and packets per TC on egress on the source site. This provides the bandwidth utilization per TC.

The ingress per DSCP monitor instance collects the performance metrics per DSCP (channel) on ingress on the destination site. Policies are applied to either application or DSCP. However, performance is measured per DSCP because SPs differentiate traffic only based on DSCP and not based on discovered application definitions. All TCs that have the same DSCP value get the same QoS treatment from the provider, and therefore there is no real need to collect performance metrics per application-based TC.

PfR passively collects metrics from real user traffic and also collects metrics on alternative paths. To do so, the source MC instructs the BRs connected to the secondary paths to generate smart probes to the destination site. The PMI on the remote site collects statistics from these probes in the same way it would for actual user traffic. Thus, the health of a secondary path is known before it is used for application traffic, and PfR can choose the best of the available paths on an application or DSCP basis.

Figure 7-12 illustrates PfR performance measurement with network traffic flowing from left to right. On the ingress BRs (BRs on the right), PfR monitors performance per channel. On the egress BRs (BRs on the left), PfR collects the bandwidth per TC. Metrics are collected from the user traffic on the active path and based on smart probes on the standby paths.

Figure 7-12 PfR Performance Measurement via Performance Monitor

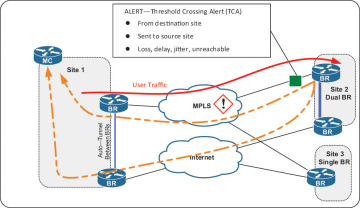

Threshold Crossing Alert (TCA)

Threshold crossing alert (TCA) notifications are alerts for when network traffic exceeds a set threshold for a specific PfR policy. TCAs are generated from the PMI attached to the BR’s ingress WAN interfaces and smart probes. Figure 7-13 displays a TCA being raised on the destination BR.

Figure 7-13 Threshold Crossing Alert (TCA)

Threshold crossing alerts are managed on both the destination BR and source MC for the following scenarios:

The destination BR receives performance TCA notifications from the PMI, which monitors the ingress traffic statistics and raises a TCA when a threshold crossing event occurs.

The BR forwards the performance TCA notifications to the MC on the source site that actually generates the traffic. This source MC is selected from the site prefix database based on the source prefix of the traffic. TCA notifications are transmitted via multiple paths for reliable delivery.

The source MC receives the TCA notifications from the destination BR and translates the TCA notifications (that contain performance statistics) to an out-of-policy (OOP) event for the corresponding channel.

The source MC waits for the TCA processing delay time for all the notifications to arrive, then starts processing the TCA. The processing involves selecting TCs that are affected by the TCA and moving them to an alternative path.
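The two halves of TCA handling, threshold checking on the destination BR and rerouting on the source MC, can be sketched as two functions. The threshold values, the channel-ID strings, and the function names are hypothetical, and the TCA processing delay is assumed to have already elapsed before `reroute_on_tca` runs.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class PerformancePolicy:
    max_delay_ms: float
    max_loss_pct: float
    max_jitter_ms: float


def crossed_thresholds(metrics: Dict[str, float], policy: PerformancePolicy) -> List[str]:
    """Destination BR: return the metrics that crossed the policy thresholds."""
    limits = {"delay_ms": policy.max_delay_ms,
              "loss_pct": policy.max_loss_pct,
              "jitter_ms": policy.max_jitter_ms}
    return [name for name, limit in limits.items() if metrics.get(name, 0.0) > limit]


def reroute_on_tca(tca_channels: Iterable[str], tc_channel: Dict[str, str],
                   alternative: Dict[str, str]) -> Dict[str, str]:
    """Source MC: treat each reported channel as out of policy and move the
    TCs currently using it to that channel's alternative, if one exists."""
    out_of_policy = set(tca_channels)
    return {tc: alternative[ch] for tc, ch in tc_channel.items()
            if ch in out_of_policy and ch in alternative}


policy = PerformancePolicy(max_delay_ms=150, max_loss_pct=1.0, max_jitter_ms=30)
```

Only the TCs on the reported channel move; TCs on other channels, even toward the same site, are left alone.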

Path Enforcement

PfR uses the Route Control Enforcement module for optimal traffic redirection and path enforcement. This module performs lookups and reroutes traffic similarly to policy-based routing but without using an ACL. The MC makes path decisions for every unique TC. The MC picks the next hop for a TC’s path and instructs the local BR how to forward packets within that TC.

Because of how path enforcement is implemented, the next hop has to be directly connected to each BR. When there are multiple BRs on a site, PfR sets up an mGRE tunnel between all of them to accommodate path enforcement. Every time a WAN exit point is discovered or an up/down interface notification is sent to the MC, the MC sends this notification to all other BRs in the site. An endpoint is added to the mGRE tunnel pointing toward this BR as a result.

When packets are received on the LAN side of a BR, the route control functionality determines if it must exit via a local WAN interface or via another BR. If the next hop is via another BR, the packet is sent out on the tunnel toward that BR. Thus the packet arrives at the destination BR within the same site. Route control gets the packet, looks at the channel identifier, and selects the outgoing interface. The packet is then sent out of this interface across the WAN.
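The forwarding decision on a site with several BRs can be sketched as follows. The BR names, channel IDs, and interface mapping in `SITE_EXITS` are hypothetical, and the mGRE hop is represented as a label rather than real encapsulation.

```python
from typing import Dict, List

# Hypothetical site: BR name -> {channel ID: local WAN interface}.
SITE_EXITS: Dict[str, Dict[str, str]] = {
    "BR1": {"MPLS/ef": "Tunnel100"},
    "BR2": {"INET/ef": "Tunnel200"},
}


def enforce_path(ingress_br: str, channel: str) -> List[str]:
    """Return the hops a LAN packet takes inside the site for the chosen channel."""
    hops = [ingress_br]
    current = ingress_br
    if channel not in SITE_EXITS[current]:
        # The next hop is behind another BR: use the inter-BR mGRE tunnel.
        current = next(br for br, exits in SITE_EXITS.items() if channel in exits)
        hops.append(f"mGRE to {current}")
    # Route control on the egress BR maps the channel ID to its WAN interface.
    hops.append(SITE_EXITS[current][channel])
    return hops
```

A packet entering BR1 for the INET channel is handed across the mGRE tunnel to BR2, which then sends it out of Tunnel200.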