Understanding the HA Options Available

Several aspects of high availability (HA) matter in a Secure Access deployment. The first is communication between the PANs and the other ISE nodes for database replication and synchronization, and between the PSNs and Monitoring nodes for logging. The second is ensuring that authentication sessions from the network access devices (NADs) still reach a PSN during a WAN outage, and that a NAD recognizes when a PSN is no longer active and sends its authentication requests to an active PSN instead.

Primary and Secondary Nodes

PANs and Monitoring & Troubleshooting (MnT) nodes both employ the concept of primary and secondary nodes, but they operate very differently. Let’s start with the easiest one first, the MnT node.

Monitoring & Troubleshooting Nodes

As you know, the MnT node is responsible for the logging and reporting functions of ISE. All PSNs will send their logging data to the MnT node as syslog messages (UDP port 20514).

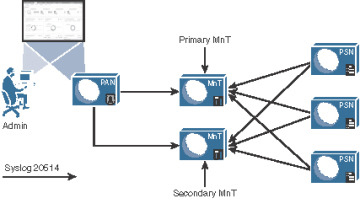

When there are two monitoring nodes in an ISE deployment, all ISE nodes send their audit data to both monitoring nodes at the same time. Figure 18-9 displays this logging flow.

Figure 18-9 Logging Flows

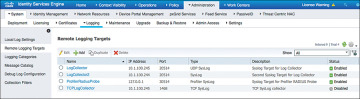

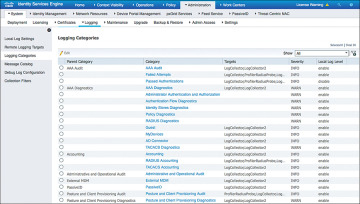

The active/active nature of the MnT nodes can be viewed easily in the administrative console, as the two MnTs get defined as LogCollector and LogCollector2. Figures 18-10 and 18-11 display the log collector definitions and the logging categories, respectively.

Figure 18-10 Logging Targets

Figure 18-11 Logging Categories

Upon an MnT failure, all nodes continue to send logs to the remaining MnT node. Therefore, no logs are lost. The PAN retrieves all log and report data from the secondary MnT node, so there is no loss of administrative function, either. However, the log database is not synchronized between the primary and secondary MnT nodes. Therefore, when the failed MnT node returns to service, a backup and restore of the monitoring node is required to bring the two MnT nodes back into complete sync.
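The following minimal sketch models why the backup and restore is needed. It is an illustration only, not how ISE implements logging: the `MntNode` class and `send_log` function are hypothetical names, and the only details taken from the text are that every node logs to both collectors (LogCollector and LogCollector2) and that the two log databases are not synchronized with each other.

```python
from dataclasses import dataclass, field


@dataclass
class MntNode:
    """Toy model of an MnT node: a name, an up/down flag, and the logs it holds."""
    name: str
    up: bool = True
    received: list = field(default_factory=list)


def send_log(message, collectors):
    """Deliver a message to every collector that is currently up."""
    for node in collectors:
        if node.up:
            node.received.append(message)


primary = MntNode("LogCollector")
secondary = MntNode("LogCollector2")
collectors = [primary, secondary]

send_log("auth-1", collectors)
primary.up = False              # primary MnT fails
send_log("auth-2", collectors)  # only the secondary receives this one
primary.up = True               # primary MnT returns to service
send_log("auth-3", collectors)

# Logs the secondary holds that the primary never received.
missing_on_primary = [m for m in secondary.received if m not in primary.received]
print(missing_on_primary)  # ['auth-2']
```

The surviving node holds the complete log, so nothing is lost, but the returning node has a gap that nothing fills automatically.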

Policy Administration Nodes

The PAN is responsible for providing not only an administrative GUI for ISE but also the critical function of database synchronization of all ISE nodes. All ISE nodes maintain a full copy of the database, with the master database existing on the primary PAN.

A PSN may receive data about a guest user, and when that occurs it must sync that data to the primary PAN. The primary PAN then synchronizes that data out to all the ISE nodes in the deployment.

Because this synchronization role is so demanding, and having only a single source of truth for the data in the database is so critical, failing over to the secondary PAN is usually a manual process. If the primary PAN goes offline, no synchronization occurs until the secondary PAN is promoted to primary. Once it becomes the primary, it takes over all synchronization responsibility. This is sometimes referred to as a “warm spare” type of HA.

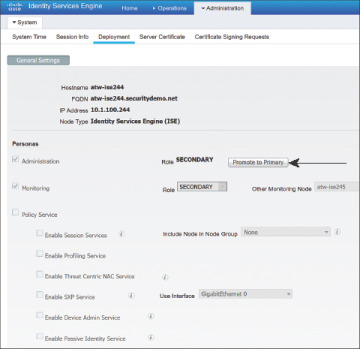

Promote the Secondary PAN to Primary

To promote the secondary PAN to primary, connect to the GUI on the secondary PAN and perform the following steps:

Step 1. Choose Administration > System > Deployment.

Step 2. Click Promote to Primary. Figure 18-12 illustrates the Promote to Primary option available on the secondary node.

Figure 18-12 Promoting a Secondary PAN to Primary

Auto PAN Failover

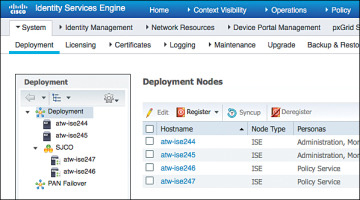

An automated promotion function was added to ISE beginning with version 1.4. It requires there to be two admin nodes (obviously) and at least one other non-admin node in the deployment.

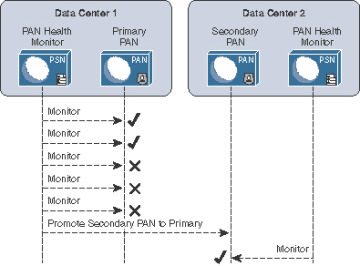

The non-admin node will act as a health check function for the admin node(s), probing the primary admin node at specified intervals. The Health Check Node will promote the secondary admin node when the primary fails a configurable number of probes. Once the original secondary node is promoted, it is probed. Figure 18-13 illustrates the process.

Figure 18-13 Promoting a Secondary PAN to Primary with Automated Promotion

As of ISE version 2.1, there is no ability to automatically sync the original primary PAN back into the ISE cube. That is still a manual process.

Configure Automatic Failover for the Primary PAN

For the configuration to be available, there must be two PANs and at least one non-PAN in the deployment.

From the ISE GUI, perform the following steps:

Step 1. Navigate to Administration > System > Deployment.

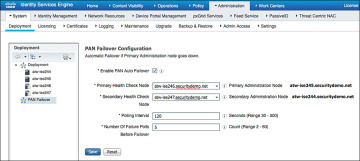

Step 2. Click PAN Failover in the left pane, as shown in Figure 18-14.

Figure 18-14 PAN Failover

Step 3. Check the Enable PAN Auto Failover check box.

Step 4. Select the Health Check Nodes from the drop-down lists. Notice the primary PAN and secondary are listed to the right of the selected Health Check Nodes, as shown in Figure 18-14.

Step 5. In the Polling Interval field, set the polling interval. The interval is in seconds and can be set between 30 and 300 (5 minutes).

Step 6. In the Number of Failure Polls Before Failover field, enter the number of failed probes that have to occur before failover is initiated. The valid range is 2 to 60 consecutive failed probes.

Step 7. Click Save.
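When choosing values for Steps 5 and 6, it helps to estimate how long a primary PAN outage can last before failover is triggered. The sketch below is a rough model that assumes probes are evenly spaced and that failover starts immediately after the last failed poll; it does not account for the time the secondary PAN needs to complete its promotion. The function name is hypothetical; the valid ranges come from the steps above.

```python
def pan_failover_detection_seconds(polling_interval: int, failure_polls: int) -> int:
    """Approximate time spent on failed polls before auto failover is triggered.

    polling_interval: seconds between health checks (30 to 300).
    failure_polls: consecutive failed polls required (2 to 60).
    """
    if not 30 <= polling_interval <= 300:
        raise ValueError("polling interval must be between 30 and 300 seconds")
    if not 2 <= failure_polls <= 60:
        raise ValueError("failure polls must be between 2 and 60")
    return polling_interval * failure_polls


fastest = pan_failover_detection_seconds(30, 2)    # 60 seconds
slowest = pan_failover_detection_seconds(300, 60)  # 18000 seconds (5 hours)
print(fastest, slowest)
```

Under these assumptions, the most aggressive settings detect a failure in about a minute, while the most conservative settings wait roughly five hours.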

Policy Service Nodes and Node Groups

PSNs do not necessarily need to have an HA type of configuration. Every ISE node maintains a full copy of the database, and the NADs have their own detection of a “dead” RADIUS server, which triggers the NAD to send AAA communication to the next RADIUS server in the list.

However, ISE has the concept of a node group. Node groups are made up of PSNs, where the PSNs maintain a heartbeat with each other. Beginning with ISE 1.3, the PSNs can be in different subnets or can be Layer 2 adjacent. In older ISE versions, the PSNs required the use of multicast, but starting in version 1.3 they use direct encrypted TCP-based communication instead:

TCP/7800: Used for peer communication

TCP/7802: Used for failure detection
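If the PSNs in a node group sit in different subnets, any firewall or ACL between them has to permit these two ports. The following sketch is one way to test basic TCP reachability from a host in the same network segment as a PSN. It is only a path check under stated assumptions: a successful connection shows that the port is open, not that the heartbeat itself is healthy, and the function names and the example hostname are hypothetical.

```python
import socket

# Ports taken from the text above.
NODE_GROUP_PORTS = {7800: "peer communication", 7802: "failure detection"}


def tcp_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False


def check_node_group_peer(host: str, timeout: float = 2.0) -> dict:
    """Check both node group ports on one peer; returns {port: reachable}."""
    return {port: tcp_port_open(host, port, timeout) for port in NODE_GROUP_PORTS}


if __name__ == "__main__":
    # "psn2.example.com" is a placeholder for the peer PSN's FQDN.
    for port, ok in check_node_group_peer("psn2.example.com").items():
        state = "open" if ok else "blocked or closed"
        print(f"TCP/{port} ({NODE_GROUP_PORTS[port]}): {state}")
```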

If a PSN goes down and orphans a URL-redirected session, one of the other PSNs in the node group sends a Change of Authorization (CoA) to the NAD so that the endpoint can restart the session establishment with a new PSN.

Node groups do have another function, which is entirely related to data replication. ISE used a serial replication model in ISE 1.0, 1.1, and 1.1.x, meaning that all data had to go through the primary PAN and it sent the data objects to every other node, waiting for an acknowledgement for each piece of data before sending the next one in line.

Beginning with ISE 1.2, ISE uses a common replication framework known as JGroups (http://bfy.tw/5vYC). One of the benefits of JGroups is the way it handles replication in a grouped or segmented fashion. JGroups enables replication directly between local peers without having to go back through a centralized master, and node groups are used to define those segments or groups of peers.

So, when a member of a node group learns endpoint attributes (profiling), it is able to send the information directly to the other members of the node group. However, when that data needs to be replicated globally (to all PSNs), the JGroups communication must still go through the primary PAN, which in turn replicates it to all the other PSNs.

Node groups are most commonly used when deploying the PSNs behind a load balancer; however, there is no reason node groups could not be used with regionally located PSNs. You would not want to use a node group with PSNs that are geographically and logically separate.

Create a Node Group

To create a node group, from the ISE GUI, perform the following steps:

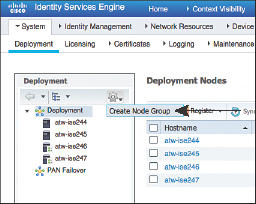

Step 1. Choose Administration > System > Deployment.

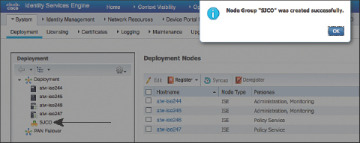

Step 2. In the Deployment pane on the left side of the screen, click the cog icon and choose Create Node Group, as shown in Figure 18-15.

Figure 18-15 Choosing to Create a Node Group

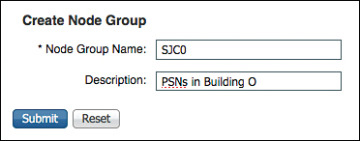

Step 3. On the Create Node Group screen, shown in Figure 18-16, enter in the Node Group Name field a name for the node group. Use a name that also helps describe the location of the group. In this example, SJCO was used to represent San Jose, Building O.

Figure 18-16 Node Group Creation

Step 4. (Optional) In the Description field, enter a more detailed description that helps to identify exactly where the node group is (for example, PSNs in Building O). Click Submit.

Step 5. Click OK in the success popup window, as shown in Figure 18-17. Also notice the appearance of the node group in the left pane.

Figure 18-17 Success Popup

Add the Policy Service Nodes to the Node Group

To add the PSNs to the node group, from the ISE GUI, perform the following steps:

Step 1. Choose Administration > System > Deployment.

Step 2. Select one of the PSNs to add to the node group.

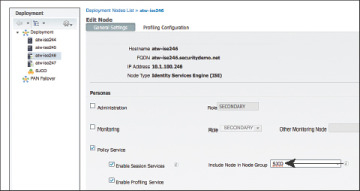

Step 3. Click the Include Node in Node Group drop-down arrow and select the newly created group, as shown in Figure 18-18.

Figure 18-18 Assigning a Node Group

Step 4. Click Save.

Step 5. Repeat the preceding steps for each PSN that should be part of the node group.

Figure 18-19 shows the reorganization of the PSNs within the node group in the Deployment navigation pane on the left side.

Figure 18-19 Reorganized Deployment Navigation Pane

Using Load Balancers

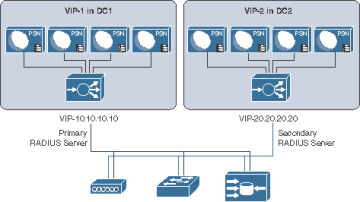

One high-availability option that is growing in popularity for Cisco ISE deployments is the use of load balancers. Load balancer adoption with ISE deployments has skyrocketed over the years because it can significantly simplify administration and designs in larger deployments. As Figure 18-20 illustrates, with load balancing, the NADs have to be configured with only one IP address per set of ISE PSNs, removing a lot of the complexity in the NAD configuration. The load balancer itself takes care of monitoring the ISE PSNs and removing them from service if they are down and allows you to scale more nodes behind the virtual IP (VIP) without ever touching the network device configuration again.

Figure 18-20 Load-Balanced PSN Clusters

Craig Hyps, a Principal Technical Marketing Engineer for ISE at Cisco, has written what is considered to be the definitive guide on load balancing with ISE, “How To: Cisco & F5 Deployment Guide: ISE Load Balancing Using BIG-IP.” Craig wrote the guide based on using F5 load balancers, but the principles are identical regardless of which load balancer you choose to implement. You can find his guide here: https://communities.cisco.com/docs/DOC-68198.

Instead of replicating that entire large and detailed guide in this chapter, this section simply focuses on the basic principles that must be followed when using ISE with load balancers.

General Guidelines

When using a load balancer, you must ensure the following:

Each PSN must be reachable by the PAN/MnT directly, without having to go through Network Address Translation (NAT). This sometimes is referred to as routed mode or pass-through mode.

Each PSN must also be reachable directly from the endpoint.

When the PSN sends a URL-Redirection to the NAD, it uses the fully qualified domain name (FQDN) from the configuration, not the virtual IP (VIP) address.

You might want to use Subject Alternative Names (SAN) in the certificate to include the FQDN of the load-balancer VIP.

The same PSN is used for the entire session. User persistence, sometimes called stickiness, needs to be based on Calling-Station-ID.

The VIP gets listed as the RADIUS server of each NAD for all 802.1X-related AAA.

This includes both authentication and accounting packets.

Some load balancers use a separate VIP for each protocol type.

The list of RADIUS servers allowed to perform dynamic-authorizations (also known as Change of Authorization [CoA]) on the NAD should use the real IP addresses of the PSNs, not the VIP.

The VIP could be used for the CoAs, if the load balancer is performing source NAT (SNAT) for the CoAs sent from the PSNs.

Load balancers should be configured to use test probes to ensure the PSNs are still “alive and well.”

A probe should be configured to ensure RADIUS is responding.

HTTPS should also be checked.

If either probe fails, the PSN should be taken out of service.

A PSN must be marked dead and taken out of service in the load balancer before the NAD’s built-in failover occurs.

Since the load balancer(s) should be configured to perform health checks of the RADIUS service on the PSN(s), the load balancer(s) must be configured as NADs in ISE so their test authentications may be answered correctly.
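Two of these guidelines, persistence based on Calling-Station-ID and removing a PSN from service when either probe fails, can be sketched in a few lines. This is an illustration of the idea only. Real load balancers typically keep a persistence table rather than hashing, and the simple hash used here would remap some sessions whenever pool membership changes. The `Psn` class, the `pick_psn` function, and the node names are hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Psn:
    name: str
    radius_ok: bool = True  # result of the RADIUS health probe
    https_ok: bool = True   # result of the HTTPS health probe

    @property
    def in_service(self) -> bool:
        # If either probe fails, the PSN is taken out of service.
        return self.radius_ok and self.https_ok


def pick_psn(calling_station_id: str, pool: list) -> Psn:
    """Map a Calling-Station-ID (endpoint MAC) to one healthy PSN, consistently."""
    healthy = [p for p in pool if p.in_service]
    if not healthy:
        raise RuntimeError("no PSN in service behind the VIP")
    # Normalize common MAC formats so the same endpoint always yields the same key.
    key = calling_station_id.lower()
    for separator in "-:.":
        key = key.replace(separator, "")
    digest = hashlib.sha256(key.encode("ascii")).digest()
    return healthy[int.from_bytes(digest[:8], "big") % len(healthy)]


pool = [Psn("psn1"), Psn("psn2"), Psn("psn3")]
first = pick_psn("00-11-22-AA-BB-CC", pool)
again = pick_psn("00:11:22:aa:bb:cc", pool)
print(first.name == again.name)  # True: same endpoint, same PSN
```

Because the key is the endpoint's MAC address rather than the NAD's source IP, authentication and accounting packets for one endpoint land on the same PSN regardless of which NAD sends them.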

Failure Scenarios

If a single PSN fails, the load balancer takes that PSN out of service and spreads the load over the remaining PSNs. When the failed PSN is returned to service, the load balancer adds it back into the rotation. When node groups are used along with a load balancer, another node group member issues a CoA-reauth for any sessions that were still being established on the failed PSN. This CoA causes the session to begin again, and the load balancer directs the new authentication to a different PSN.

NADs have some built-in capabilities to detect when the configured RADIUS server is “dead” and automatically fail over to the next RADIUS server configured. When using a load balancer, the RADIUS server IP address is actually the VIP address. So, if the entire VIP is unreachable (for example, the load balancer has died), the NAD should quickly fail over to the next RADIUS server in the list. That RADIUS server could be another VIP in a second data center or another backup RADIUS server.
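The guideline that a failed PSN must be removed by the load balancer before the NAD's own dead-server detection fires can be checked with simple arithmetic. The model below is deliberately simplified and rests on assumptions: the load balancer declares a PSN down after a fixed number of consecutive failed probes, and the NAD declares the RADIUS server dead after its initial request plus all retransmissions have each timed out. Actual dead-detection criteria vary by platform and configuration, and all function names and sample values are hypothetical.

```python
def lb_detection_seconds(probe_interval: float, failed_probes: int) -> float:
    """Time for the load balancer to mark a PSN down (simplified model)."""
    return probe_interval * failed_probes


def nad_detection_seconds(timeout: float, retransmits: int) -> float:
    """Time for the NAD to give up on a RADIUS server (simplified model).

    One initial attempt plus each retransmission waits for the full timeout.
    """
    return timeout * (1 + retransmits)


def lb_reacts_first(probe_interval, failed_probes, timeout, retransmits) -> bool:
    """True if the load balancer removes the PSN before the NAD fails over."""
    return (lb_detection_seconds(probe_interval, failed_probes)
            < nad_detection_seconds(timeout, retransmits))


# Sample values only: probes every 5 s with 2 failures, versus a NAD using
# a 5 s timeout and 3 retransmissions.
print(lb_detection_seconds(5, 2))   # 10
print(nad_detection_seconds(5, 3))  # 20
print(lb_reacts_first(5, 2, 5, 3))  # True
```

If the check returns False for your values, the NAD may abandon the whole VIP because of a single failed PSN, which defeats the purpose of the load balancer.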

Anycast HA for ISE PSNs

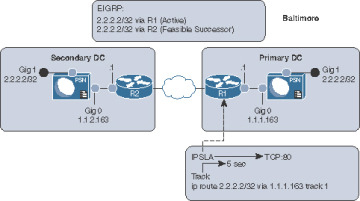

This section exists thanks to a friend of the author who is also one of the most talented and gifted technologists roaming the earth today. E. Pete Karelis, CCIE No. 8068, designed this high-availability solution for a small ISE deployment that had two data centers. Figure 18-21 illustrates the network architecture.

Figure 18-21 Network Drawing and IPSLA

Anycast is a networking technique where the same IP address exists in multiple places within the network. In this case, the same IP address (2.2.2.2) is assigned to the Gig1 interface on every PSN. Each Gig1 interface is connected to an isolated VLAN (or port group in VMware), so that the PSN sees the interface as “up” and connected with the assigned IP address (2.2.2.2). The default gateway (router) in each data center is configured with a static route to 2.2.2.2/32 with the Gig0 IP address of the local PSN as the next hop. Those static routes are redistributed into the routing protocol; in this case EIGRP is used. Anycast relies on the routing protocol to ensure that traffic destined to the Anycast address (2.2.2.2) is sent to the closest instance of that IP address.

After setting up Anycast to route 2.2.2.2 to the ISE PSN, Pete used EIGRP metrics to ensure that all routes preferred the primary data center, with the secondary data center route listed as the feasible successor (FS). With EIGRP, there is less than a 1-second delay when a route (the successor) is replaced with the backup route (the feasible successor).

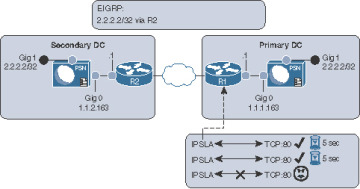

Now, how do we make the successor route drop from the routing table when the ISE node goes down? Pete configured an IP service-level agreement (IPSLA) on the router that checked the status of the HTTP service on the ISE PSN in the data center every 5 seconds. If the HTTP service stops responding on the active ISE PSN, then the route is removed and the FS takes over, causing all the traffic for 2.2.2.2 to be sent to the PSN in the secondary data center. Figure 18-22 illustrates the IPSLA function, and when it occurs the only route left in the routing table is to the router at the secondary data center.

Figure 18-22 IPSLA in Action

All network devices are configured to use the Anycast address (2.2.2.2) as the only RADIUS server in their configuration. The RADIUS requests will always be sent to whichever ISE node is active and closest. Authentications originating within the secondary data center go to the local PSN.
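The failover behavior can be modeled as a simple selection rule: among the routes to 2.2.2.2 whose health probe is still up, the lowest metric wins. The sketch below shows that rule from the point of view of a network device nearer the primary data center. It is a toy model rather than an EIGRP implementation, and the class name, site names, and metric values are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AnycastRoute:
    site: str
    metric: int            # lower is preferred
    probe_up: bool = True  # state of the tracked IP SLA probe for this PSN


def best_route(routes: List[AnycastRoute]) -> Optional[AnycastRoute]:
    """Return the lowest-metric route whose probe is up, or None if all are down."""
    candidates = [r for r in routes if r.probe_up]
    return min(candidates, key=lambda r: r.metric) if candidates else None


routes = [
    AnycastRoute("primary-dc", metric=2816),    # successor
    AnycastRoute("secondary-dc", metric=5120),  # feasible successor
]

print(best_route(routes).site)  # primary-dc

routes[0].probe_up = False      # HTTP on the primary PSN stops responding
print(best_route(routes).site)  # secondary-dc
```

If both probes fail, `best_route` returns None, which corresponds to no route to 2.2.2.2 remaining in the routing table.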

Example 18-2 shows the interface configuration on the ISE PSN. The Gig0 interface is the actual routable IP address of the PSN, while Gig1 is in a VLAN to nowhere using the Anycast IP address.

Example 18-2 ISE Interface Configuration

interface gig 0

!Actual IP of Node

ip address 1.1.1.163 255.255.255.0

interface gig 1

!Anycast VIP assigned to all PSN nodes on G1

ip address 2.2.2.2 255.255.255.255

ip default-gateway [Real Gateway for Gig0]

!note no static routes needed.

Example 18-3 shows the IPSLA configuration on the router, which tests port 80 on the PSN every 5 seconds and times out after 1000 msec. When that timeout occurs, the IP SLA object is marked as “down,” the tracked object changes state, and the static route is removed from the route table.

Example 18-3 IPSLA Configuration

ip sla 1

!Test TCP to port 80 to the actual IP of the node.

!"control disable" is necessary, since you are connecting to an

!actual host instead of an SLA responder

tcp-connect 1.1.1.163 80 control disable

! Consider the SLA as down if response takes longer than 1000msec

threshold 1000

! Timeout after 1000 msec.

timeout 1000

!Test every 5 Seconds:

frequency 5

ip sla schedule 1 life forever start-time now

track 1 ip sla 1

ip route 2.2.2.2 255.255.255.255 1.1.1.163 track 1

Example 18-4 shows the route redistribution configuration where the EIGRP metrics are applied. Pete was able to use the metrics that he chose specifically because he was very familiar with his network. His warning to others attempting the same thing is to be familiar with your network or to test thoroughly when identifying the metrics that would work for you.

Remember, you must avoid equal-cost, multiple-path routes, as this state could potentially introduce problems if RADIUS requests are not sticking to a single node. Furthermore, this technique is not limited to only two sites; Pete has since added a third location to the configuration and it works perfectly.

Example 18-4 Route Redistribution

router eigrp [Autonomous-System-Number]

redistribute static route-map STATIC-TO-EIGRP

route-map STATIC-TO-EIGRP permit 20

match ip address prefix-list ISE_VIP

!Set metrics correctly

set metric 1000000 1 255 1 1500

ip prefix-list ISE_VIP seq 5 permit 2.2.2.2/32
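To see how the five values in the set metric command affect route preference, it can help to compute the resulting composite metric. The sketch below uses the classic EIGRP formula with default K values (K1 = K3 = 1, K2 = K4 = K5 = 0), under which only bandwidth (in kbps) and delay (in tens of microseconds) contribute; reliability, load, and MTU do not. It yields the seed metric at the redistributing router only. Downstream routers recompute the metric from the minimum bandwidth and cumulative delay along the path, and EIGRP named mode with wide metrics uses a different scale. The function name and the second site's delay value are hypothetical.

```python
def eigrp_classic_metric(bandwidth_kbps: int, delay_tens_usec: int) -> int:
    """Classic EIGRP composite metric with default K values.

    metric = 256 * (10^7 / bandwidth_kbps + delay), using integer division.
    """
    if bandwidth_kbps <= 0:
        raise ValueError("bandwidth must be a positive number of kbps")
    return 256 * (10_000_000 // bandwidth_kbps + delay_tens_usec)


# Values from Example 18-4: set metric 1000000 1 255 1 1500
primary_seed = eigrp_classic_metric(1_000_000, 1)

# Hypothetical second site that sets a higher delay so it is less preferred.
secondary_seed = eigrp_classic_metric(1_000_000, 10)

print(primary_seed, secondary_seed)  # 2816 5120
```

Giving each site a distinct seed metric is one way to steer traffic to a single preferred PSN, but the end-to-end metrics still depend on your topology, so verify the routing table on the NADs' upstream routers before relying on it.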