Multitenant Routing Considerations

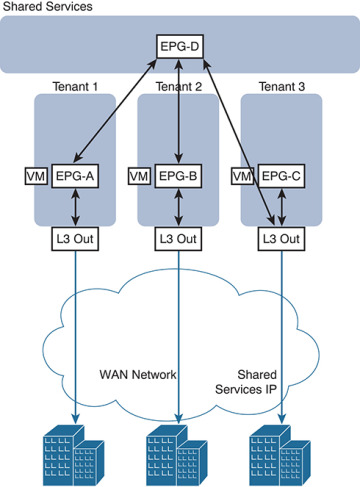

A common requirement of multitenant cloud infrastructures is the capability to provide shared services to hosted tenants. Such services include Active Directory, DNS, and storage. Figure 6-22 illustrates this requirement.

In Figure 6-22, Tenants 1, 2, and 3 have locally connected servers, respectively part of EPGs A, B, and C. Each tenant has an L3 Out connection linking remote branch offices to this data center partition. Remote clients for Tenant 1 need to establish communication with servers connected to EPG A. Servers hosted in EPG A need access to shared services hosted in EPG D in a different tenant. EPG D provides shared services to the servers hosted in EPGs A and B and to the remote users of Tenant 3.

In this design, each tenant has a dedicated L3 Out connection to the remote offices. The subnets of EPG A are announced to the remote offices for Tenant 1, the subnets in EPG B are announced to the remote offices of Tenant 2, and so on. In addition, some of the shared services may be used from the remote offices, as in the case of Tenant 3. In this case, the subnets of EPG D are announced to the remote offices of Tenant 3.

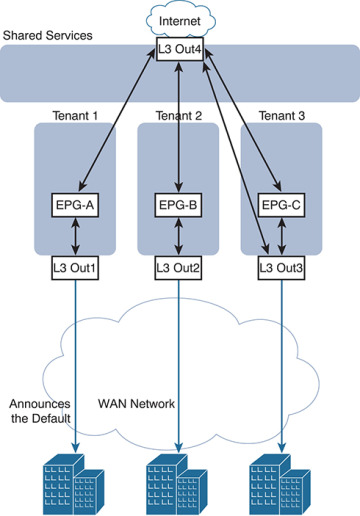

Another common requirement is shared access to the Internet, as shown in Figure 6-23. In the figure, the L3 Out connection of the Shared Services tenant (shown in the figure as “L3Out4”) is shared across Tenants 1, 2, and 3. Remote users may also need to use this L3 Out connection, as in the case of Tenant 3. In this case, remote users can access L3Out4 through Tenant 3.

Figure 6-22 Shared Services Tenant

These requirements can be implemented in several ways:

Use the VRF instance from the common tenant and the bridge domains from each specific tenant.

Use the equivalent of VRF leaking (which in Cisco ACI means configuring the subnet as shared).

Provide shared services with outside routers connected to all tenants.

Provide shared services from the Shared Services tenant by connecting it with external cables to other tenants in the fabric.

The first two options don’t require any additional hardware beyond the Cisco ACI fabric itself. The third option requires external routing devices such as additional Cisco Nexus 9000 Series switches that are not part of the Cisco ACI fabric. If you need to put shared services in a physically separate device, you are likely to use the third option. The fourth option, which is logically equivalent to the third one, uses a tenant as if it were an external router and connects it to the other tenants through loopback cables.

Figure 6-23 Shared L3 Out Connection for Internet Access

Shared Layer 3 Outside Connection

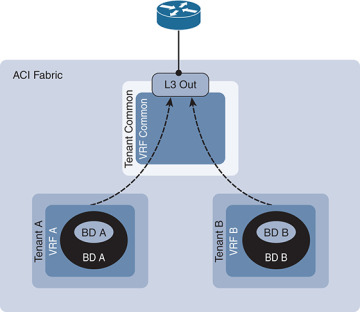

It is common for each tenant and VRF in the Cisco ACI fabric to have its own dedicated L3 Out connection. However, an administrator may want a single L3 Out connection that multiple tenants in the Cisco ACI fabric can share. In that case, the L3 Out connection is configured once in a shared tenant (such as the common tenant), and the other tenants on the system share it, as shown in Figure 6-24.

Figure 6-24 Shared L3 Out Connections

A shared L3 Out configuration is similar to the inter-tenant communication discussed in the previous section. The difference is that here, routes are leaked from the L3 Out connection to the individual tenants, and vice versa. Contracts are provided and consumed between the L3 Out connection in the shared tenant and the EPGs in the individual tenants.

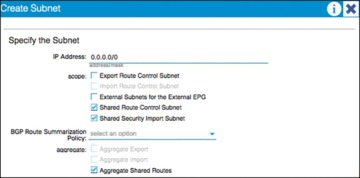

To set up a shared L3 Out connection, define the connection as usual in the shared tenant (which can be any tenant, not necessarily the common tenant), and define the external network as usual. However, mark the external network with the Shared Route Control Subnet and Shared Security Import Subnet options. With these options, routing information from this L3 Out connection can be leaked to other tenants, and the tenants sharing the connection treat subnets reachable through it as external EPGs (see Figure 6-25).

Further information about these options follows:

Shared Route Control Subnet: This option indicates that this network, if learned from the outside through this VRF, can be leaked to other VRFs (assuming that they have a contract with the external EPG).

Shared Security Import Subnet: This option defines which subnets learned from a shared VRF belong to this external EPG for contract filtering when a cross-VRF contract is established. The configuration matches the external subnet and masks out the VRF to which this external EPG and L3 Out connection belong. It requires contract filtering to be applied at the border leaf.

Figure 6-25 Shared Route Control and Shared Security Import Subnet Configuration

In the example in Figure 6-25, the Aggregate Shared Routes option is checked. This means that all routes will be marked as shared route control (in other words, all routes will be eligible for advertisement through this shared L3 Out connection).
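The leaking and classification behavior described above can be approximated in a few lines. The following Python sketch is a simplified model, not APIC code. The class and field names are hypothetical, and it assumes that a Shared Route Control Subnet matches only its exact prefix unless Aggregate Shared Routes is set, in which case more-specific routes also match. Only IPv4 is modeled.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class ExternalSubnet:
    """One subnet under the shared tenant's external EPG (hypothetical model)."""
    prefix: str
    shared_route_control: bool = False    # Shared Route Control Subnet
    shared_security_import: bool = False  # Shared Security Import Subnet
    aggregate_shared: bool = False        # Aggregate Shared Routes


@dataclass
class SharedL3Out:
    subnets: List[ExternalSubnet]
    # VRFs whose EPGs have a contract with this external EPG
    contracted_vrfs: Set[str] = field(default_factory=set)

    def leaks_route(self, route: str, vrf: str) -> bool:
        """True if a route learned on this L3 Out may be leaked into `vrf`."""
        if vrf not in self.contracted_vrfs:
            return False
        net = ipaddress.ip_network(route)
        for s in self.subnets:
            if not s.shared_route_control:
                continue
            cfg = ipaddress.ip_network(s.prefix)
            # Exact match by default; any more-specific route when aggregated
            if net == cfg or (s.aggregate_shared and net.subnet_of(cfg)):
                return True
        return False

    def classify(self, address: str) -> Optional[str]:
        """Longest-prefix match over the Shared Security Import subnets:
        the subnet that places `address` in this external EPG for contracts."""
        ip = ipaddress.ip_address(address)
        hits = [ipaddress.ip_network(s.prefix) for s in self.subnets
                if s.shared_security_import and ip in ipaddress.ip_network(s.prefix)]
        if not hits:
            return None
        return str(max(hits, key=lambda n: n.prefixlen))


# Example: 0.0.0.0/0 with both options plus Aggregate Shared Routes
internet = SharedL3Out([ExternalSubnet("0.0.0.0/0", True, True, True)],
                       contracted_vrfs={"tenant-1:vrf-1"})
print(internet.leaks_route("60.1.1.0/24", "tenant-1:vrf-1"))  # True
print(internet.leaks_route("60.1.1.0/24", "tenant-2:vrf-1"))  # False (no contract)
```

Without the aggregate flag, a configured 10.0.0.0/8 shared route control subnet would leak 10.0.0.0/8 itself but not 10.1.0.0/16, which is why the aggregate option is convenient for a catch-all external network.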

At the individual tenant level, subnets defined under bridge domains should be marked as both Advertised Externally and Shared Between VRFs, as shown in Figure 6-26.

Figure 6-26 Subnet Scope Options
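If you configure these options through the APIC REST API rather than the GUI, the payloads look roughly like the following. This Python sketch only builds and prints JSON and does not contact a controller. The object classes (l3extInstP, l3extSubnet, fvBD, fvSubnet) and the scope and aggregate values are given from memory of the APIC object model, so verify them against the APIC Management Information Model reference for your release. The object names and addresses are hypothetical.

```python
import json


def shared_external_subnet(prefix: str, aggregate: bool = False) -> dict:
    """l3extSubnet marked Shared Route Control + Shared Security Import."""
    attrs = {"ip": prefix, "scope": "shared-rtctrl,shared-security"}
    if aggregate:
        attrs["aggregate"] = "shared-rtctrl"  # Aggregate Shared Routes
    return {"l3extSubnet": {"attributes": attrs}}


def shared_bd_subnet(gateway: str) -> dict:
    """fvSubnet marked Advertised Externally (public) + Shared Between VRFs (shared)."""
    return {"fvSubnet": {"attributes": {"ip": gateway, "scope": "public,shared"}}}


# External EPG in the shared tenant (hypothetical name)
ext_epg = {"l3extInstP": {"attributes": {"name": "SharedExtEPG"},
                          "children": [shared_external_subnet("0.0.0.0/0", aggregate=True)]}}

# Bridge domain in an individual tenant (hypothetical name and gateway)
bd = {"fvBD": {"attributes": {"name": "BD-A"},
               "children": [shared_bd_subnet("10.1.1.1/24")]}}

print(json.dumps(ext_epg, indent=2))
print(json.dumps(bd, indent=2))
```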

Transit Routing

The transit routing function in the Cisco ACI fabric enables the advertisement of routing information from one L3 Out connection to another, allowing full IP connectivity between routing domains through the Cisco ACI fabric.

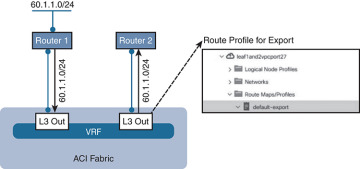

To configure transit routing through the Cisco ACI fabric, you must mark the subnets in question with the Export Route Control option when configuring external networks under the L3 Out configuration. Figure 6-27 shows an example.

Figure 6-27 Export Route Control Operation

In the example in Figure 6-27, the desired outcome is for subnet 60.1.1.0/24 (which has been received from Router 1) to be advertised through the Cisco ACI fabric to Router 2. To achieve this, the 60.1.1.0/24 subnet must be defined on the second L3 Out and marked as an export route control subnet. This will cause the subnet to be redistributed from MP-BGP to the routing protocol in use between the fabric and Router 2.

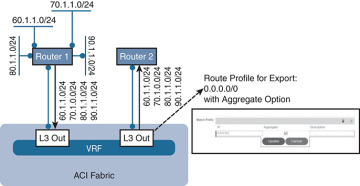

It may not be feasible or scalable to define all possible subnets individually as export route control subnets. For this reason, an aggregate option is available that marks all subnets with export route control. Figure 6-28 shows an example.

Figure 6-28 Aggregate Export Option

In the example in Figure 6-28, a number of subnets received from Router 1 should be advertised to Router 2. Rather than defining each subnet individually, the administrator can define the 0.0.0.0/0 subnet and mark it with both export route control and the Aggregate export option. This option instructs the fabric to advertise all transit routes from this L3 Out. Note that the Aggregate export option does not actually configure route aggregation or summarization; it is simply a way to specify all possible subnets as exported routes. Note also that this option is available only for the 0.0.0.0/0 subnet and not for any other subnet.
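Expressed as APIC REST payloads, the exact-match case of Figure 6-27 and the aggregate case of Figure 6-28 differ only in the attributes of the l3extSubnet object under the second L3 Out's external EPG. The Python sketch below builds both. The scope and aggregate values are assumptions to verify against the APIC object model reference, and the check that rejects Aggregate export on other prefixes simply mirrors the GUI restriction described above.

```python
import json


def export_subnet(prefix: str, aggregate_export: bool = False) -> dict:
    """l3extSubnet marked Export Route Control, optionally with Aggregate export."""
    attrs = {"ip": prefix, "scope": "export-rtctrl"}
    if aggregate_export:
        # Mirrors the GUI: Aggregate export is offered only for 0.0.0.0/0
        if prefix != "0.0.0.0/0":
            raise ValueError("Aggregate export is available only for 0.0.0.0/0")
        attrs["aggregate"] = "export-rtctrl"
    return {"l3extSubnet": {"attributes": attrs}}


exact = export_subnet("60.1.1.0/24")                           # Figure 6-27
catch_all = export_subnet("0.0.0.0/0", aggregate_export=True)  # Figure 6-28
print(json.dumps([exact, catch_all], indent=2))
```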

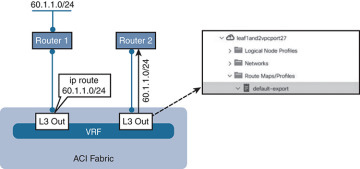

In some scenarios, you may need to export static routes between L3 Out connections, as shown in Figure 6-29.

Figure 6-29 Exporting Static Routes

In the example in Figure 6-29, a static route to 60.1.1.0 is configured on the left L3 Out. If you need to advertise the static route through the right L3 Out, the exact subnet must be configured and marked with export route control. A 0.0.0.0/0 aggregate export subnet will not match the static route.

Finally, note that route export control affects only routes that have been advertised to the Cisco ACI fabric from outside. It has no effect on subnets that exist on internal bridge domains.
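The matching rules in this section (exact subnets, the 0.0.0.0/0 aggregate, static routes, and internal bridge-domain subnets) can be summarized as a small decision function. This is a hypothetical Python model of the behavior as described here, not fabric code. The /24 mask on the static route is assumed for illustration.

```python
import ipaddress
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Route:
    prefix: str
    source: str  # "external" (learned from an L3 Out peer), "static", or "bd"


def transit_export(route: Route, exact_exports: Iterable[str],
                   aggregate_export: bool) -> Optional[bool]:
    """Does export route control advertise `route` out of this L3 Out?

    exact_exports: prefixes marked Export Route Control on the external EPG.
    aggregate_export: True if 0.0.0.0/0 is marked Export Route Control + Aggregate export.
    Returns None for bridge-domain subnets, which export route control does not govern.
    """
    if route.source == "bd":
        return None
    net = ipaddress.ip_network(route.prefix)
    if any(net == ipaddress.ip_network(p) for p in exact_exports):
        return True
    # The 0.0.0.0/0 aggregate matches routes learned from outside, not static routes
    return aggregate_export and route.source == "external"


# Figure 6-29: a static route is not covered by the 0.0.0.0/0 aggregate
print(transit_export(Route("60.1.1.0/24", "static"), [], aggregate_export=True))  # False
```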

Route maps are used on the leaf nodes to control the redistribution from BGP to the L3 Out routing protocol. For example, in the output in Example 6-1, a route map is used for controlling the redistribution between BGP and OSPF.

Example 6-1 Controlling Redistribution with a Route Map

Leaf-101# show ip ospf vrf tenant-1:vrf-1

Routing Process default with ID 6.6.6.6 VRF tenant-1:vrf-1

Stateful High Availability enabled

Supports only single TOS(TOS0) routes

Supports opaque LSA

Table-map using route-map exp-ctx-2818049-deny-external-tag

Redistributing External Routes from

static route-map exp-ctx-st-2818049

direct route-map exp-ctx-st-2818049

bgp route-map exp-ctx-proto-2818049

eigrp route-map exp-ctx-proto-2818049

Further analysis of the route map shows that prefix lists are used to specify the routes to be exported from the fabric, as demonstrated in Example 6-2.

Example 6-2 Using Prefix Lists to Specify Which Routes to Export

Leaf-101# show route-map exp-ctx-proto-2818049

route-map exp-ctx-proto-2818049, permit, sequence 7801

Match clauses:

ip address prefix-lists: IPv6-deny-all IPv4-proto16389-2818049-exc-ext-inferred-exportDST

Set clauses:

tag 4294967295

route-map exp-ctx-proto-2818049, permit, sequence 9801

Match clauses:

ip address prefix-lists: IPv6-deny-all IPv4-proto49160-2818049-agg-ext-inferred-exportDST

Set clauses:

tag 4294967295

Finally, analysis of the prefix list shows the exact routes that were marked as export route control in the L3 Out connection, as demonstrated in Example 6-3.

Example 6-3 Routes Marked as Export Route

Leaf-101# show ip prefix-list IPv4-proto16389-2818049-exc-ext-inferred-exportDST

ip prefix-list IPv4-proto16389-2818049-exc-ext-inferred-exportDST: 1 entries

seq 1 permit 70.1.1.0/24
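The chain shown in Examples 6-1 through 6-3 (a redistribution route map, ordered sequences, prefix-list matches, and a tag set on permit) can be modeled to see how a given prefix is treated. This Python sketch assumes exact-match prefix-list entries (no ge/le), models only the IPv4 lists, and assumes the aggregate prefix list is empty because its contents are not shown.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class PrefixListEntry:
    seq: int
    action: str  # "permit" or "deny"
    prefix: str  # exact match only in this sketch


@dataclass
class RouteMapSeq:
    seq: int
    action: str  # "permit" or "deny"
    prefix_list: List[PrefixListEntry]
    set_tag: Optional[int] = None


def prefix_list_permits(entries: List[PrefixListEntry], route: str) -> bool:
    net = ipaddress.ip_network(route)
    for e in sorted(entries, key=lambda e: e.seq):
        if net == ipaddress.ip_network(e.prefix):
            return e.action == "permit"
    return False  # implicit deny at the end of a prefix list


def evaluate(route_map: List[RouteMapSeq], route: str) -> Tuple[bool, Optional[int]]:
    """Return (redistributed?, tag) for `route`, checking sequences in order."""
    for s in sorted(route_map, key=lambda s: s.seq):
        if prefix_list_permits(s.prefix_list, route):
            if s.action == "permit":
                return True, s.set_tag
            return False, None
    return False, None  # implicit deny at the end of a route map


exc_list = [PrefixListEntry(1, "permit", "70.1.1.0/24")]  # from Example 6-3
agg_list: List[PrefixListEntry] = []                     # contents not shown; assumed empty
exp_ctx_proto = [RouteMapSeq(7801, "permit", exc_list, 4294967295),
                 RouteMapSeq(9801, "permit", agg_list, 4294967295)]

print(evaluate(exp_ctx_proto, "70.1.1.0/24"))  # (True, 4294967295)
print(evaluate(exp_ctx_proto, "80.1.1.0/24"))  # (False, None)
```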

Supported Combinations for Transit Routing

Some limitations exist on the transit routing combinations supported through the fabric; transit routing is not possible between every pair of available routing protocols. For example, at the time of this writing, transit routing is not supported between two connections if one is running EIGRP and the other is running BGP.

The latest matrix showing supported transit routing combinations is available at the following link:

Loop Prevention in Transit Routing Scenarios

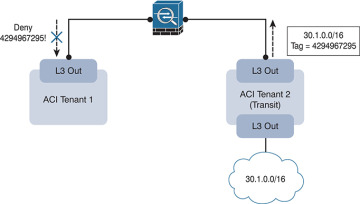

When the Cisco ACI fabric advertises routes to an external routing device using OSPF or EIGRP, all advertised routes are tagged with the number 4294967295 by default. For loop prevention, the fabric does not accept inbound routes carrying the 4294967295 tag. This can cause issues in some scenarios where tenants and VRFs are connected through external routing devices, or in some transit routing scenarios such as the example shown in Figure 6-30.

Figure 6-30 Loop Prevention with Transit Routing

In the example in Figure 6-30, an external route (30.1.0.0/16) is advertised into Cisco ACI Tenant 2, where it is a transit route. Tenant 2 advertises this route to the firewall through the second L3 Out, but with a route tag of 4294967295. When this route advertisement reaches Cisco ACI Tenant 1, it is dropped because of the tag.

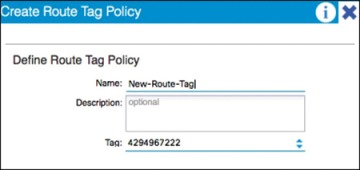

To avoid this situation, the default route tag value should be changed under the tenant VRF, as shown in Figure 6-31.

Figure 6-31 Changing Route Tags
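The failure in Figure 6-30 and the fix in Figure 6-31 can be reproduced with a toy model. It assumes, as the deny-external-tag table map in Example 6-1 suggests, that each VRF drops inbound routes carrying its own route tag, and that the firewall passes the tag through unchanged. The class names and the replacement tag value 100 are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

DEFAULT_ROUTE_TAG = 4294967295  # default tag on routes ACI advertises via OSPF/EIGRP

Advertisement = Tuple[str, int]  # (prefix, route tag)


@dataclass
class TenantVrf:
    name: str
    route_tag: int = DEFAULT_ROUTE_TAG

    def advertise(self, prefix: str) -> Advertisement:
        # Routes redistributed toward an external device carry this VRF's tag
        return prefix, self.route_tag

    def accepts(self, adv: Advertisement) -> bool:
        # Loop prevention: reject inbound routes tagged with our own tag
        _, tag = adv
        return tag != self.route_tag


tenant1 = TenantVrf("tenant-1")
tenant2 = TenantVrf("tenant-2")
print(tenant1.accepts(tenant2.advertise("30.1.0.0/16")))  # False: both use the default tag

tenant2.route_tag = 100  # change the route tag policy on Tenant 2's VRF
print(tenant1.accepts(tenant2.advertise("30.1.0.0/16")))  # True
```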

WAN Integration

In Release 2.0 of the Cisco ACI software, a new option for external Layer 3 connectivity is available, known as Layer 3 Ethernet Virtual Private Network over Fabric WAN (for more information, see the document “Cisco ACI Fabric and WAN Integration with Cisco Nexus 7000 Series Switches and Cisco ASR Routers White Paper” at Cisco.com [http://tinyurl.com/ACIFabNex]).

This option uses a single BGP session from the Cisco ACI spine switches to the external WAN device. All tenants are able to share this single connection, which dramatically reduces the number of tenant L3 Out connections required.

An additional benefit of this configuration is that the controller handles all of the fabric-facing WAN configuration per tenant. Also, when this configuration is used with multiple fabrics or multiple pods, host routes are shared with the external network to facilitate optimal routing of inbound traffic to the correct fabric and resources. The recommended approach is for all WAN integration routers to have neighbor relationships with the ACI fabric spines at each site.

Figure 6-32 shows Layer 3 EVPN over fabric WAN.

Note that Layer 3 EVPN connectivity differs from regular tenant L3 Out connectivity in that the physical connections are formed from the spine switches rather than leaf nodes. Layer 3 EVPN requires an L3 Out connection to be configured in the Infra tenant. This L3 Out connection will be configured to use BGP and to peer with the external WAN device. The BGP peer will be configured for WAN connectivity under the BGP peer profile, as shown in Figure 6-33.

Figure 6-32 Layer 3 EVPN over Fabric WAN

Figure 6-33 BGP WAN Connectivity Configuration

The L3 Out connection must also be configured with a provider label. Each individual tenant will then be configured with a local L3 Out connection configured with a consumer label, which will match the provider label configured on the Infra L3 Out connection.

Design Recommendations for Multitenant External Layer 3 Connectivity

In a small-to-medium-size environment (up to a few hundred tenants) and in environments where the external routing devices do not support EVPN connectivity, it is acceptable and recommended to use individual L3 Out connections per tenant. This is analogous to the use of VRF-lite in traditional environments, where each routed connection is trunked as a separate VLAN and subinterface on the physical links from the leaf nodes to the external device.

In a larger environment, the per-tenant L3 Out approach may not scale to the required level. For example, at press time a single Cisco ACI fabric supports a maximum of 400 L3 Out connections. In that case, the recommended approach is to use Layer 3 EVPN over fabric WAN to achieve multitenant Layer 3 connectivity from the fabric. This approach provides greater scale and is also preferred over the shared L3 Out approach described earlier in this document. Finally, if your organization will use ACI in multiple-fabric or multiple-pod topologies for disaster recovery or active/active scenarios where path optimization is a concern, consider Layer 3 EVPN over fabric WAN.
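These recommendations reduce to a short decision rule. The Python function below is an illustrative sketch only. It assumes one L3 Out per tenant, uses the 400-connection figure quoted at press time (check the current verified scalability guide for your release), and, as its own assumption, falls back to the shared L3 Out approach when per-tenant L3 Outs would exceed the limit and the WAN devices cannot run EVPN.

```python
L3OUT_LIMIT = 400  # per-fabric L3 Out limit quoted at press time


def recommend_external_l3(tenants: int, wan_supports_evpn: bool,
                          multi_fabric_or_pod: bool) -> str:
    """Pick a multitenant external Layer 3 design (one L3 Out per tenant assumed)."""
    exceeds_limit = tenants > L3OUT_LIMIT
    if wan_supports_evpn and (exceeds_limit or multi_fabric_or_pod):
        return "Layer 3 EVPN over fabric WAN"
    if exceeds_limit:
        # Assumption of this sketch: without EVPN, share L3 Outs instead
        return "shared L3 Out"
    return "per-tenant L3 Out"


print(recommend_external_l3(1200, wan_supports_evpn=True, multi_fabric_or_pod=False))
```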

Quality of Service

The ACI fabric transports a multitude of applications and data. The applications your data center supports will no doubt be assigned different levels of service based on their criticality to the business. Data center fabrics must provide secure, predictable, measurable, and sometimes guaranteed services. Achieving the required Quality of Service (QoS) by effectively managing the priority of your organization’s applications on the fabric is important for deploying a successful end-to-end business solution. QoS is the set of techniques used to manage data center fabric resources toward that goal.

As with traditional QoS, QoS within ACI deals with classes and with the markings that place traffic into those classes. Each QoS class represents a Class of Service and is equivalent to a “qos-group” in traditional NX-OS. Each Class of Service maps to a queue or set of queues in hardware and can be configured with various options, including a scheduling policy (Weighted Round Robin [WRR] or Strict Priority, with WRR as the default), a minimum buffer (guaranteed buffer), and a maximum buffer (static or dynamic, with dynamic as the default).

These classes are configured at the system level and are therefore called system classes. Six classes are supported at the system level: three user-defined classes and three reserved classes that are not configurable by the user.

User-Defined Classes

As mentioned above, there is a maximum of three user-defined classes within ACI. The three classes are:

Level1

Level2

Level3 (always enabled and equivalent to the best-effort class in traditional NX-OS)

All of the QoS classes are configured for all ports in the fabric, including both the host-facing ports and the fabric or uplink ports. Unlike some other Cisco technologies, ACI has no per-port configuration of QoS classes. Only one of these user-defined classes can be set as a strict priority class at any time.
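The system-class rules so far (three user-defined classes, WRR and dynamic buffers by default, Level3 always enabled, at most one user-defined strict priority class) can be captured as a validation check. The data model below is hypothetical Python written for illustration, not the APIC object model.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class QosClass:
    name: str
    scheduling: str = "wrr"      # "wrr" (default) or "strict-priority"
    max_buffer: str = "dynamic"  # "dynamic" (default) or "static"
    enabled: bool = True


USER_CLASSES = {"level1", "level2", "level3"}


def validate_user_classes(classes: Dict[str, QosClass]) -> None:
    """Raise ValueError if the user-defined class settings break the rules above."""
    if set(classes) != USER_CLASSES:
        raise ValueError("expected exactly the user-defined classes level1-level3")
    if not classes["level3"].enabled:
        raise ValueError("level3 (best effort) is always enabled")
    strict = sorted(n for n, c in classes.items() if c.scheduling == "strict-priority")
    if len(strict) > 1:
        raise ValueError(f"only one strict priority class allowed, got {strict}")


ok = {n: QosClass(n) for n in USER_CLASSES}
ok["level1"].scheduling = "strict-priority"
validate_user_classes(ok)  # passes: a single strict priority class
```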

Reserved Classes

As mentioned above, there are three reserved classes that are not configurable by the user:

Insieme Fabric Controller (IFC) Class: All APIC-originated or APIC-destined traffic is classified into this class. It is a strict priority class. When Flowlet prioritization mode is enabled, prioritized packets use this class.

Control Class (Supervisor Class): This is a strict priority class. All supervisor-generated traffic is classified into this class, and all control traffic, such as protocol packets, uses it.

SPAN Class: All SPAN and ERSPAN traffic is classified into this class. It is a best-effort class that uses Deficit Weighted Round Robin (DWRR) with the lowest possible weight. This class can be starved.

Classification and Marking

When QoS is used in ACI, packets are classified using a Layer 2 Dot1p policy, a Layer 3 Differentiated Services Code Point (DSCP) policy, or contracts. The DSCP and Dot1p policies are configured and applied at the EPG level using a “Custom QoS Policy”. In the Custom QoS Policy, each rule matches a range of DSCP or Dot1p values and maps them to a DSCP target. If both a Dot1p policy and a DSCP policy are configured within an EPG, the DSCP policy takes precedence. More generally, QoS policies take precedence over one another in the following order, from highest priority to lowest:

Zone rule

EPG-based DSCP policy

EPG-based Dot1p Policy

EPG-based default qos-grp

A second example of this hierarchy: if a packet matches both a zone rule with a QoS action and an EPG-based policy, the zone rule action takes precedence. If no QoS policy is configured for an EPG, all traffic falls into the default QoS group (qos-grp).
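The precedence list can be expressed as a lookup that stops at the first source that yields a class. In this hypothetical Python sketch, each Custom QoS Policy rule is a (low, high, class) range and the target DSCP rewrite is left out. The assumption that an EPG with no class of its own lands in level3 is labeled in the code.

```python
from typing import List, Optional, Tuple

Rule = Tuple[int, int, str]  # (low, high, qos_class) from a Custom QoS Policy


def match_range(rules: List[Rule], value: Optional[int]) -> Optional[str]:
    if value is None:
        return None
    for low, high, qos_class in rules:
        if low <= value <= high:
            return qos_class
    return None


def resolve_qos_class(zone_rule_class: Optional[str],
                      dscp: Optional[int], dot1p: Optional[int],
                      dscp_rules: List[Rule], dot1p_rules: List[Rule],
                      epg_class: str = "level3") -> str:  # assumed default class
    """Apply the precedence: zone rule > EPG DSCP policy > EPG Dot1p policy > EPG default."""
    if zone_rule_class is not None:             # 1. contract (zone rule) QoS action
        return zone_rule_class
    by_dscp = match_range(dscp_rules, dscp)     # 2. EPG-based DSCP policy
    if by_dscp is not None:
        return by_dscp
    by_dot1p = match_range(dot1p_rules, dot1p)  # 3. EPG-based Dot1p policy
    if by_dot1p is not None:
        return by_dot1p
    return epg_class                            # 4. EPG-based default qos-grp


dscp_rules = [(46, 46, "level1")]  # hypothetical: EF -> level1
dot1p_rules = [(3, 5, "level2")]   # hypothetical: CoS 3-5 -> level2
print(resolve_qos_class(None, 46, 5, dscp_rules, dot1p_rules))      # level1 (DSCP beats Dot1p)
print(resolve_qos_class("level3", 46, 5, dscp_rules, dot1p_rules))  # level3 (zone rule wins)
```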

QoS Configuration in ACI

Once you understand classification and marking, configuration of QoS in ACI is straightforward. Within the APIC GUI, there are three main steps to configure your EPG to use QoS:

Configure Global QoS Class parameters: The configuration performed here allows administrators to set the properties of each individual class.

Configure a Custom QoS Policy within the EPG (if necessary): This configuration lets administrators specify what traffic to look for and what to do with that traffic.

Assign a QoS class and/or a Custom QoS Policy (if applicable) to your EPG.
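Through the REST API, the Custom QoS Policy and EPG assignment steps correspond roughly to a custom QoS policy object and an EPG that references it. The Python sketch below only builds the JSON. The class names (qosCustomPol, qosDscpClass, fvAEPg, fvRsCustQosPol) and attribute values such as prio and the DSCP names are recalled from the APIC object model and should be checked against the Management Information Model reference for your release. The policy and EPG names are hypothetical.

```python
import json


def dscp_rule(low: str, high: str, qos_class: str, target: str) -> dict:
    """One Custom QoS Policy rule: a DSCP range mapped to a class and a target DSCP."""
    return {"qosDscpClass": {"attributes": {
        "from": low, "to": high, "prio": qos_class, "target": target}}}


custom_pol = {"qosCustomPol": {
    "attributes": {"name": "VoicePol"},
    "children": [dscp_rule("EF", "EF", "level1", "EF")]}}

epg = {"fvAEPg": {
    "attributes": {"name": "EPG-A", "prio": "level2"},  # EPG's default QoS class
    "children": [{"fvRsCustQosPol": {
        "attributes": {"tnQosCustomPolName": "VoicePol"}}}]}}

print(json.dumps(custom_pol, indent=2))
print(json.dumps(epg, indent=2))
```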

Contracts can also be used to classify and mark traffic between EPGs. For instance, your organization may have the requirement to have traffic marked specifically with DSCP values within the ACI fabric so these markings are seen at egress of the ACI fabric, allowing appropriate treatment on data center edge devices. The high-level steps to configure QoS marking using contracts are as follows:

ACI Global QoS policies should be enabled.

Create filters: Any TCP/UDP port can be used in a filter for later classification in the contract subject. The filters you define allow traffic to be marked and classified separately by traffic type. For example, to give SSH traffic higher priority than other traffic, two filters are needed: one matching SSH and one matching all IP traffic.

Define a Contract to be provided and consumed.

Add subjects to the contract.

Specify the directionality (bidirectional or one-way) of the subject and filters.

Add filters to the subject.

Assign a QoS class.

Assign a target DSCP.

Repeat as necessary for additional subjects and filters.

Traffic matching on the filter will now be marked with the specified DSCP value per subject.
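The SSH example from the filter step can be modeled to show which class and DSCP value each flow ends up with. This is a hypothetical Python sketch: it checks subjects in list order with the more specific SSH subject first and does not model how the fabric itself prioritizes overlapping rules. The class and DSCP choices (level1/AF21 for SSH, level3/CS0 for everything else) are illustrative.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Filter:
    name: str
    protocol: Optional[str] = None  # "tcp", "udp", or None for any IP traffic
    dst_port: Optional[int] = None  # None matches any port

    def matches(self, protocol: str, dst_port: int) -> bool:
        if self.protocol is not None and self.protocol != protocol:
            return False
        return self.dst_port is None or self.dst_port == dst_port


@dataclass
class Subject:
    name: str
    filters: List[Filter]
    qos_class: str
    target_dscp: str


def mark(subjects: List[Subject], protocol: str, dst_port: int) -> Optional[Tuple[str, str]]:
    """Return (QoS class, target DSCP) from the first subject with a matching filter."""
    for subject in subjects:
        if any(f.matches(protocol, dst_port) for f in subject.filters):
            return subject.qos_class, subject.target_dscp
    return None  # no subject matched


contract = [Subject("ssh", [Filter("ssh", "tcp", 22)], "level1", "AF21"),
            Subject("all-ip", [Filter("ip")], "level3", "CS0")]
print(mark(contract, "tcp", 22))   # ('level1', 'AF21')
print(mark(contract, "tcp", 443))  # ('level3', 'CS0')
```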