Multicast in Multitenant Data Centers

Chapter 3 discusses the ability of networks using MPLS VPNs to provide multicast over the VPN overlay. These VPNs allow service providers to provide private IP service to customers over a single Layer 3 network. Chapter 4, “Multicast in Data Center Environments,” also examines the ability of Cisco data center networks to provide multicast services to and from data center resources. A multitenant data center combines these two concepts, creating multiple customer overlays on a single topology. This allows service providers that offer infrastructure as a service (IaaS) to reduce the cost of implementation and operation of the underlying data center network.

In a multitenant data center, each customer overlay network is referred to as a tenant. The tenant concept is flexible, meaning that a single customer may have multiple tenant overlays. If you are familiar with Cisco Application Centric Infrastructure (ACI), discussed in Chapter 4, you will recognize this concept. With a properly designed network, IaaS providers can offer multicast as part of the service of each overlay network in a multitenant data center.

To understand multitenant multicast deployments, this section first briefly reviews how tenant overlays are implemented. The primary design model in this discussion uses segmentation and policy elements to segregate customers. An example of this type of design is defined in Cisco’s Virtualized Multi-Tenant Data Center (VMDC) architecture. VMDC outlines how to construct and segment each tenant virtual network. Cisco’s ACI is another example. A quick review of the design elements of VMDC and ACI helps lay the foundation for understanding multitenant multicast.

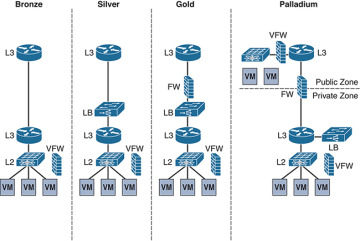

VMDC uses standard Cisco virtual port channel (vPC) or Virtual Extensible LAN (VXLAN) networking for building the underlay infrastructure. It then breaks a tenant overlay into reproducible building blocks that can be adjusted or enhanced to fit the service-level requirements of each customer. These blocks consist of segmentation and policy elements that can be virtualized within the underlay. For example, a Silver-level service, as defined by VMDC, incorporates virtual firewall (VFW) and load balancing (LB) services and segmentation through the use of VRF instances, VLANs, firewall contexts, and Layer 3 routing policies. Figure 5-13 shows the service levels possible in a VMDC network, with the virtualized segmentation and policy elements used in each service level.

Figure 5-13 VMDC Tenant Service Levels

Most data center designs, even private single-tenant data centers, further segment compute and application resources that reside on physical and virtual servers into zones. The concept of zones is also depicted in Figure 5-13, on the Palladium service level, which shows a separation between public and private zones. Zones may also be front-end zones (that is, application or user facing) or back-end zones (that is, intersystem and usually non-accessible to outside networks). Firewalls (physical and virtual) are critical to segmenting between zones, especially where public and private zones meet. Finally, public zone segmentation can be extended beyond the data center and into the public network by using VRF instances and MPLS VPNs.

A multitenant data center’s main function is to isolate, segment, and secure individual applications, services, and customers from each other to the extent required. This, in turn, drives the fundamental design elements and requirements when enabling multicast within each customer overlay. All of Cisco’s multitenant-capable systems can offer multicast. When deploying multicast, a data center architect needs to consider the function and policies of each zone or endpoint group (EPG) and design segmented multicast domains accordingly.

To put this more simply, the multicast domain, including the placement of the RP, must match the capabilities of each zone. If a zone is designed to offer a public shared service, then a public, shared multicast domain with a shared RP is required to accommodate that service. If the zone is private and restricted to back-end services, the domain must be private and restricted by configuration to the switches and routers providing L2 and L3 services to that zone. Such a zone may not require an RP but may need IGMP snooping support on all L2 switches (physical or virtual) deployed in that zone. Finally, if the zone is public, or if it is a front-end zone that communicates with both private and public infrastructure, the design must include proper RP placement and multicast support on all firewalls.
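The zone-to-domain rules above can be sketched as a small lookup. This is only an illustration of the reasoning, not a VMDC or ACI API: the `Zone` and `MulticastRequirements` types, their field names, and the string labels are hypothetical, and marking IGMP snooping as useful in every zone is an assumption (the text calls it out only for private zones).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    """Hypothetical description of a tenant zone."""
    name: str
    public: bool          # reachable from outside the tenant's private network
    front_end: bool       # application or user facing
    shared: bool = False  # offers a shared service to other tenants


@dataclass(frozen=True)
class MulticastRequirements:
    """Hypothetical summary of what the multicast domain for a zone needs."""
    domain: str               # "shared", "public", or "private"
    rp: str                   # which RP, if any, serves the domain
    igmp_snooping: bool       # assumption: useful on L2 switches in every zone
    firewall_multicast: bool  # firewalls must forward and police multicast


def multicast_requirements(zone: Zone) -> MulticastRequirements:
    """Map a zone to the multicast domain properties described in the text."""
    if zone.shared:
        # Public shared service: shared domain with a shared RP.
        return MulticastRequirements("shared", "shared RP", True, True)
    if zone.public or zone.front_end:
        # Public or front-end zone: RP placement and firewall support matter.
        return MulticastRequirements("public", "tenant RP", True, True)
    # Private back-end zone: may not need an RP at all.
    return MulticastRequirements("private", "optional", True, False)


if __name__ == "__main__":
    for z in (
        Zone("back-end", public=False, front_end=False),
        Zone("front-end", public=True, front_end=True),
        Zone("shared-services", public=True, front_end=True, shared=True),
    ):
        print(z.name, multicast_requirements(z))
```

A real design would carry far more detail per zone (VRF, VLANs, firewall context), but the decision order is the point here: shared service first, then public or front-end exposure, then the private default.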

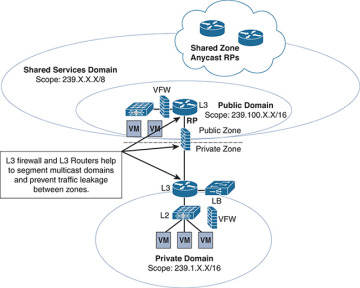

In per-tenant overlays, multiple zones and multicast domains are likely to be required. Hybrid-domain and multidomain multicast designs are appropriate for these networks. Consider the network design in Figure 5-14, which is based on an implementation of multiple multicast domains within a Palladium service level container on a VMDC architecture.

Figure 5-14 Palladium Service with Hybrid Multicast Domain Design

The tenant of Figure 5-14 wishes to use a private multicast domain within the private zone of the network for back-end services. The L2 switch is enabled with IGMP snooping, and the domain is scoped to groups in the 239.1.0.0/16 range. This is a private range and can overlap with any range in the public or shared-public zones, as the domain ends at the inside L3 router.

In addition, the public zone with Layer 3 services offers multicast content on the front end to internal entities outside the DC. A public domain, scoped to 239.100.0.0/16, will suffice. The top L3 router supplies RP services, configured inside a corresponding tenant VRF.

Finally, a shared services domain is offered to other data center tenants. The shared public zone has a global multicast scope of 239.0.0.0/8. The tenant uses the L3 FW and L3 routers to carve out any services needed in the shared zone and segments them from the public front-end zone. The overlapping scope of the shared services zone needs to be managed through the RP mapping configuration on the public domain RP: that router is mapped as the RP for any 239.100.0.0/16 groups, and the shared service Anycast RPs are automatically mapped to all other groups. Additional segmentation and firewall rule sets are applied on the public L3 router and VFW to further secure each multicast domain, based on the rules of the zones in which they are configured.
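The overlapping scopes in Figure 5-14 can be reasoned about with a longest-prefix match, which is how PIM-SM group-to-RP mappings are generally resolved when ranges overlap. The following is a minimal sketch using Python's standard `ipaddress` module. The RP names are hypothetical placeholders, and actual platform behavior (for example, precedence between static and dynamically learned mappings) may differ.

```python
import ipaddress

# Group-to-RP mappings as seen by the public L3 router.
# The RP names are hypothetical labels, not real device names.
RP_MAPPINGS = {
    ipaddress.ip_network("239.100.0.0/16"): "tenant-public-rp",
    ipaddress.ip_network("239.0.0.0/8"): "shared-anycast-rp",
}


def select_rp(group, mappings=RP_MAPPINGS):
    """Return the RP whose group range is the longest match for `group`."""
    addr = ipaddress.ip_address(group)
    matches = [net for net in mappings if addr in net]
    if not matches:
        return None  # no RP mapping covers this group
    best = max(matches, key=lambda net: net.prefixlen)
    return mappings[best]


# The private scope is numerically inside the shared scope, which is
# why it must terminate at the inside L3 router.
private_scope = ipaddress.ip_network("239.1.0.0/16")
shared_scope = ipaddress.ip_network("239.0.0.0/8")
overlaps = private_scope.subnet_of(shared_scope)

if __name__ == "__main__":
    print(select_rp("239.100.1.1"))  # falls in the tenant public range
    print(select_rp("239.1.1.1"))    # only the shared /8 range matches
    print(overlaps)
```

From the public router's point of view, a group such as 239.1.1.1 belongs to the shared services domain. The private domain can reuse that range only because its traffic never crosses the inside L3 router.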

The best practice for achieving this level of domain scoping is to use PIM-SM throughout the multitenant DC. There may be issues running PIM-SSM if there are overlapping domains in the model. When scoping domains for multitenancy, select one of the following four models:

Use a single RP for all domains with a global scope for each data center

Use local scoping per multitenant zone with a single RP for each domain (may not require firewall multicast transport)

Use a global scope for each DC with one RP and local scoping within multitenant domains and one RP for all domains and zones (requires firewall transport)

A hybrid between per-DC and per-tenant designs, using firewalls and L3 routers to segment domains

Remember that the resources shown in Figure 5-14 could be virtual or physical. Any multitenant design that offers per-tenant multicast services may need to support multiple domain deployment models, depending on the customer service level offerings. For example, the architect needs to deploy hardware and software routers that provide RPs and multicast group mappings on a per-tenant basis. The virtual and physical firewalls must not only be capable of forwarding IP Multicast traffic but also offer policy features, such as access control lists (ACLs), that support multicast. Newer software-defined networking (SDN) technologies, such as Cisco’s ACI, help ease the burden of segmentation in per-tenant multicast designs.

ACI Multitenant Multicast

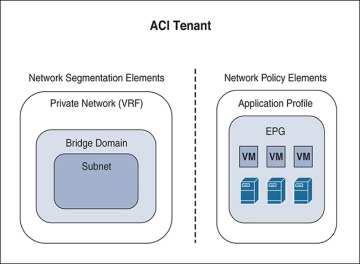

Cisco ACI enhances the segmentation capabilities of multitenancy. ACI, as you learned in Chapter 4, is an SDN data center technology that allows administrators to deconstruct the segmentation and policy elements of a typical multitenant implementation and deploy them as an overlay to an automated Layer 2/Layer 3 underlay based on VXLAN. All principal policy elements in ACI, including VRF instances, bridge domains (BDs), endpoint groups (EPGs), and subnets, are containerized within a hierarchical policy container called a tenant. Consequently, multitenancy is an essential foundation for ACI networks. Figure 5-15 shows the relationship between these overlay policy elements.

Figure 5-15 ACI Overlay Policy Elements

An EPG is conceptually very similar to a zone in the VMDC architecture. Some EPGs do not allow any inter-EPG communication. Others may be private to the tenant but allow inter-EPG routing. Still others may be public facing through the use of a Layer 3 Out policy. Segmentation between zones is provided natively by the whitelist firewall nature of port assignments within an EPG. Without specific policy—namely contracts—inter-EPG communication is denied by default. EPGs can then align to private and public zones and can be designated as front-end or back-end zones by the policies and contracts applied to the application profile in which the EPG resides.
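The default-deny behavior between EPGs can be illustrated with a toy whitelist check. This is a conceptual sketch only, not the ACI object model or API: the EPG names, the `CONTRACTS` table, and the traffic labels are hypothetical. Treating contracts as one-directional and permitting all intra-EPG traffic are simplifying assumptions.

```python
# Hypothetical contract table: (consumer EPG, provider EPG) -> permitted traffic.
CONTRACTS = {
    ("web", "app"): {"tcp/8443"},
    ("app", "db"): {"tcp/5432"},
}


def is_permitted(src_epg, dst_epg, traffic, contracts=CONTRACTS):
    """Whitelist check: inter-EPG traffic passes only if a contract allows it."""
    if src_epg == dst_epg:
        # Assumption: endpoints in the same EPG may talk to each other.
        return True
    allowed = contracts.get((src_epg, dst_epg), set())
    return traffic in allowed


if __name__ == "__main__":
    print(is_permitted("web", "app", "tcp/8443"))  # contract exists
    print(is_permitted("web", "db", "tcp/5432"))   # no web-to-db contract
```

An EPG with no contracts at all behaves like an isolated back-end zone, whereas an EPG whose contracts reach a Layer 3 Out behaves like a public zone.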

When using non-routed L3 multicast within a zone, the BD that is related to the EPG natively floods multicast traffic across the BD, much as a switched VLAN behaves. Remember from Chapter 4 that IGMP snooping is enabled by default in the default VRF, and all BDs flood the multicast packets on the appropriate ports with members. Link-local multicast, group range 224.0.0.X, is flooded to all ports in the BD by using the BD GIPo (Group IP outer) address, along one of the FTAG (forwarding tag) trees.
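The forwarding decision described above can be sketched as follows. This is a simplified model rather than fabric behavior reproduced exactly: the function name, the port labels, and the snooping table layout are hypothetical, and the sketch assumes that groups with no known members are sent to no ports, whereas a real BD may flood or use optimized flooding for unknown multicast depending on configuration.

```python
import ipaddress

# 224.0.0.X: the link-local multicast range.
LINK_LOCAL_MCAST = ipaddress.ip_network("224.0.0.0/24")


def egress_ports(group, bd_ports, snooping_members):
    """Pick the BD ports that should receive a packet sent to `group`.

    bd_ports         -- all ports in the bridge domain
    snooping_members -- hypothetical IGMP snooping table: group -> member ports
    """
    addr = ipaddress.ip_address(group)
    if not addr.is_multicast:
        raise ValueError(f"{group} is not a multicast address")
    if addr in LINK_LOCAL_MCAST:
        # Link-local groups are flooded to every port in the BD.
        return set(bd_ports)
    # Otherwise forward only to ports with known members (assumption:
    # groups with no members are not flooded).
    return set(snooping_members.get(str(addr), ())) & set(bd_ports)


if __name__ == "__main__":
    ports = {"eth1/1", "eth1/2", "eth1/3"}
    table = {"239.1.1.1": {"eth1/2"}}
    print(sorted(egress_ports("224.0.0.5", ports, table)))
    print(sorted(egress_ports("239.1.1.1", ports, table)))
```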

ACI segmentation and policy elements can be configured to support multicast routing, as covered in Chapter 4. For east–west traffic, border leafs act as either the FHR or LHR when PIM is configured on the L3 Out or VRF instance. Border leafs and spines cannot currently act as RPs. Thus, the real question to answer for ACI multitenancy is the same as for VMDC architectures: What is the scope of the domain? The answer to this question drives the placement of the RP.

Because the segmentation in ACI is cleaner than with standard segmentation techniques, architects can use a single RP model for an entire tenant. Any domains are secured by switching VRF instances on or off from multicast or by applying specific multicast contracts at the EPG level. Firewall transport of L3 multicast may not be a concern in these environments. A data center–wide RP domain for shared services can also be used with ACI, as long as the RP is outside the fabric as well. Segmenting between a shared public zone and a tenant public or private zone using different domains and RPs is achieved with VRF instances on the outside L3 router that correspond to internal ACI VRF instances. EPG contracts further secure each domain and prevent message leakage, especially if EPG contracts encompass integrated L3 firewall policies.