Multicast and Software-Defined Networking

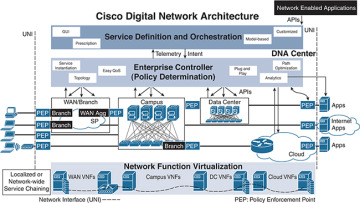

Cisco Digital Network Architecture (DNA) includes three key fabrics from an infrastructure standpoint that need to be understood to comprehend the functioning of multicast. As shown in Figure 5-16, the fabrics defined are SD-Access for campus, ACI for software-defined data center (SD-DC), and software-defined WAN (SD-WAN). The principles of Cisco DNA provide a transformational shift in building and managing campus network, data center, and WAN fabrics in a faster, easier way and with improved business efficiency.

Figure 5-16 Cisco DNA

The salient features to achieve this transition are as follows:

End-to-end segmentation

Simple, automated workflow design

Intelligent network fabric

This chapter has already reviewed SD-DC with ACI and the implication of multicast in DC multitenancy. Chapter 4 explores VXLAN and its design implications with multicast. SD-WAN uses the principles of Layer 3 VPNs for segmentation.

Hub replication for the overlay must be considered when selecting SD-WAN hub locations, and the options depend on whether the solution is based on Dynamic Multipoint VPN (DMVPN) or Cisco Viptela. A Cisco Viptela SD-WAN-based solution lets the designer select the headend multicast replication node in either of the following ways:

The replication headend for each individual multicast group

The replication headend aligned to each VPN (assuming that the deployment is segmented)

Splitting the multicast VPN replication reduces the load on the headend routers and helps localize traffic based on regional transit points in a global enterprise network.
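The two selection options above can be pictured as a simple lookup. The following is a minimal sketch, not Viptela configuration or an API: the hub names, group addresses, VPN IDs, and the `select_replicator` function are all hypothetical, and the rule that a per-group entry overrides the per-VPN default is an assumption made for illustration.

```python
from typing import Optional

# Hypothetical data: hub names, groups, and VPN IDs are illustrative only.
# Option 1: a replication headend pinned to an individual multicast group.
GROUP_REPLICATORS = {"239.1.1.1": "hub-emea", "239.1.1.2": "hub-apac"}

# Option 2: a replication headend aligned to each VPN (segment).
VPN_REPLICATORS = {10: "hub-emea", 20: "hub-amer"}


def select_replicator(vpn_id: int, group: str) -> Optional[str]:
    """Return the replication headend for a (VPN, group) pair.

    Assumption: a per-group entry takes precedence over the per-VPN
    default. None means no replicator has been assigned.
    """
    return GROUP_REPLICATORS.get(group, VPN_REPLICATORS.get(vpn_id))
```

Spreading groups and VPNs across regional hubs in tables like these is what keeps any single headend from carrying all replication load.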

Within SD-WAN technology are two Cisco solutions: Intelligent WAN (IWAN) and Viptela. There are plans to converge these two SD-WAN solutions with unified hardware and feature support.

In terms of multicast, the following are the key considerations to keep in mind for SD-DC and SD-WAN:

PIM mode needs to be supported in the fabric.

Replication and convergence are required within the fabric.

For simplicity, it is best to keep the multicast RP outside the fabric. (In some fabrics, this placement is the recommended design because of the feature support available.)

As of this writing, SD-Access is the fabric most recently added to the Cisco DNA portfolio. This fabric covers the enterprise campus to provide the following functions:

Helps ensure policy consistency

Provides faster service enablement

Significantly improves issue resolution times and reduces operational expenses

SD-Access (SDA) includes the following elements:

Identity-based segmentation on the network allows various security domains to coexist on the same network infrastructure while keeping their policies for segmentation intact.

Security is provided by Cisco TrustSec infrastructure (Security Group Tags [SGTs], Security Group Access Control Lists [SGACLs]) and Cisco segmentation capabilities (Cisco Locator/ID Separation Protocol [LISP], Virtual Extensible LAN [VXLAN], and Virtual Routing and Forwarding [VRF]). Cisco DNA Center aligns all these functions to solutions and abstracts them from users.

Identity context for users and devices, including authentication, posture validation, and device profiling, is provided by the Cisco Identity Services Engine (ISE).

Assurance visibility gives operations teams proactive and predictive data that helps maintain the health of the fabric.

The Network Data Platform (NDP) efficiently categorizes and correlates vast amounts of data into business intelligence and actionable insights.

Complete network visibility is provided through simple management of a LAN, WLAN, and WAN as a single entity for simplified provisioning, consistent policy, and management across wired and wireless networks.

Cisco DNA Center software provides single-pane-of-glass network control.

The technical details of data forwarding in the fabric are based on control and data plane elements. It is important to understand that the control plane for the SDA fabric is based on LISP, and LISP forwarding is based on an explicit request. The data plane is established for source and destination communication and uses VXLAN encapsulation. The policy engine for SDA is based on Cisco TrustSec. (The details of the operation of SDA are beyond the scope of this chapter.)

Chapter 4 explains VXLAN and its interaction with multicast. This chapter reviews details about multicast with LISP.

Traditional routing and host addressing models use a single namespace for a host IP address, and this address is propagated to every edge-layer node to build the control plane. A visible and detrimental effect of this single namespace is the rapid growth of the Internet routing table as a consequence of multihoming, traffic engineering, non-aggregable address allocation, and business events such as mergers and acquisitions. More and more memory is required to hold the routing table, and the need to solve end-host mobility with address and security attributes has led to investment in alternatives to the traditional control plane model. LISP identifies the end host using two separate attributes: a host identity address and a location address. The control plane is built on an explicit pull model, similar to that of the Domain Name System (DNS). The use of LISP provides the following advantages over traditional routing protocols:

Multi-address family support: LISP supports IPv4 and IPv6. LISP is added into an IPv6 transition or coexistence strategy to simplify the initial rollout of IPv6 by taking advantage of the LISP mechanisms to encapsulate IPv6 host packets within IPv4 headers (or IPv4 host packets within IPv6 headers).

Virtualization support: Virtualization/multi-tenancy support provides the capability to segment traffic that is mapped to VRF instances while exiting the LISP domain.

Host mobility: VM mobility support provides location flexibility for IP endpoints in the enterprise domain and across the WAN. The location of an asset is masked by the location identification or routing locator (RLOC). When the packet reaches the location, the endpoint identifier (EID) is used to forward the packet to the end host.

Site-based policy control without using BGP: Simplified traffic engineering capabilities provide control and management of traffic entering and exiting a specific location.

LISP addresses, known as EIDs, provide identity to the end host, and RLOCs provide the location where the host resides. Splitting EID and RLOC functions yields several advantages, including improved routing system scalability and improved multihoming efficiency and ingress traffic engineering.

The following is a list of LISP router functions for LISP egress tunnel routers (ETRs) and ingress tunnel routers (ITRs):

Devices are generally referred to as xTR when ETR and ITR functions are combined on a single device.

An ETR publishes the EID-to-RLOC mappings for its site to the map server and responds to map-request messages.

An ETR de-encapsulates LISP-encapsulated packets and delivers them to a local end host within its site.

During operation, an ETR sends periodic map-register messages to all its configured map servers. These messages contain all the EID-to-RLOC entries for the EID-numbered networks that are connected to the ETR’s site.

An ITR is responsible for finding EID-to-RLOC mappings for all traffic destined for LISP-capable sites. When the ITR receives a packet from its site that is destined for an EID (an IPv4 address in this case), it looks for the EID-to-RLOC mapping in its cache. If the ITR finds a match, it encapsulates the packet inside a LISP header, with one of its own RLOCs as the IP source address and one of the RLOCs from the map cache entry as the IP destination. If the ITR does not find a match, it sends a LISP map-request to the map resolver and map server.
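The ITR decision described above (cache hit: encapsulate; cache miss: request a mapping) can be sketched as follows. This is an illustrative model only, not a LISP implementation: the `Packet` and `Itr` classes and their fields are hypothetical names, the header is reduced to an outer source and destination address, and holding the packet back on a miss is an assumption, because real platforms differ in how they treat traffic while a mapping is being resolved.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Packet:
    src: str                          # source EID
    dst: str                          # destination EID
    outer_src: Optional[str] = None   # set only when LISP-encapsulated
    outer_dst: Optional[str] = None


@dataclass
class Itr:
    local_rloc: str
    # EID prefix -> RLOCs learned from earlier map-replies
    map_cache: Dict[ipaddress.IPv4Network, List[str]] = field(default_factory=dict)
    # EIDs for which a map-request still has to be sent
    pending_requests: List[str] = field(default_factory=list)

    def lookup(self, eid: str) -> Optional[List[str]]:
        """Longest-prefix match of the EID against the map cache."""
        addr = ipaddress.ip_address(eid)
        matches = [prefix for prefix in self.map_cache if addr in prefix]
        if not matches:
            return None
        return self.map_cache[max(matches, key=lambda p: p.prefixlen)]

    def forward(self, pkt: Packet) -> Optional[Packet]:
        """Encapsulate on a cache hit; queue a map-request on a miss."""
        rlocs = self.lookup(pkt.dst)
        if rlocs is None:
            self.pending_requests.append(pkt.dst)
            return None
        pkt.outer_src = self.local_rloc   # one of the ITR's own RLOCs
        pkt.outer_dst = rlocs[0]          # one RLOC from the cache entry
        return pkt
```

For example, an `Itr("10.1.0.2")` with an empty cache returns `None` for a packet to 10.2.3.1 and records the EID in `pending_requests`; after a mapping for 10.2.3.0/24 is added to `map_cache`, the same packet comes back with its outer addresses filled in.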

LISP Map Resolver (MR)/Map Server (MS)

Both map resolvers (MRs) and map servers (MSs) connect to the LISP topology. The function of the LISP MR is to accept encapsulated map-request messages from ITRs and then de-encapsulate those messages. In many cases, the MR and MS are on the same device. Once the message is de-encapsulated, the MS is responsible for identifying the ETR RLOC that is authoritative for the requested EIDs.

An MS maintains the aggregated distributed LISP mapping database; it accepts the registrations from the ETRs, each of which supplies the RLOCs of its site along with the EID prefixes connected to that site. The MS/MR functionality is similar to that of a DNS server.
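The register-then-resolve behavior of the MS/MR can be sketched as a small in-memory database. This is a simplified model under stated assumptions: the `MapServer` class and its method names are hypothetical, the shared key is compared as plain text rather than used for message authentication, and the server answers the request directly, whereas in the description above the ETR responds to map-request messages. The site name, key, and prefix in the usage note are taken from Example 5-1.

```python
import ipaddress
from typing import Dict, List, Optional, Tuple


class MapServer:
    """Toy MS/MR: sites register EID-to-RLOC mappings, ITRs resolve them."""

    def __init__(self, sites: Dict[str, Tuple[str, str]]):
        # site name -> (authentication key, EID prefix the site may register)
        self.sites = {
            name: (key, ipaddress.ip_network(prefix))
            for name, (key, prefix) in sites.items()
        }
        # registered EID prefix -> RLOCs of the site's ETRs
        self.database: Dict[ipaddress.IPv4Network, List[str]] = {}

    def map_register(self, site: str, key: str, eid_prefix: str,
                     rlocs: List[str]) -> bool:
        """Accept a registration only if the key and prefix match the site."""
        entry = self.sites.get(site)
        if entry is None:
            return False
        site_key, allowed = entry
        prefix = ipaddress.ip_network(eid_prefix)
        if key != site_key or not prefix.subnet_of(allowed):
            return False
        self.database[prefix] = list(rlocs)
        return True

    def map_request(self, eid: str) -> Optional[List[str]]:
        """Return the RLOCs for the longest registered prefix covering eid."""
        addr = ipaddress.ip_address(eid)
        matches = [p for p in self.database if addr in p]
        if not matches:
            return None
        return self.database[max(matches, key=lambda p: p.prefixlen)]
```

With `MapServer({"Site-B": ("password123", "192.168.1.0/24")})`, a registration of 192.168.1.0/24 with RLOC 10.2.0.1 and the correct key is accepted, and a later `map_request("192.168.1.10")` returns that RLOC, much as a DNS query returns the address registered for a name.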

LISP PETRs/PITRs

A LISP proxy egress tunnel router (PETR) implements ETR functions for non-LISP sites. It sends traffic to non-LISP sites (which are not part of the LISP domain). A PETR is called a border node in SDA terminology.

A LISP proxy ingress tunnel router (PITR) performs the mapping database lookups and LISP encapsulation of an ITR on behalf of non-LISP-capable sites that need to communicate with LISP-capable sites.

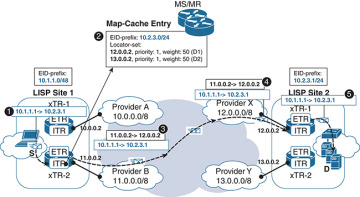

Now that you know the basic components of a LISP domain, let’s review the simple unicast packet flow in a LISP domain, which will help you visualize the function of each node described. Figure 5-17 shows this flow, which proceeds as follows:

Figure 5-17 Basic LISP Packet Flow

Step 1. Source 10.1.1.1/24 sends a packet to 10.2.3.1. The first-hop router (FHR) is the local xTR, and 10.2.3.1 is not in the IP routing table.

Step 2. The xTR checks its LISP map cache to see whether the RLOC for 10.2.3.1 is already cached from a previous communication. If not, it sends a map-request to the MS/MR to resolve the RLOC for 10.2.3.1. Note that the weight attribute advertised by the ETR of LISP site 2 to the MS can be changed to influence ingress routing into the site. In this case, the weights are equal (50).

Step 3. When the RLOC information is received from the MS/MR, the xTR at LISP site 1 encapsulates the packet in a LISP header, using an RLOC address of LISP site 2 as the destination IP address. This directs the packet to LISP site 2.

Step 4. At LISP site 2, the xTR de-encapsulates the packet from the LISP header and also updates the LISP map cache with 10.1.1.0/24.

Step 5. The IP packet (10.1.1.1 to 10.2.3.1) is forwarded out the appropriate interface (which could be in a VRF) to the destination.
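Step 2 notes that the weight advertised for each RLOC influences ingress routing into the site. In LISP, the lowest priority value is preferred, and weights split the load among RLOCs that share that priority. The sketch below shows one way an encapsulating xTR could apply those attributes per flow. The CRC32 flow hash, the `pick_rloc` function, and the second RLOC address in the usage note are assumptions for illustration; platforms choose their own hashing method.

```python
import zlib
from typing import List, Tuple

Rloc = Tuple[str, int, int]  # (address, priority, weight)


def pick_rloc(rlocs: List[Rloc], flow_key: str) -> str:
    """Pick a destination RLOC for a flow.

    Only RLOCs with the lowest priority value are candidates; among
    them, traffic is shared in proportion to weight. The hash keeps
    every packet of one flow on the same RLOC.
    """
    best_priority = min(priority for _, priority, _ in rlocs)
    candidates = [(addr, weight) for addr, priority, weight in rlocs
                  if priority == best_priority]
    total = sum(weight for _, weight in candidates)
    if total == 0:
        # No weights configured: fall back to the first candidate.
        return candidates[0][0]
    bucket = zlib.crc32(flow_key.encode()) % total
    for addr, weight in candidates:
        if bucket < weight:
            return addr
        bucket -= weight
    raise AssertionError("unreachable: bucket is always below total weight")
```

With two RLOCs at priority 1 and weight 50 each, for example `[("10.2.0.1", 1, 50), ("10.2.0.2", 1, 50)]`, roughly half of all flows land on each RLOC. Raising one weight shifts the share toward that RLOC, which is how the site influences its own ingress traffic.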

LISP and Multicast

The LISP multicast feature introduces support for carrying multicast traffic over a LISP overlay. This support currently allows for unicast transport of multicast traffic with headend replication at the root ITR site.
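Headend replication with unicast transport means the root ITR makes one copy of each multicast packet per interested remote site and sends each copy as a unicast LISP-encapsulated packet to that site's RLOC. The following is a minimal sketch of that idea only; the `EncapsulatedCopy` type, the `replicate_at_headend` function, and the receiver table are hypothetical, and how the receiver RLOCs are learned is outside the sketch.

```python
from typing import Dict, List, NamedTuple, Set


class EncapsulatedCopy(NamedTuple):
    outer_src: str   # RLOC of the root ITR (unicast)
    outer_dst: str   # RLOC of one receiver site (unicast)
    group: str       # inner multicast group address, unchanged
    payload: bytes


def replicate_at_headend(group: str, payload: bytes, local_rloc: str,
                         receiver_rlocs: Dict[str, Set[str]]
                         ) -> List[EncapsulatedCopy]:
    """Return one unicast-encapsulated copy per receiver-site RLOC.

    receiver_rlocs maps a multicast group to the RLOCs of the sites
    that have joined it. A group with no receivers yields no copies.
    """
    return [
        EncapsulatedCopy(local_rloc, rloc, group, payload)
        for rloc in sorted(receiver_rlocs.get(group, set()))
    ]
```

The cost of this approach is visible in the return value: the number of copies the root ITR must generate grows linearly with the number of receiver sites, which is why replication load belongs in the design discussion.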

The implementation of LISP multicast includes the following:

At the time of this writing, LISP multicast supports only IPv4 EIDs and IPv4 RLOCs.

LISP supports only PIM-SM and PIM-SSM at this time.

LISP multicast does not support the dynamic group-to-RP mapping distribution mechanisms Auto-RP and Bootstrap Router (BSR). Only static RP configuration is supported at the time of this writing.

LISP multicast does not support LISP VM mobility deployment at the time of this writing.

In an SDA environment, it is recommended to deploy the RP outside the fabric.

Example 5-1 is a LISP configuration example.

Example 5-1 LISP Configuration Example

xTR Config

    ip multicast-routing
    !
    interface LISP0
     ip pim sparse-mode
    !
    interface e1/0
     ip address 10.1.0.2 255.255.255.0
     ip pim sparse-mode
    !
    router lisp
     database-mapping 192.168.1.0/24 10.2.0.1 priority 1 weight 100
     ipv4 itr map-resolver 10.140.0.14
     ipv4 itr
     ipv4 etr map-server 10.140.0.14 key password123
     ipv4 etr
     exit
    !
    ! Routing protocol config <..>
    !
    ip pim rp-address 10.1.1.1

MR/MS Config

    ip multicast-routing
    !
    interface e3/0
     ip address 10.140.0.14 255.255.255.0
     ip pim sparse-mode
    !
    !
    router lisp
     site Site-ALAB
      authentication-key password123
      eid-prefix 192.168.0.0/24
      exit
     !
     site Site-B
      authentication-key password123
      eid-prefix 192.168.1.0/24
      exit
     !
     ipv4 map-server
     ipv4 map-resolver
     exit
    ! Routing protocol config <..>
    !
    ip pim rp-address 10.1.1.1

In this configuration, the MS/MR is in the data plane path for multicast sources and receivers.

For SDA, multicast configuration is automated from the GUI in the latest version of DNA Center. You do not need to configure interfaces or PIM modes using the CLI. The configuration shown here is provided only to illustrate what happens under the hood when multicast uses the LISP control plane. For the data plane, consider the VXLAN details covered in Chapter 4; with DNA Center, no CLI configuration is needed for the SDA data plane.