Actions

The essence of Workload Optimizer is action. Many infrastructure tools promise visibility, and some layer insights on top of that visibility. Workload Optimizer is designed to go further and act, in real time, to continuously drive the environment toward the desired state. Actions range from placement and scaling of workloads and storage, through infrastructure start, stop, provision, and decommission actions, to public cloud purchase recommendations, among many others.

Workload scaling and placement actions are typically the most common and the most impactful. For VMs, Workload Optimizer leverages the underlying hypervisor to scale resource capacity (for example, memory and CPU), reservations, and limits up or down, and to move VMs and datastores to nodes or clusters that can better support their needs. Similarly, in Kubernetes environments, Workload Optimizer offers actions to right-size container vCPU and vMem requests and limits, move pods to different nodes to free up resources or avoid congestion, and so on. Keep in mind that the list of supported actions, and whether each can be executed or automated via Workload Optimizer, varies widely by target type and is updated frequently. A current, detailed list of actions and their execution support status can be found in the Workload Optimizer Target Configuration Guide (http://cs.co/9006zF6Bi).

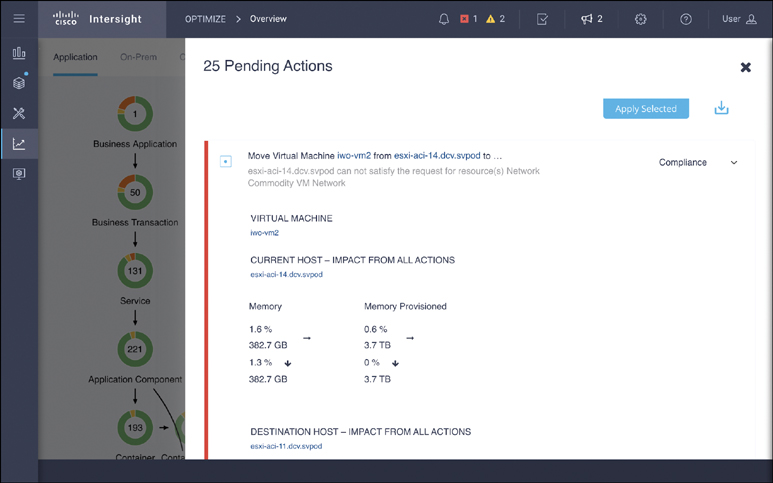

All actions follow an opt-in model: Workload Optimizer never takes an action unless given explicit permission to do so, either via direct user input or through a custom policy. You can view a list of current actions via the Pending Actions dashboard widget in the supply chain view, via the Actions tab after clicking a component in the supply chain, or in various scoped views and custom dashboards. Figure 9-6 shows an example of a specific move action.

Figure 9-6 Executing actions
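
The opt-in model described above can be illustrated with a minimal Python sketch. This is not Workload Optimizer code or its API: the names `Action`, `Policy`, `automated_kinds`, and `may_execute` are hypothetical and exist only to show the rule that an action runs solely when a user approves it directly or a custom policy explicitly allows it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    """A recommended action (hypothetical model, not a product type)."""
    kind: str    # for example "move" or "resize"
    target: str  # for example a VM or pod name


@dataclass(frozen=True)
class Policy:
    """Hypothetical custom policy: the action kinds it opts in to automation."""
    automated_kinds: frozenset = frozenset()


def may_execute(action: Action,
                policy: Optional[Policy] = None,
                user_approved: bool = False) -> bool:
    """Return True only if permission was explicitly given.

    Permission comes from direct user input (user_approved) or from a
    policy that lists this action's kind. With neither, nothing executes.
    """
    if user_approved:
        return True
    return policy is not None and action.kind in policy.automated_kinds


move = Action(kind="move", target="vm-01")
resize = Action(kind="resize", target="vm-01")
automate_moves = Policy(automated_kinds=frozenset({"move"}))

print(may_execute(move))                         # no permission given
print(may_execute(move, user_approved=True))     # direct user input
print(may_execute(move, policy=automate_moves))  # allowed by policy
print(may_execute(resize, policy=automate_moves))  # policy does not cover resize
```

The default return value is the point of the design: absent any explicit permission, the function refuses, so a new action kind stays a recommendation until someone opts in.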