Cisco Container Platform

Setting up, deploying, and managing multiple containers for multiple micro-sized services gets tedious—and difficult to manage across multiple public and private clouds. IT Ops has wound up doing much of this extra work, which makes it difficult for them to stay on top of the countless other tasks they’re already charged with performing. If containers are going to truly be useful at scale, we have to find a way to make them easier to manage.

The following are the requirements for managing container environments:

The ability to easily manage multiple clusters

Simple installation and maintenance

Networking and security consistency

Seamless application deployment, both on the premises and in public clouds

Persistent storage

That’s where Cisco Container Platform (CCP) comes in. CCP is a fully curated, lightweight container management platform for production-grade environments, powered by Kubernetes and delivered with Cisco enterprise-class support. It reduces the complexity of configuring, deploying, securing, scaling, and managing containers via automation, coupled with Cisco’s best practices for security and networking. CCP is built with an open architecture using open source components, so you’re not locked in to any single vendor. It works across both on-premises and public cloud environments. And because it’s optimized with Cisco HyperFlex, this preconfigured, integrated solution sets up in minutes.

The following are the benefits of CCP:

Reduced risk: CCP is a full-stack solution built and tested on Cisco HyperFlex and ACI Networking, with Cisco providing automated updates and enterprise-class support for the entire stack. CCP is built to handle production workloads.

Greater efficiency: CCP provides your IT Ops team with a turnkey, preconfigured solution that automates repetitive tasks and removes pressure on them to update people, processes, and skill sets in-house. It provides developers with flexibility and speed to be innovative and respond to market requirements more quickly.

Remarkable flexibility: CCP gives you choices when it comes to deployment—from hyperconverged infrastructure to VMs and bare metal. Also, because it’s based on open source components, you’re free from vendor lock-in.

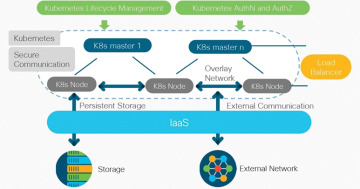

Figure 5-7 provides a holistic overview of CCP.

Figure 5-7 Holistic overview of CCP

Cisco Container Platform ushers all of the tangible benefits of container orchestration into the technology domain of the enterprise. Based on upstream Kubernetes, CCP presents a UI for self-service deployment and management of container clusters. These clusters consume private cloud resources based on established authentication profiles, which can be bound to existing RBAC models. The advantage to disparate organizational teams is the flexibility to consistently and efficiently deploy clusters into IaaS resources, a feat not easily accomplished and scaled when utilizing script-based frameworks. Teams can discriminately manage their cluster resources, including responding to conditions requiring a scale-out or scale-in event, without fear of disrupting another team’s assets. CCP boasts an innately open architecture composed of well-established open source components—a framework embraced by DevOps teams aiming their innovation toward cloud-neutral work streams.

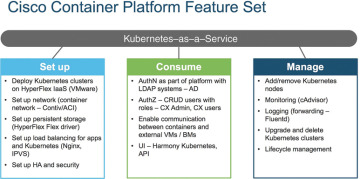

CCP deploys easily into an existing infrastructure, whether virtual or bare metal, to become the turnkey container management platform in the enterprise. CCP incorporates ubiquitous monitoring and policy-based security and provides essential services such as load balancing and logging. The platform can provide applications an extension into network management, application performance monitoring, analytics, and logging. CCP offers an API layer that is compatible with Google Cloud Platform and Google Kubernetes Engine, so moving applications from the private cloud to the public cloud can fit into existing orchestration schemes. The case could be made for containerized workloads residing in the private cloud on CCP to consume services brokered by Google Cloud Platform, and vice versa. For environments with a Cisco Application Centric Infrastructure (ACI), Contiv, a CCP component, will secure the containers in a logical policy-based context. Those environments with Cisco HyperFlex (HX) can leverage the inherent benefits provided by HX storage and provide persistent volumes to the containers in the form of FlexVolumes. CCP normalizes the operational experience of managing a Kubernetes environment by providing a curated, production-quality solution integrated with best-of-breed open source projects. Figure 5-8 illustrates the CCP feature set.

Figure 5-8 CCP feature set

The following are some CCP use cases:

Simple GUI-driven menu system to deploy clusters: You don’t have to know the technical details of Kubernetes to deploy a cluster. Just answer the questions, and CCP will do the work.

The ability to deploy Kubernetes clusters in air-gapped sites: CCP tenant images contain all the necessary binaries and don’t need Internet access to function.

Choice of networking solutions: Use Cisco’s ACI plug-in, an industry standard Calico network, or if scaling is your priority, choose Contiv with VPP. All work seamlessly with CCP.

Automated monthly updates: Bug fixes, feature enhancements, and CVE remedies are pushed automatically every month—not only for Kubernetes, but also for the underlying operating system (OS).

Built-in visibility and monitoring: CCP lets you see what’s going on inside clusters to stay on top of usage patterns and address potential problems before they negatively impact the business.

Preconfigured persistent volume storage: Dynamic provisioning using HyperFlex storage as the default. No additional drivers need to be installed. Just set it and forget it.

Deploy EKS clusters using CCP control plane: CCP allows you to use a single pane of glass for deploying on-premises and Amazon clusters, plus it leverages Amazon Authentication for both.

Pre-integrated Istio: It’s ready to deploy and use without additional administration.

Cisco Container Platform Architecture Overview

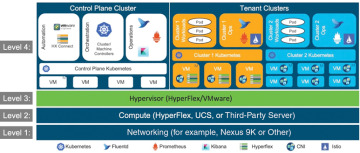

At the bottom of the stack is Level 1, the Networking layer, which can consist of Nexus switches, Application Policy Infrastructure Controllers (APICs), and Fabric Interconnects (FIs).

Level 2 is the Compute layer, which consists of HyperFlex, UCS, or third-party servers that provide virtualized compute resources through VMware and distributed storage resources.

Level 3 is the Hypervisor layer, which is implemented using HyperFlex or VMware.

Level 4 consists of the CCP control plane and data plane (or tenant clusters). In Figure 5-9, the left side shows the CCP control plane, which runs on four control-plane VMs, and the right side shows the tenant clusters. These tenant clusters are preconfigured to support persistent volumes using the vSphere Cloud Provider and Container Storage Interface (CSI) plug-in. Figure 5-9 provides an overview of the CCP architecture.

Figure 5-9 Container Platform Architecture Overview

Components of Cisco Container Platform

Table 5-2 lists the components of CCP.

Table 5-2 Components of CCP

| Function | Component |
|---|---|
| Operating System | Ubuntu |
| Orchestration | Kubernetes |
| IaaS | vSphere |
| Infrastructure | HyperFlex, UCS |
| Container Network Interface (CNI) | ACI, Contiv, Calico |
| SDN | ACI |
| Container Storage | HyperFlex Container Storage Interface (CSI) plug-in |
| Load Balancing | NGINX, Envoy |
| Service Mesh | Istio, Envoy |
| Monitoring | Prometheus, Grafana |
| Logging | Elasticsearch, Fluentd, and Kibana (EFK) stack |
| Container Runtime | Docker CE |

Sample Deployment Topology

This section describes a sample deployment topology of CCP and illustrates the network topology requirements at a conceptual level.

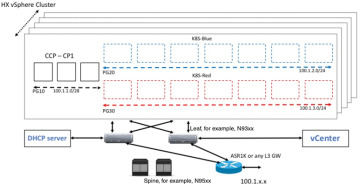

In this case, it is expected that the vSphere-based cluster is set up, provisioned, and fully functional for virtualization and virtual machine (VM) functionality before any installation of CCP. You can refer to the standard VMware documentation for details on vSphere installation. Figure 5-10 provides an example of a vSphere cluster on which CCP is to be deployed.

Figure 5-10 vSphere cluster on which CCP is to be deployed

Once the vSphere cluster is ready to provision VMs, the admin provisions one or more VMware port groups (for example, PG10, PG20, and PG30 in the figure) on which virtual machines will subsequently be provisioned as container cluster nodes. Basic L2 switching with VMware vSwitch functionality can be used to implement these port groups. IP subnets should be set aside for use on these port groups, and the VLANs used to implement these port groups should be terminated on an external L3 gateway (such as the ASR1K shown in the figure). The control-plane cluster and tenant-plane Kubernetes clusters of CCP can then be provisioned on these port groups.

All provisioned Kubernetes clusters may choose to use a single shared port group, or separate port groups may be provisioned (one per Kubernetes cluster), depending on the isolation needs of the deployment. Layer 3 network isolation may be used between these different port groups as long as the following conditions are met:

There is L3 IP address connectivity among the port group that is used for the control-plane cluster and the tenant cluster port groups

The IP address of the vCenter server is accessible from the control-plane cluster

A DHCP server is provisioned for assigning IP addresses to the installer and upgrade VMs, and it must be accessible from the port group of the control-plane cluster

The simplest functional topology would be to use a single shared port group for all clusters with a single IP subnet to be used to assign IP addresses for all container cluster VMs. This IP subnet can be used to assign one IP per cluster VM and up to four virtual IP addresses per Kubernetes cluster, but would not be used to assign individual Kubernetes pod IP addresses. Hence, a reasonable capacity planning estimate for the size of this IP subnet is as follows:

(The expected total number of container cluster VMs across all clusters) + 3 × (the total number of expected Kubernetes clusters)
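The estimate above is simple arithmetic, and a short Python sketch can make it concrete. The function names and the prefix-length helper are illustrative assumptions, not part of CCP. Note that the text mentions up to four virtual IP addresses per cluster, while the formula uses a multiplier of 3; the sketch keeps 3 as the default and exposes it as a parameter so that you can plan more conservatively.

```python
def estimate_subnet_addresses(total_cluster_vms: int,
                              kubernetes_clusters: int,
                              vips_per_cluster: int = 3) -> int:
    """Apply the capacity formula: one IP per cluster VM plus
    `vips_per_cluster` virtual IPs per Kubernetes cluster."""
    if min(total_cluster_vms, kubernetes_clusters, vips_per_cluster) < 0:
        raise ValueError("counts must be non-negative")
    return total_cluster_vms + vips_per_cluster * kubernetes_clusters


def smallest_prefix_for(addresses: int) -> int:
    """Return the longest IPv4 prefix length whose subnet still offers at
    least `addresses` usable host addresses (network and broadcast
    addresses are excluded). Illustrative helper, not a CCP feature."""
    for prefix in range(30, -1, -1):
        usable = 2 ** (32 - prefix) - 2
        if usable >= addresses:
            return prefix
    raise ValueError("too many addresses for a single IPv4 subnet")


# Example: 20 cluster VMs spread across 3 Kubernetes clusters.
needed = estimate_subnet_addresses(20, 3)
print(needed, smallest_prefix_for(needed))
```

The result is a lower bound only. Addresses consumed by the L3 gateway, the DHCP server, or other hosts on the same port group are not counted here and should be added to the estimate.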

Administering Clusters on vSphere

You can create, upgrade, modify, or delete vSphere on-premises Kubernetes clusters using the CCP web interface. CCP supports v2 and v3 clusters on vSphere. The v2 clusters use a single master node for their control plane, whereas the v3 clusters can use one or three master nodes for their control plane. The multimaster approach of v3 clusters is the preferred cluster type, as this approach ensures high availability for the control plane. The following steps show you how to administer clusters on vSphere:

Step 1. In the left pane, click Clusters and then click the vSphere tab.

Step 2. Click NEW CLUSTER.

Step 3. In the BASIC INFORMATION screen:

From the INFRASTRUCTURE PROVIDER drop-down list, choose the provider related to your Kubernetes cluster.

For more information, see Adding vSphere Provider Profile.

In the KUBERNETES CLUSTER NAME field, enter a name for your Kubernetes tenant cluster.

In the DESCRIPTION field, enter a description for your cluster.

In the KUBERNETES VERSION drop-down list, choose the version of Kubernetes that you want to use for creating the cluster.

If you are using ACI, specify the ACI profile.

For more information, see Adding ACI Profile.

Click NEXT.

Step 4. In the PROVIDER SETTINGS screen:

From the DATA CENTER drop-down list, choose the data center that you want to use.

From the CLUSTERS drop-down list, choose a cluster.

From the DATASTORE drop-down list, choose a datastore.

From the VM TEMPLATE drop-down list, choose a VM template.

From the NETWORK drop-down list, choose a network.

For v2 clusters that use HyperFlex systems:

The selected network must have access to the HyperFlex Connect server to support HyperFlex Storage Provisioners.

For HyperFlex Local Network, select k8-priv-iscsivm-network to enable HyperFlex Storage Provisioners.

From the RESOURCE POOL drop-down list, choose a resource pool.

Click NEXT.

Step 5. In the NODE CONFIGURATION screen:

From the GPU TYPE drop-down list, choose a GPU type.

For v3 clusters, under MASTER, choose the number of master nodes as well as their VCPU and memory configurations.

Under WORKER, choose the number of worker nodes as well as their VCPU and memory configurations.

In the SSH USER field, enter the SSH username.

In the SSH KEY field, enter the SSH public key that you want to use for creating the cluster.

In the ROUTABLE CIDR field, enter the IP addresses for the pod subnet in the CIDR notation.

From the SUBNET drop-down list, choose the subnet that you want to use for this cluster.

In the POD CIDR field, enter the IP addresses for the pod subnet in the CIDR notation.

In the DOCKER HTTP PROXY field, enter an HTTP proxy for Docker.

In the DOCKER HTTPS PROXY field, enter an HTTPS proxy for Docker.

In the DOCKER BRIDGE IP field, enter a valid CIDR to override the default Docker bridge.

Under DOCKER NO PROXY, click ADD NO PROXY and then specify a comma-separated list of hosts that you want to exclude from proxying.

In the VM USERNAME field, enter the VM username that you want to use as the login for the VM.

Under NTP POOLS, click ADD POOL to add a pool.

Under NTP SERVERS, click ADD SERVER to add an NTP server.

Under ROOT CA REGISTRIES, click ADD REGISTRY to add a root CA certificate to allow tenant clusters to securely connect to additional services.

Under INSECURE REGISTRIES, click ADD REGISTRY to add Docker registries created with unsigned certificates.

For v2 clusters, under ISTIO, use the toggle button to enable or disable Istio.

Click NEXT.

Step 6. For v2 clusters, to integrate Harbor with CCP:

In the Harbor Registry screen, click the toggle button to enable Harbor.

In the PASSWORD field, enter a password for the Harbor server administrator.

In the REGISTRY field, enter the size of the registry in gigabits.

Click NEXT.

Step 7. In the Summary screen, verify the configuration and then click FINISH.
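Before you work through the wizard, it can help to sanity-check the values you plan to enter. The following Python sketch is a hypothetical pre-flight check, not a CCP API: the dictionary keys (`cluster_type`, `master_count`, `pod_cidr`, `ssh_user`, `ssh_public_key`) are names invented for this illustration. It encodes two facts from the text, namely that v2 clusters use a single master node and v3 clusters use one or three, and that the POD CIDR field expects CIDR notation.

```python
import ipaddress

# Master node counts per cluster type, as described in the text.
ALLOWED_MASTER_COUNTS = {"v2": {1}, "v3": {1, 3}}


def validate_cluster_request(request: dict) -> list[str]:
    """Return a list of problems found in a hypothetical cluster request.
    An empty list means no problems were detected by these checks."""
    problems = []

    cluster_type = request.get("cluster_type")
    allowed = ALLOWED_MASTER_COUNTS.get(cluster_type)
    if allowed is None:
        problems.append(f"unknown cluster type: {cluster_type!r}")
    elif request.get("master_count") not in allowed:
        problems.append(
            f"{cluster_type} clusters allow {sorted(allowed)} master node(s)")

    # strict=True rejects values with host bits set, such as 192.168.0.1/16.
    try:
        ipaddress.ip_network(str(request.get("pod_cidr", "")), strict=True)
    except ValueError:
        problems.append("POD CIDR is not a valid network in CIDR notation")

    if not str(request.get("ssh_user", "")).strip():
        problems.append("SSH USER is empty")
    if not str(request.get("ssh_public_key", "")).strip():
        problems.append("SSH KEY is empty")

    return problems


request = {
    "cluster_type": "v3",
    "master_count": 3,
    "pod_cidr": "192.168.0.0/16",
    "ssh_user": "ccpuser",
    "ssh_public_key": "ssh-ed25519 AAAAC3 user@host",
}
print(validate_cluster_request(request))
```

These checks cover only a few of the fields in the NODE CONFIGURATION screen. The web interface remains the authority on what it accepts.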

Administering Amazon EKS Clusters Using CCP Control Plane

Before you begin, make sure you have done the following:

Added your Amazon provider profile.

Added the required AMI files to your account.

Created an AWS IAM role that CCP can use to create AWS EKS clusters.

Here is the procedure for administering Amazon EKS clusters using the CCP control plane:

Step 1. In the left pane, click Clusters and then click the AWS tab.

Step 2. Click NEW CLUSTER.

Step 3. In the Basic Information screen, enter the following information:

From the INFRASTRUCTURE PROVIDER drop-down list, choose the provider related to the appropriate Amazon account.

From the AWS REGION drop-down list, choose an appropriate AWS region.

In the KUBERNETES CLUSTER NAME field, enter a name for your cluster.

Click NEXT.

Step 4. In the Node Configuration screen, specify the following information:

From the INSTANCE TYPE drop-down list, choose an instance type for your cluster.

From the MACHINE IMAGE drop-down list, choose an appropriate CCP Amazon Machine Image (AMI) file.

In the WORKER COUNT field, enter an appropriate number of worker nodes.

In the SSH PUBLIC KEY drop-down field, choose an appropriate authentication key.

This field is optional. It is needed if you want to SSH into the worker nodes for troubleshooting purposes. Ensure that you use the Ed25519 or ECDSA format for the public key.

In the IAM ACCESS ROLE ARN field, enter the Amazon Resource Name (ARN) information.

Click NEXT.

Step 5. In the VPC Configuration screen, specify the following information:

In the SUBNET CIDR field, enter the overall subnet CIDR for your cluster.

In the PUBLIC SUBNET CIDR field, enter values for your cluster on separate lines.

In the PRIVATE SUBNET CIDR field, enter values for your cluster on separate lines.

Step 6. In the Summary screen, review the cluster information and then click FINISH.

Cluster creation can take up to 20 minutes. You can monitor the cluster creation status on the Clusters screen.
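Because a failed cluster creation can cost up to 20 minutes, checking the VPC and SSH key values ahead of time can be worthwhile. The Python sketch below is illustrative only, and all function names are hypothetical. It assumes (the text does not state this explicitly) that the public and private subnet CIDRs should fall inside the overall subnet CIDR and should not overlap one another. The SSH key check looks at the OpenSSH key type field, which begins with `ssh-ed25519` for Ed25519 keys and `ecdsa-sha2-` for ECDSA keys.

```python
import ipaddress

# OpenSSH key type prefixes for the formats the text asks for.
ACCEPTED_KEY_PREFIXES = ("ssh-ed25519", "ecdsa-sha2-")


def subnets_outside(overall_cidr: str, subnet_cidrs: list[str]) -> list[str]:
    """Return the subnets that are not contained in the overall CIDR."""
    overall = ipaddress.ip_network(overall_cidr)
    return [cidr for cidr in subnet_cidrs
            if not ipaddress.ip_network(cidr).subnet_of(overall)]


def overlapping_pairs(subnet_cidrs: list[str]) -> list[tuple[str, str]]:
    """Return every pair of subnets that share at least one address."""
    nets = [ipaddress.ip_network(cidr) for cidr in subnet_cidrs]
    return [(str(a), str(b))
            for i, a in enumerate(nets)
            for b in nets[i + 1:]
            if a.overlaps(b)]


def is_accepted_ssh_key(public_key: str) -> bool:
    """Check that the key type field names an Ed25519 or ECDSA key."""
    fields = public_key.split()
    return bool(fields) and fields[0].startswith(ACCEPTED_KEY_PREFIXES)


public = ["10.0.0.0/24", "10.0.1.0/24"]
private = ["10.0.10.0/24", "10.0.11.0/24"]
print(subnets_outside("10.0.0.0/16", public + private))
print(overlapping_pairs(public + private))
print(is_accepted_ssh_key("ssh-ed25519 AAAAC3 user@host"))
```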

Licensing and Updates

You need to configure Cisco Smart Software Licensing on the Cisco Smart Software Manager (Cisco SSM) to easily procure, deploy, and manage licenses for your CCP instance. The number of licenses required depends on the number of VMs necessary for your deployment scenario.

Cisco SSM enables you to manage your Cisco Smart Software Licenses from one centralized website. With Cisco SSM, you can organize and view your licenses in groups called “virtual accounts.” You can also use Cisco SSM to transfer the licenses between virtual accounts, as needed.

You can access Cisco SSM from the Cisco Software Central home page, under the Smart Licensing area. CCP is initially available for a 90-day evaluation period, after which you need to register the product.
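The registration deadline implied by the evaluation period is simple date arithmetic. The Python sketch below assumes, for illustration only, that the 90 days are counted from the date on which the CCP instance was installed; the function names are hypothetical, and the licensing page in the CCP web interface remains the authoritative source for the actual expiry.

```python
from datetime import date, timedelta

EVALUATION_PERIOD_DAYS = 90  # evaluation period stated in the text


def evaluation_deadline(install_date: date) -> date:
    """Return the date on which the evaluation period ends, assuming it
    starts on the installation date."""
    return install_date + timedelta(days=EVALUATION_PERIOD_DAYS)


def days_remaining(install_date: date, today: date) -> int:
    """Return the days left before registration is required; a negative
    value means the evaluation period has already ended."""
    return (evaluation_deadline(install_date) - today).days


installed = date(2024, 1, 1)
print(evaluation_deadline(installed))
print(days_remaining(installed, date(2024, 3, 1)))
```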

Connected Model

In a connected deployment model, the license usage information is directly sent over the Internet or through an HTTP proxy server to Cisco SSM.

For a higher degree of security, you can opt to use a partially connected deployment model, where the license usage information is sent from CCP to a locally installed VM-based satellite server (Cisco SSM satellite). Cisco SSM satellite synchronizes with Cisco SSM on a daily basis.

Registering CCP Using a Registration Token

You need to register your CCP instance with Cisco SSM or Cisco SSM satellite before the 90-day evaluation period expires. The following is the procedure for registering CCP using a registration token, and Figure 5-11 shows the workflow for this procedure.

Figure 5-11 Registering CCP using a registration token

Step 1. Perform these steps on Cisco SSM or Cisco SSM satellite to generate a registration token:

Go to Inventory > Choose Your Virtual Account > General and then click New Token.

If you want to enable higher levels of encryption for the products registered using the registration token, check the Allow Export-Controlled functionality on the products registered with this token check box.

Download or copy the token.

Step 2. Perform these steps in the CCP web interface to register the registration token and complete the license registration process:

In the left pane, click Licensing.

In the license notification, click Register.

The Smart Software Licensing Product Registration dialog box appears.

In the Product Instance Registration Token field, enter, copy and paste, or upload the registration token that you generated in Step 1.

Click REGISTER to complete the registration process.

Upgrading Cisco Container Platform

Upgrading CCP and upgrading tenant clusters are independent operations. You must upgrade CCP to allow tenant clusters to upgrade. Specifically, tenant clusters cannot be upgraded to a higher version than the control plane. For example, if the control plane is at version 1.10, the tenant cluster cannot be upgraded to the 1.11 version.
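The version rule above can be expressed as a small comparison. The Python sketch below is an illustration with hypothetical function names, and it assumes purely numeric, dot-separated version strings. Versions are compared as tuples of integers because a plain string comparison would incorrectly rank 1.9 above 1.10.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Turn a dotted version string such as '1.10' into (1, 10)."""
    return tuple(int(part) for part in version.split("."))


def can_upgrade_tenant(control_plane_version: str,
                       target_tenant_version: str) -> bool:
    """Return True when the target tenant version is not higher than the
    control-plane version."""
    control = parse_version(control_plane_version)
    target = parse_version(target_tenant_version)
    # Pad with zeros so that '1.10' and '1.10.0' compare as equal.
    width = max(len(control), len(target))
    control += (0,) * (width - len(control))
    target += (0,) * (width - len(target))
    return target <= control


# The example from the text: control plane at 1.10, tenant target 1.11.
print(can_upgrade_tenant("1.10", "1.11"))
```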

Upgrading CCP is a three-step process:

Upgrade the CCP tenant base VM.

Deploy/upgrade the VM.

Upgrade the CCP control plane.

You can update the size of a single IP address pool during an upgrade. However, we recommend that you plan ahead for the free IP address requirement by ensuring that the free IP addresses are available in the control-plane cluster prior to the upgrade. The number of free IP addresses required depends on the CCP version you are upgrading from:

If you are upgrading from CCP 3.1.x or earlier, ensure that at least five IP addresses are available.

If you are upgrading from CCP 3.2 or later, ensure that at least three IP addresses are available.

To get the latest step-by-step upgrade procedure, you can refer to the CCP upgrade guide.
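The free IP address requirement described in this section can be captured in a small lookup. The Python sketch below is illustrative, with hypothetical function names; it encodes only the two thresholds stated above (five addresses when upgrading from 3.1.x or earlier, three when upgrading from 3.2 or later) and assumes the version string starts with numeric major and minor components.

```python
def required_free_ips(current_ccp_version: str) -> int:
    """Return the free IP addresses needed in the control-plane cluster
    before upgrading from the given CCP version."""
    parts = current_ccp_version.split(".")
    major = int(parts[0])
    minor = int(parts[1]) if len(parts) > 1 else 0
    return 3 if (major, minor) >= (3, 2) else 5


def ready_for_upgrade(current_ccp_version: str, free_ips: int) -> bool:
    """Check whether the available free IPs meet the stated requirement."""
    return free_ips >= required_free_ips(current_ccp_version)


print(required_free_ips("3.1.4"), required_free_ips("3.2"))
print(ready_for_upgrade("3.1.4", free_ips=4))
```

Later CCP releases may change these thresholds, so treat the CCP upgrade guide as the authority for the version you are running.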