Adding Services to the Cloud

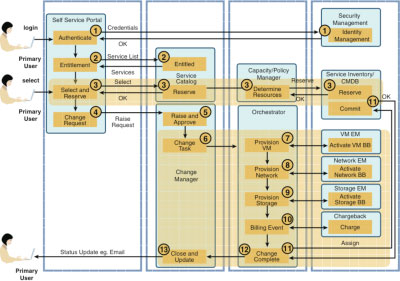

Figure 11-6 illustrates a generic provisioning and activation process. The major management components required to support it are as follows:

- A self-service portal that allows tenants to create, modify, and delete services and provides service assurance and usage views

- A security manager that manages all tenant credentials

- A service catalogue that stores all commercial and technical service definitions

- A change manager that orchestrates all tasks, manual and automatic, and acts as an auditable system of record for all tenant requests

- A capacity and/or policy manager responsible for managing infrastructure capacity and access policies

- An orchestrator that orchestrates technical actions and can provide simple capacity and policy decisions

- A CMDB/service inventory repository that stores asset and service data

- A set of element managers that communicate directly with the concrete infrastructure elements

Figure 11-6 Generic Orchestration

The orchestration steps illustrated in Figure 11-6 are as follows:

Step 1. The tenant connects to the portal and authenticates against the security manager, which can also provide group policy information used in Step 2.

Step 2. A list of entitled services is retrieved from the service catalogue and displayed in the portal, along with any existing service data and the assurance and usage views.
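
Steps 1 and 2 amount to filtering the service catalogue by the tenant's group membership. The following is a minimal Python sketch, assuming a hypothetical `CatalogueEntry` record and assuming the security manager has already returned the tenant's groups as a set of strings; the service and group names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogueEntry:
    """A service definition held in a hypothetical service catalogue."""

    name: str
    entitled_groups: frozenset[str]


def entitled_services(catalogue: list[CatalogueEntry], user_groups: set[str]) -> list[str]:
    """Return the names of the services the tenant is entitled to see.

    user_groups stands in for the group policy information supplied by the
    security manager at login (Step 1); the filtering itself is Step 2.
    """
    return [entry.name for entry in catalogue if entry.entitled_groups & user_groups]


catalogue = [
    CatalogueEntry("On-board a New POD", frozenset({"cpia"})),
    CatalogueEntry("Create Network Container", frozenset({"network-designer"})),
    CatalogueEntry("Create Application", frozenset({"app-designer"})),
]

print(entitled_services(catalogue, {"network-designer"}))  # ['Create Network Container']
```

In a real deployment the entitlement rules would live in the catalogue or policy manager rather than in code; the sketch only shows the shape of the lookup.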

Step 3. The provisioning process begins with the tenant selecting the required service and ends with the technical building blocks that support the service being reserved in the service inventory or CMDB. Depending on the management components deployed, the validation that the service can be fulfilled, given the constraints and capabilities provided by the POD, is performed either by a separate capacity or policy manager or by the orchestrator. Note that the orchestrator/capacity manager does not necessarily make detailed placement decisions for the activation of the service. Those decisions, for example, on which blade in a vSphere/ESX cluster to place a resource, are typically made in the element manager, which maintains detailed, real-time usage data.
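
The validation in Step 3 is a coarse feasibility check against POD-level capabilities and free capacity, not a placement decision. A minimal sketch follows; the `Pod` and `ServiceRequest` fields, the capability labels, and the numbers are hypothetical, and real capacity managers track far more dimensions.

```python
from dataclasses import dataclass


@dataclass
class Pod:
    """Aggregate view of a POD as a capacity/policy manager might hold it."""

    name: str
    capabilities: set[str]
    free_vcpu: int
    free_vram_gb: int


@dataclass
class ServiceRequest:
    required_capabilities: set[str]
    vcpu: int
    vram_gb: int


def candidate_pods(pods: list[Pod], request: ServiceRequest) -> list[str]:
    """Return the PODs whose capabilities and free capacity can fulfil the request.

    This never picks a blade or host; that is left to the element manager
    and its real-time usage data.
    """
    return [
        pod.name
        for pod in pods
        if request.required_capabilities <= pod.capabilities  # subset test
        and pod.free_vcpu >= request.vcpu
        and pod.free_vram_gb >= request.vram_gb
    ]


pods = [
    Pod("pod-a", {"vsphere", "nexus1000v"}, free_vcpu=64, free_vram_gb=256),
    Pod("pod-b", {"vsphere"}, free_vcpu=512, free_vram_gb=2048),
]
print(candidate_pods(pods, ServiceRequest({"vsphere", "nexus1000v"}, vcpu=8, vram_gb=32)))
# ['pod-a']
```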

Step 4. The portal will create a change request to manage the delivery of the request or order.

Step 5. The change is approved. This could simply be an automatic approval, or the change can be passed into a formal change management process for review.

Step 6. The activation process begins. The change is decomposed into at least one change task that is passed to the orchestrator.
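
Steps 5 and 6 can be pictured as an approval gate followed by decomposition. This is a minimal sketch under stated assumptions: the `ChangeRequest`/`ChangeTask` records and the task-ID format are hypothetical, and the rule of one task per building block is only one possible decomposition (the text requires just "at least one" task).

```python
from dataclasses import dataclass, field


@dataclass
class ChangeTask:
    task_id: str
    action: str
    building_block: str


@dataclass
class ChangeRequest:
    change_id: str
    building_blocks: list[str]
    approved: bool = False
    tasks: list[ChangeTask] = field(default_factory=list)


def decompose(change: ChangeRequest, action: str = "create") -> list[ChangeTask]:
    """Split an approved change into change tasks (assumed: one per building block)."""
    if not change.approved:
        # Step 5 must have approved the change before activation can begin.
        raise ValueError(f"change {change.change_id} has not been approved")
    if not change.building_blocks:
        raise ValueError("a change must decompose into at least one task")
    change.tasks = [
        ChangeTask(f"{change.change_id}-T{i}", action, block)
        for i, block in enumerate(change.building_blocks, start=1)
    ]
    return change.tasks


change = ChangeRequest("CHG0001", ["vm-web01", "vm-db01"], approved=True)
for task in decompose(change):
    print(task.task_id, task.action, task.building_block)
```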

Steps 7, 8, 9. These steps are managed by the orchestrator, which instantiates the concrete building blocks based on the service definition by communicating with the various element managers. For example, in the case of a Vblock, the orchestrator would communicate with VMware vCenter to create, clone, modify, or delete a virtual machine. Up to this point, the service data has been abstracted away from the specific implementation. Now the orchestrator extracts the relevant data and passes it to each element manager through that element manager's specific APIs.
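
The hand-off in Steps 7 to 9, where abstract service data is translated into element-manager-specific calls only at the last moment, resembles an adapter pattern. The sketch below assumes hypothetical adapter classes and invented payload field names; it does not reproduce the vSphere API or any other real element manager interface.

```python
from abc import ABC, abstractmethod


class ElementManagerAdapter(ABC):
    """Translates an abstract building block into one element manager's payload."""

    @abstractmethod
    def to_payload(self, block: dict) -> dict: ...


class VirtualMachineAdapter(ElementManagerAdapter):
    # Hypothetical payload shape; a real vCenter integration would use the
    # vSphere API's own objects and field names instead.
    def to_payload(self, block: dict) -> dict:
        return {
            "operation": block["action"],
            "vm_name": block["name"],
            "cpu_count": block["vcpu"],
            "memory_mb": block["vram_gb"] * 1024,
        }


class VirtualNetworkAdapter(ElementManagerAdapter):
    # Hypothetical payload shape for a virtual network element manager.
    def to_payload(self, block: dict) -> dict:
        return {"op": block["action"], "segment": block["name"], "vlan": block["vlan_id"]}


ADAPTERS: dict[str, ElementManagerAdapter] = {
    "vm": VirtualMachineAdapter(),
    "network": VirtualNetworkAdapter(),
}


def dispatch(blocks: list[dict]) -> list[tuple[str, dict]]:
    """Route each abstract block to its adapter; implementation details appear only here."""
    return [(block["type"], ADAPTERS[block["type"]].to_payload(block)) for block in blocks]


service = [
    {"type": "network", "action": "create", "name": "web-net", "vlan_id": 110},
    {"type": "vm", "action": "clone", "name": "web01", "vcpu": 2, "vram_gb": 4},
]
for target, payload in dispatch(service):
    print(target, payload)
```

Keeping the translation in per-manager adapters means the service definition itself never needs to know which element manager fulfils it.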

Step 10. A billing event is created that will be used to charge for fixed items such as adding more vRAM or another vCPU.
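
A billing event for fixed items can be as simple as one record per item added. This minimal sketch assumes a hypothetical price list and event schema; how a real billing system prices, aggregates, or credits removals is outside what the text describes.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical monthly unit prices for fixed items.
PRICE_LIST = {"vcpu": 10.0, "vram_gb": 5.0}


@dataclass(frozen=True)
class BillingEvent:
    tenant: str
    item: str
    quantity: int
    unit_price: float
    created: datetime

    @property
    def amount(self) -> float:
        return self.quantity * self.unit_price


def billing_events(tenant: str, added_items: dict[str, int]) -> list[BillingEvent]:
    """Create one event per fixed item added by the completed change."""
    now = datetime.now(timezone.utc)
    return [
        BillingEvent(tenant, item, qty, PRICE_LIST[item], now)
        for item, qty in added_items.items()
        if qty > 0  # only additions are charged in this sketch
    ]


events = billing_events("acme", {"vcpu": 2, "vram_gb": 4})
print(sum(e.amount for e in events))  # 40.0
```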

Step 11. The orchestration has completed successfully, so all resources are committed in the service inventory and the change task is closed. This flow represents the "happy day" scenario, in which all process steps complete successfully. A more detailed process would also document rollback and compensation steps, but this is beyond the scope of this chapter.
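
The reserve-then-commit lifecycle from Step 3 through Step 11 can be sketched as a small state machine. The `ServiceInventory` class is a hypothetical in-memory stand-in, and releasing reservations on failure is a deliberately crude substitute for the rollback and compensation steps the text leaves out of scope.

```python
from enum import Enum


class State(Enum):
    RESERVED = "reserved"    # assigned to a request but not yet committed
    COMMITTED = "committed"  # in use by the tenant's service
    RELEASED = "released"    # returned to the pool


class ServiceInventory:
    """Toy service inventory tracking the reserve -> commit/release lifecycle."""

    def __init__(self) -> None:
        self._records: dict[str, State] = {}

    def reserve(self, resource_id: str) -> None:
        current = self._records.get(resource_id)
        if current in (State.RESERVED, State.COMMITTED):
            raise ValueError(f"{resource_id} is already {current.value}")
        self._records[resource_id] = State.RESERVED

    def _finish(self, resource_id: str, new_state: State) -> None:
        if self._records.get(resource_id) is not State.RESERVED:
            raise ValueError(f"{resource_id} is not reserved")
        self._records[resource_id] = new_state

    def commit(self, resource_id: str) -> None:
        self._finish(resource_id, State.COMMITTED)

    def release(self, resource_id: str) -> None:
        self._finish(resource_id, State.RELEASED)

    def state(self, resource_id: str):
        return self._records.get(resource_id)


def close_task(inventory: ServiceInventory, resource_ids: list[str], succeeded: bool) -> None:
    """Commit reservations on the happy day; otherwise release them (a crude rollback)."""
    for resource_id in resource_ids:
        if succeeded:
            inventory.commit(resource_id)
        else:
            inventory.release(resource_id)


inventory = ServiceInventory()
for rid in ("vm-web01", "vlan-110"):
    inventory.reserve(rid)
close_task(inventory, ["vm-web01", "vlan-110"], succeeded=True)
print(inventory.state("vm-web01").value)  # committed
```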

Step 12. The flow of control is passed back to the change manager, and this marks the end of the activation process. The change manager might then start another task or, if only one task is present, close the overall change request.

Step 13. A notification is passed back directly to the tenant, indicating that the request has been completed. Alternatively, this notification could be sent to the portal if the portal maintains request data.

As discussed previously, there might be several provisioning steps, so you might need to iterate through this process several times.

Provisioning the Infrastructure Model

We now look at the steps needed to provision the tenant model shown in Figure 11-4. This assumes that the physical building blocks have already been racked, stacked, cabled, and configured in the data center:

- The cloud provider infrastructure administrator (CPIA) will log in to the self-service portal and be presented with a set of services that he is entitled to see, one of which is On-board a New POD. The CPIA will select this service; complete all the details required for this service, such as management IP addresses, constraints, and capabilities; and submit the request. The reservation step is skipped here because this service is creating new resources.

- As this is a significant change, this service will go through an approval process that will see infrastructure owners and cloud teams review and approve the request.

- After the request is approved, only a single change task is needed because the infrastructure already exists: a task to update the CMDB, which is passed to the orchestrator.

- The orchestrator has little to do other than call the CMDB/service inventory and create the appropriate configuration items (CIs) and their relationships. Optionally, the orchestrator can also update service assurance components to ensure that the new resources are being managed, but in most cases, this has already been done as part of the physical deployment process.

- A success notification is generated up the stack, and the request is shown as complete in the portal.
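
Because the On-board a New POD service only records existing infrastructure, the orchestration reduces to CMDB writes. A minimal sketch follows, assuming a hypothetical in-memory `Cmdb` class, invented CI class names, and a documentation-range management IP; a real CMDB would be called through its own API.

```python
from dataclasses import dataclass, field


@dataclass
class Cmdb:
    """In-memory stand-in for a CMDB/service inventory."""

    cis: dict[str, dict] = field(default_factory=dict)
    relationships: set[tuple[str, str, str]] = field(default_factory=set)

    def create_ci(self, ci_id: str, ci_class: str, **attributes) -> None:
        if ci_id in self.cis:
            raise ValueError(f"CI {ci_id} already exists")
        self.cis[ci_id] = {"class": ci_class, **attributes}

    def relate(self, parent: str, relation: str, child: str) -> None:
        for ci_id in (parent, child):
            if ci_id not in self.cis:
                raise KeyError(f"unknown CI {ci_id}")
        self.relationships.add((parent, relation, child))


def onboard_pod(cmdb: Cmdb, pod_id: str, management_ip: str, components: dict[str, str]) -> None:
    """Record an already-racked POD and its components; nothing is configured."""
    cmdb.create_ci(pod_id, "POD", management_ip=management_ip)
    for component_id, component_class in components.items():
        cmdb.create_ci(component_id, component_class)
        cmdb.relate(pod_id, "contains", component_id)


cmdb = Cmdb()
onboard_pod(cmdb, "pod-01", "192.0.2.10", {"compute-01": "ComputeChassis", "switch-01": "AccessSwitch"})
print(sorted(cmdb.relationships))
```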

Provisioning the Organization and VDC

The same process used by the CPIA is followed by the cloud provider customer administrator (CPAD), but a few differences exist:

- The CPAD will be entitled to a different set of services than the CPIA.

- The approval process will now be more commercial/financial in nature, checking that all the agreed-upon terms and conditions are in place and that credit checks have been done.

- Orchestration activities will manage interactions with the CMDB to create the organization and VDC CIs, add user accounts to the identity repository so that the tenant can log in to the portal, add VDC resource limits to the capacity/policy manager, and set up any branding required for the tenant in the portal.

Creating the Network Container

The same process is followed by the tenant network designer, but a few differences exist:

- The network designer (ND) logs in to the portal using the credentials set up by the CPAD and is presented with a set of services oriented around creating, modifying, and deleting the network container. Depending on the complexity of the network design, the network designer role could belong to either the consumer or the provider.

- The ND selects the virtual network building blocks he requires and submits the request. As this is a real-time system, the resources are reserved so that they are assigned (but not committed) to this request. The capacity manager will make sure that sufficient capacity exists in the infrastructure and that the organization has contracted enough capacity before reserving any resources.

- If the organization has contracted enough capacity and there is enough infrastructure capacity, the change is preapproved and the approval process is skipped.

- Orchestration activities will manage interactions with the element managers responsible for automating and activating the configuration of the virtual network elements in a specific POD, and will generate billing events so that the tenant can be billed for what he has consumed.

- A success notification is generated up the stack, and the request is shown as complete in the portal. The resources that were reserved are now committed in the service inventory and/or CMDB.
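
The preapproval rule for the network container combines two checks: the organization's contracted capacity and the free infrastructure capacity. A minimal sketch, assuming hypothetical resource names and plain dictionaries for both capacity views; routing everything else to manual approval is an assumption, since the text only describes the preapproved path.

```python
def approval_route(requested: dict[str, int],
                   contracted_remaining: dict[str, int],
                   infrastructure_free: dict[str, int]) -> str:
    """Decide whether a change can skip the approval process.

    Preapproved only if every requested resource fits both within what the
    organization has contracted and within free infrastructure capacity.
    """
    fits_contract = all(qty <= contracted_remaining.get(r, 0) for r, qty in requested.items())
    fits_infrastructure = all(qty <= infrastructure_free.get(r, 0) for r, qty in requested.items())
    if fits_contract and fits_infrastructure:
        return "preapproved"
    return "manual-approval"  # assumed fallback; the text only defines the preapproved path


request = {"vlans": 2, "virtual_firewalls": 1}
print(approval_route(request, {"vlans": 10, "virtual_firewalls": 2}, {"vlans": 400, "virtual_firewalls": 8}))
```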

Creating the Application

The same process used by the network designer is followed by the tenant application designer, but a few differences exist:

- The cloud consumer application designer (CCAD) logs in to the portal using the credentials set up by the CPAD and is presented with a set of services oriented around creating, modifying, and deleting the application container.

- The CCAD selects the application building blocks he requires and submits the request. The network building blocks created by the network designer will also be presented in the portal to allow the application designer to specify which network he wants the application elements to connect to. As this is a real-time system, the resources are reserved.

- Orchestration activities will manage interactions with the element managers responsible for automating and activating the configuration of the virtual machines and deploying software images, and will generate billing events so that the tenant can be billed for what he has consumed.

- A success notification is generated up the stack, and the request is shown as complete in the portal. The resources that were reserved are now committed in the service inventory and/or CMDB.
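
Presenting the network designer's building blocks to the application designer implies two small checks: which networks to offer, and whether each requested VM attaches to one of them. The sketch below assumes hypothetical `NetworkBlock` and `VmRequest` records and assumes that only committed networks owned by the same organization should be offered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NetworkBlock:
    name: str
    organization: str
    committed: bool


@dataclass(frozen=True)
class VmRequest:
    name: str
    network: str


def network_choices(blocks: list[NetworkBlock], organization: str) -> list[str]:
    """Networks the portal can offer: committed blocks owned by this organization."""
    return sorted(b.name for b in blocks if b.organization == organization and b.committed)


def invalid_attachments(vms: list[VmRequest], choices: list[str]) -> list[str]:
    """Names of VMs that reference a network outside the offered choices."""
    allowed = set(choices)
    return [vm.name for vm in vms if vm.network not in allowed]


blocks = [
    NetworkBlock("web-net", "acme", committed=True),
    NetworkBlock("db-net", "acme", committed=True),
    NetworkBlock("test-net", "acme", committed=False),  # still only reserved
    NetworkBlock("web-net-2", "globex", committed=True),
]
choices = network_choices(blocks, "acme")
print(choices)  # ['db-net', 'web-net']
print(invalid_attachments([VmRequest("web01", "web-net"), VmRequest("web02", "test-net")], choices))  # ['web02']
```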

Workflow Design

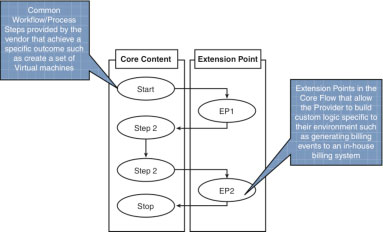

The workflows covered in the preceding sections will vary. Some will be based on out-of-the-box content provided by an orchestration/automation vendor such as Cisco, and some will be completely bespoke; most will be a combination of the two. It is important to balance flexibility and supportability. On the one hand, you don’t want to build a standardized, fixed set of workflows that cannot be customized or changed; on the other hand, you don’t want to build technical workflows that are completely bespoke and unsupportable. One potential solution is to use the concepts of moments and extension points, which allow flexible workflows while introducing a level of standardization that eases support and upgrades. Figure 11-7 illustrates these concepts.

Figure 11-7 Workflow Design

The core content consists of workflow moments, where a moment is a point in time in the technical orchestration workflow. Some example moments are as follows:

- Trigger and decomposition: This moment is where the flow is triggered and standard payload items are decomposed into workflow attributes. An example is the action variable, which is currently used to determine the child workflow to trigger but might also need to be persisted in the workflow for billing updates and so on.

- Workflow enrichment and resource management: This moment is where data is extracted from systems using standard adapters and any resource management or ingress checking is performed.

- Orchestration: This is the overarching orchestration flow.

- Standard actions: These are the standard automation action sequences provided by the vendor.

- Standard notifications and updates: This step updates any inventory repositories (CMDBs) and other components provided with the solution, such as the cloud portal, change manager, and so on.
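
The moment concept can be sketched as an ordered list of core functions that all read and write a shared workflow context, with the overarching orchestration flow simply running them in sequence. Every function body here is a hypothetical placeholder standing in for vendor-supplied content.

```python
from typing import Callable

Context = dict
Moment = Callable[[Context], None]


def trigger_and_decomposition(ctx: Context) -> None:
    # Decompose standard payload items into workflow attributes.
    ctx["action"] = ctx["payload"]["action"]
    ctx["service"] = ctx["payload"]["service"]


def enrichment_and_resource_management(ctx: Context) -> None:
    # Placeholder for standard adapter lookups and ingress checks.
    ctx["pod"] = "pod-01"


def standard_actions(ctx: Context) -> None:
    # Placeholder for the vendor-supplied automation sequence.
    ctx.setdefault("log", []).append(f"{ctx['action']} {ctx['service']} on {ctx['pod']}")


def standard_notifications_and_updates(ctx: Context) -> None:
    # Placeholder for updating the CMDB, portal, and change manager.
    ctx["status"] = "complete"


CORE_MOMENTS: list[Moment] = [
    trigger_and_decomposition,
    enrichment_and_resource_management,
    standard_actions,
    standard_notifications_and_updates,
]


def orchestrate(payload: dict) -> Context:
    """The overarching orchestration flow: run each core moment in order."""
    ctx: Context = {"payload": payload}
    for moment in CORE_MOMENTS:
        moment(ctx)
    return ctx


result = orchestrate({"action": "create", "service": "web-tier"})
print(result["log"], result["status"])
```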

The core content can be extended to support bespoke functions using extension points. The idea is that every process contains a call or dummy process element that is triggered after the core task has completed, so that customized actions can be handled without changes to the core workflow. Example extension points are as follows:

- Trigger and decomposition: This extension point is where custom service data received from the calling system/portal is decomposed into variables used in the rest of the workflow. This will allow designers to quickly add service options/data in the requesting system and handle this data in a standard manner without changing the core decomposition logic.

- OSS enrichment and resource management: This extension point is where custom service data is requested through custom WS* calls or other nonstandard methods and added to the workflow runtime data. This will allow designers to integrate with clients’ specific systems without changing the core enrichment logic.

- Actions: This extension point is where custom actions are performed using WS* calls or other nonstandard methods. This will allow designers to integrate with clients’ specific automation sequences without changing the core automation logic.

- Notifications: This extension point is where custom notifications are performed using WS* calls or other nonstandard methods. This will allow designers to integrate with clients’ specific automation systems without changing the core notification logic.
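
Extension points can be modeled as named hooks that run after each core step and do nothing when no hook is registered, which mirrors the "dummy process element" described above. The registry class, point names, and the custom `backup_policy` option and ticketing target are all hypothetical illustrations.

```python
from typing import Callable

Step = Callable[[dict], None]


class ExtensionPoints:
    """Registry of customer-specific hooks, keyed by extension point name."""

    def __init__(self) -> None:
        self._hooks: dict[str, list[Step]] = {}

    def register(self, point: str, hook: Step) -> None:
        self._hooks.setdefault(point, []).append(hook)

    def run(self, point: str, ctx: dict) -> None:
        # An extension point with no hooks behaves as the "dummy" element: a no-op.
        for hook in self._hooks.get(point, []):
            hook(ctx)


def run_workflow(core: list[tuple[str, Step]], extensions: ExtensionPoints, ctx: dict) -> dict:
    for point, core_step in core:
        core_step(ctx)              # vendor-supplied core logic, left untouched
        extensions.run(point, ctx)  # bespoke logic, triggered after the core task
    return ctx


def core_decomposition(ctx: dict) -> None:
    ctx["action"] = ctx["payload"]["action"]


def core_notifications(ctx: dict) -> None:
    ctx.setdefault("notified", []).append("cloud-portal")


def custom_decomposition(ctx: dict) -> None:
    # Hypothetical custom service option added in the requesting portal.
    ctx["backup_policy"] = ctx["payload"].get("custom", {}).get("backup_policy", "none")


def custom_notifications(ctx: dict) -> None:
    # Hypothetical client-specific system notified via a nonstandard call.
    ctx.setdefault("notified", []).append("client-ticketing")


extensions = ExtensionPoints()
extensions.register("trigger_and_decomposition", custom_decomposition)
extensions.register("notifications", custom_notifications)

core = [("trigger_and_decomposition", core_decomposition), ("notifications", core_notifications)]
result = run_workflow(core, extensions, {"payload": {"action": "create", "custom": {"backup_policy": "daily"}}})
print(result["backup_policy"], result["notified"])
```

Because custom logic only ever attaches through `register`, the core steps can be upgraded by the vendor without merging customer changes into them, which is the supportability benefit the text describes.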