Creation and Placement Strategies

The previous sections established that any form of activation requires a placement decision. These decisions are typically made on the following grounds:

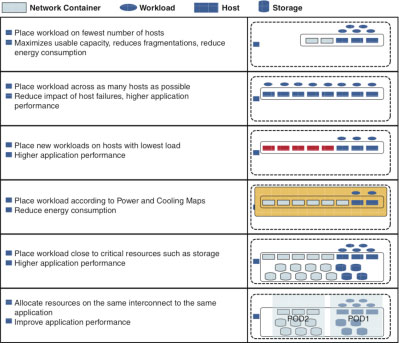

- Maximizing resource usage: The provider wants to ensure that it gets the maximum workload density across its infrastructure. This means optimizing CAPEX (the amount of money spent on purchasing equipment).

- Maximizing resilience: The provider spreads workloads across multiple hosts to minimize the impact of a failure; this usually means that hosts are underutilized.

- Maximize usage: This is similar to maximizing resource usage, but it requires a point-in-time decision about which host is least loaded now and over the time span of the workload. Think of Amazon's EC2 Spot Instances.

- Maximize facilities usage: The provider wants to place workloads based on facilities usage and to reduce energy consumption.

- Maximize application performance: Place all building blocks in the same domain or data center interconnect.

Figure 11-8 illustrates these concepts.

Figure 11-8 Generic Resource Allocation Strategies

Certain placement strategies are simpler to implement than others. For example, placing workloads based on current load can be done quite simply in a VMware environment because the API supports it if Distributed Resource Scheduler (DRS) is enabled. The orchestrator can query vCenter to determine DRS placement options or simply create a VM in a cluster and let DRS decide placement. Maximizing facilities usage is substantially more difficult because it requires the following:

- A system that understands facilities usage

- An interface to query this system

- The creation of an adapter that queries this system in the orchestrator and the logic associated with this query
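
The three needs above can be sketched as a small adapter. This is a minimal illustration, not a real product interface: `FacilityReading`, `FacilitiesSystem`, `StaticFacilitiesSystem`, and `FacilitiesAdapter` are hypothetical names, and ranking PODs by remaining power headroom is only one assumed way to express a facilities-aware placement rule.

```python
from dataclasses import dataclass
from typing import Iterable, List, Protocol


@dataclass(frozen=True)
class FacilityReading:
    """Hypothetical facilities data for one POD."""
    pod: str
    power_kw: float           # current power draw
    power_capacity_kw: float  # provisioned power budget


class FacilitiesSystem(Protocol):
    """Hypothetical query interface exposed by the facilities system."""

    def read(self, pod: str) -> FacilityReading: ...


class StaticFacilitiesSystem:
    """Stand-in backend returning canned readings (illustration only)."""

    def __init__(self, readings: Iterable[FacilityReading]) -> None:
        self._readings = {r.pod: r for r in readings}

    def read(self, pod: str) -> FacilityReading:
        return self._readings[pod]


class FacilitiesAdapter:
    """Orchestrator-side adapter: queries the system and applies the logic."""

    def __init__(self, system: FacilitiesSystem) -> None:
        self._system = system

    def power_headroom(self, pod: str) -> float:
        """Fraction of the POD's power budget that is still unused."""
        reading = self._system.read(pod)
        return 1.0 - reading.power_kw / reading.power_capacity_kw

    def rank_pods(self, pods: Iterable[str]) -> List[str]:
        """Order PODs from most to least power headroom (assumed rule)."""
        return sorted(pods, key=self.power_headroom, reverse=True)
```

An orchestrator would call `rank_pods` with the candidate PODs and combine the result with its other placement data. A provider that prefers to consolidate load and power down idle PODs could reverse the ordering.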

A single placement strategy can be adopted, or the provider can adopt multiple strategies with prioritization. Typically, a public cloud provider will look to optimize its resource usage and reduce its energy consumption ahead of application performance. The exception is a consumer who chooses to pay for better application performance, in which case the service is placed on, or migrated to, a POD that supports that requirement. A private cloud provider, by contrast, might prioritize resilience and application performance over resource optimization.
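
One way to picture multiple strategies with prioritization is a weighted score per POD. The sketch below is an assumption-laden illustration: the `Pod` fields, the `STRATEGIES` scoring functions, and the weights are all hypothetical, and a real capacity manager would use far richer data.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Pod:
    """Hypothetical per-POD metrics, each normalized to the range 0..1."""
    name: str
    utilization: float  # fraction of compute already in use
    energy_cost: float  # relative energy cost, lower is better
    locality: float     # 1.0 means all building blocks are co-located


# Each strategy maps a POD to a score in 0..1 where higher is better.
STRATEGIES = {
    "resource_usage": lambda p: p.utilization,    # pack onto busy PODs
    "resilience": lambda p: 1.0 - p.utilization,  # spread onto idle PODs
    "energy": lambda p: 1.0 - p.energy_cost,
    "performance": lambda p: p.locality,
}


def choose_pod(pods: Iterable[Pod], priorities: Dict[str, float]) -> Pod:
    """Return the POD with the highest weighted score.

    `priorities` maps a strategy name to its weight; a larger weight
    means the strategy matters more to this provider or tenant.
    """
    def score(pod: Pod) -> float:
        return sum(w * STRATEGIES[name](pod) for name, w in priorities.items())

    return max(pods, key=score)


pods = [
    Pod("pod-a", utilization=0.7, energy_cost=0.3, locality=0.2),
    Pod("pod-b", utilization=0.3, energy_cost=0.6, locality=0.9),
]

# Assumed default: resource usage and energy ahead of performance.
default_weights = {"resource_usage": 0.5, "energy": 0.4, "performance": 0.1}
# Assumed premium tier: the consumer pays for application performance.
premium_weights = {"resource_usage": 0.2, "energy": 0.1, "performance": 0.7}

default_choice = choose_pod(pods, default_weights).name
premium_choice = choose_pod(pods, premium_weights).name
```

With these made-up numbers the default weights select `pod-a` and the premium weights select `pod-b`, which mirrors how paying for performance changes the placement.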

Choosing which POD supports a particular placement strategy means combining dynamic data, such as vCenter DRS placement options, with the capabilities and constraints that were modeled when the POD was on-boarded, and then making a decision based on all this data. The choice can be complicated because the underlying physical relationships within the POD need to be understood. For example, assume that a provider has purchased two Vblocks and connected them to the same aggregation switches; each Vblock has 64 blades installed, and the blades are organized into four ESX clusters in total. Two clusters are modeled with high oversubscription capability and two with lower oversubscription capability. A tenant requests an HTTP load balancer design pattern that needs the web servers to run on Gold tier storage but on a low-cost (highly oversubscribed) ESX cluster.

If the orchestrator/capacity manager simply looks at the service inventory/CMDB, it will determine that two clusters can support low cost through the oversubscription_type=high capability modeled in the inventory. The orchestrator/capacity manager can also identify which of the two ESX clusters can support the required workloads by consulting DRS recommendations, but this identifies only which compute building blocks will support the workload. Because this design pattern requires a Gold storage tier, the service inventory must be queried to understand which storage is attached to that ESX cluster and whether it has enough capacity to host the required number of .vmdk files. In addition, because the design pattern requires a session load balancer and neither Vblock supports a network-based session load balancer, the compute and associated storage must also be able to support an additional VM-based load balancer. If there were a third POD that did contain a load balancer but was connected to different compute and storage resources, would this POD make a better choice for the service? As more infrastructure capabilities are modeled, it is likely that the need for a true policy manager will evolve as the capabilities of the orchestrator/capacity manager are overhauled.
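
The chain of checks in this example can be sketched as a filter over the modeled inventory. Everything here except the `oversubscription_type` capability is an assumption made for illustration: the cluster names, the capacity figures, the `free_vm_slots` field standing in for a DRS-style answer, and the split of one high and one low oversubscription cluster per Vblock.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Cluster:
    """Hypothetical inventory record for one ESX cluster."""
    name: str
    oversubscription_type: str  # "high" or "low", as modeled at on-boarding
    storage_tier: str           # tier of the storage attached to the cluster
    free_storage_gb: int
    free_vm_slots: int          # stand-in for a dynamic placement answer
    network_lb: bool            # POD offers a network-based load balancer


@dataclass(frozen=True)
class Request:
    """Hypothetical tenant request for the load balancer design pattern."""
    web_servers: int
    vmdk_gb_each: int
    storage_tier: str
    oversubscription_type: str


def candidate_clusters(clusters: Iterable[Cluster], req: Request) -> List[str]:
    """Return the names of clusters that satisfy every constraint."""
    names = []
    for c in clusters:
        if c.oversubscription_type != req.oversubscription_type:
            continue
        if c.storage_tier != req.storage_tier:
            continue
        # Without a network-based load balancer, one extra VM is needed
        # to host a VM-based load balancer.
        vms = req.web_servers + (0 if c.network_lb else 1)
        if c.free_vm_slots < vms:
            continue
        if c.free_storage_gb < vms * req.vmdk_gb_each:
            continue
        names.append(c.name)
    return names


inventory = [
    Cluster("vblock1-high", "high", "gold", 500, 10, False),
    Cluster("vblock1-low", "low", "gold", 500, 10, False),
    Cluster("vblock2-high", "high", "gold", 100, 10, False),
    Cluster("vblock2-low", "low", "gold", 500, 10, False),
]
request = Request(web_servers=4, vmdk_gb_each=40,
                  storage_tier="gold", oversubscription_type="high")

matches = candidate_clusters(inventory, request)
```

With this invented inventory, the request needs five VMs and 200 GB once the VM-based load balancer is counted, so only `vblock1-high` qualifies; `vblock2-high` matches on capability but lacks the storage capacity. Each new modeled capability adds another condition to the loop, which is the pressure toward a dedicated policy manager that the text describes.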