John Chambers, the executive chairman and former CEO of Cisco Systems, once stated, "There are two types of companies: those who have been hacked, and those who don't yet know they have been hacked." [1] This statement may seem exaggerated until you look at recent research on cyber breaches and vulnerabilities. For example, the Verizon 2015 Data Breach Investigations Report stated [2] that 99% of exploited vulnerabilities were compromised more than a year after the associated Common Vulnerabilities and Exposures (CVE) reference [3] was published.

The defenses are available to stop malicious users from breaching systems, but those defenses are not being put in place. Why not? There are many reasons, such as human or system error, the vast range of devices and systems that are vulnerable, the time required to maintain defenses, changes in responsibilities for providing security, and so on. Even if your systems are up to date now, that situation can change immediately after you validate your patches, making the goal of being 100% secure a moving target. In summary, though defenders must strive to be 100% secure, that number is impossible to achieve and maintain, while attackers need just one mistake to get past those defenses.

Looking back at John Chambers' famous quote, he's asking all of us to assume that we've already been breached. If that's true, how should we provide security differently from the way we operate today? A good starting point is evaluating your current incident-response plan. Many employees aren't even sure what that plan is, or they lack the capabilities to respond properly to a breach. Best practice is to have the ability to Scope, Contain, and Remediate a security breach from a network and file viewpoint. Let's walk through how a typical breach operates and examine concepts to detect and prevent the attack. We'll start with how a typical attack could penetrate a network.

Attack Stage 1: Phishing for Victims

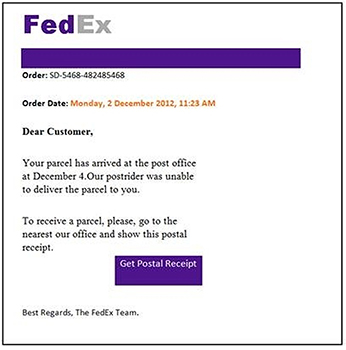

What keeps many chief security officers up at night is the idea that their organization has been breached. Seeing incidents that were prevented may raise some concerns; however, the real nightmare is what goes unseen yet is crawling around the network. An example of how this could happen: A user accesses a website that's designed to deliver malware, and that connection provides a backdoor to the internal network. In this example, the attack process starts with getting someone to access the malicious website. The approach could be through email or websites that entice the recipient to click a link. Common examples include a fake FedEx email (see Figure 1), websites that lure people with fantasy football stats, links on social media sites for "thank you" cards, and so on.

Figure 1 Example of fake FedEx email for a phishing attack.

Attack Stage 2: Identifying a Vulnerability

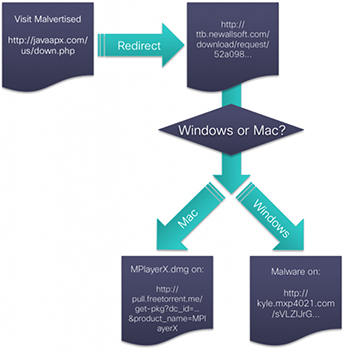

Once the victim accesses the malicious website, the victim's system is evaluated for vulnerabilities. The goal is to find a weakness that can be exploited so something unwanted can be delivered to the organization's internal network. From a defense point of view, this potential attack is where most administrators focus, using host and network security products to identify vulnerabilities and stop attempts to exploit weaknesses. Common systems to accomplish this goal include antivirus and intrusion-prevention tools, as well as different types of vulnerability scanners. However, as I stated earlier, if just one weakness is missed, or the security tools are unaware of an attack method, the attack will succeed in breaching the system. Figure 2 shows the step-by-step process of a successful breach: The user visits a malicious website, that website evaluates the user's system for weaknesses based on the operating system, and the attack delivers a dropper based on a specific exploit.

Figure 2 Example workflow of an attack against Windows and Mac systems.

Attack Stage 3: Delivering the Payload

Once a system is exploited, typically some type of remote access tool (RAT) is delivered so an attacker can access the network remotely. From there, the attacker can spread RATs across the network to establish a foothold on the target. Even if one RAT is remediated, the attacker now has many other doorways to access the network.

Best practice for preventing this level of compromise is having a breach-detection strategy that identifies unusual behavior and unknown threats. My team finds that many customers don't have breach-detection capabilities and therefore can't tell if somebody has breached their network defenses. This situation explains why we hear about companies having been compromised for years without knowing about the breach.

Figure 3 presents a summary example of the attack flow I just covered: A system accesses a website, the website exploits a weakness, a dropper is delivered to provide a backdoor, and the attacker can accomplish the goal of breaching the network.

Figure 3 Workflow showing steps involved in common network breaches.

Detecting the Breach

As we've noted, many organizations don't know that they've already been breached. The industry average for detecting a breach is 200 days (more than six months), which gives attackers plenty of time to accomplish their goals. The focus of a breach-detection strategy is not to prevent an attack but instead to shorten this detection time to something more reasonable, such as 40 hours, giving the victim enough time to stop a breach before any real damage is done.

Best practice for breach detection is to use network and file visibility to spot unusual behavior. The most common methods to monitor for network breaches are using NetFlow and capturing packets. Both approaches target traffic patterns, and both techniques have pros and cons. Capturing packets requires taps at specific spots, limiting visibility; this method also requires a large amount of storage for the data being analyzed, and that data must be reviewed. NetFlow requires a lot less storage, can be enabled network-wide on existing equipment, and can be processed for simple analysis of the relevant data. On the other hand, NetFlow records don't contain the actual packet contents, so this approach doesn't provide as much forensic detail as capturing packets.
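To make the storage trade-off concrete, here is a minimal sketch of how flow-style records summarize packets. The `Packet` type and `to_flows` function are hypothetical names used only for illustration; real NetFlow records carry additional fields (timestamps, interfaces, TCP flags) and are exported by the network equipment itself.

```python
from collections import defaultdict
from typing import NamedTuple


class Packet(NamedTuple):
    src: str
    dst: str
    src_port: int
    dst_port: int
    proto: str
    size: int        # bytes on the wire
    payload: bytes   # kept by packet capture, dropped by flow export


def to_flows(packets):
    """Collapse packets into one summary record per 5-tuple."""
    flows = defaultdict(lambda: {"packets": 0, "bytes": 0})
    for p in packets:
        key = (p.src, p.dst, p.src_port, p.dst_port, p.proto)
        flows[key]["packets"] += 1
        flows[key]["bytes"] += p.size
        # The payload is deliberately ignored: a flow record describes
        # who talked to whom and how much, not what was said.
    return dict(flows)


packets = [
    Packet("10.0.0.5", "203.0.113.9", 49152, 443, "tcp", 1500, b"x" * 1460),
    Packet("10.0.0.5", "203.0.113.9", 49152, 443, "tcp", 1500, b"y" * 1460),
    Packet("10.0.0.5", "10.0.0.7", 49153, 445, "tcp", 60, b""),
]
flows = to_flows(packets)
for key, stats in flows.items():
    print(key, stats)
```

Three packets become two small records. The conversation pattern survives, which is what behavior analysis needs, but the payload needed for deep forensics does not.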

Files should be monitored for unusual behavior so unknown threats can be captured. This design targets the "bad stuff" delivered when systems are exploited. Antivirus and other signature-based security products focus on known threats, so if something is unknown, they won't identify the breach. Best practice is looking for both known and unknown threats when examining files, as shown in Figure 4.

Figure 4 Best practice to identify a threat is looking for known and unknown threats.
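The limitation of signature-only detection can be sketched in a few lines. The `KNOWN_BAD` set and `triage` function are assumptions made for this example, with a SHA-256 digest standing in for a vendor signature; actual products use richer signatures than a single hash.

```python
import hashlib

# Hypothetical signature set: digests of files already known to be malicious.
KNOWN_BAD = {hashlib.sha256(b"known malware sample").hexdigest()}


def triage(content: bytes) -> str:
    """Label a file 'known-bad' on a signature match, otherwise 'unknown'."""
    digest = hashlib.sha256(content).hexdigest()
    if digest in KNOWN_BAD:
        return "known-bad"
    # No signature match does not mean safe; it means the file still
    # needs behavior-based monitoring.
    return "unknown"


print(triage(b"known malware sample"))            # exact copy of the sample
print(triage(b"known malware sample" + b"\x00"))  # same sample plus one byte
```

Appending a single byte is enough to change the digest, so the second file falls through to "unknown". That is the gap behavior-based file monitoring is meant to cover.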

Example of Defending Against a Breach

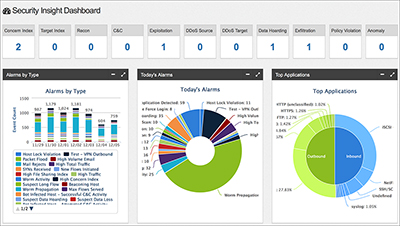

Imagine that you're the administrator of a large firm that has just been hit with a breach similar to those covered earlier. One of your users went to a malicious website, that user's system was exploited, and a RAT was dropped, providing a backdoor for a malicious party to infiltrate your internal network. Your antivirus, firewalls, and IPS are not triggering any alarms, yet your NetFlow tool alerts you to a lot of unusual behavior from a specific user that has now been seen on a few systems following a timeline. Figure 5 shows some alarms in a NetFlow solution that identify unusual behavior an administrator should investigate.

Figure 5 Cisco/Lancope's StealthWatch dashboard showing potential threats.

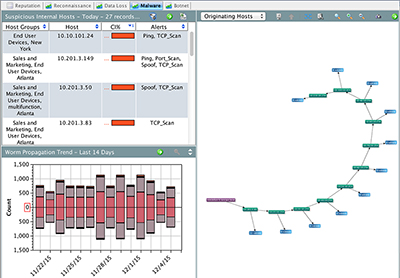

The purpose of using NetFlow for security is to monitor for unusual behavior that other security technologies fail to notice. This method works by establishing a baseline of the network, meaning that you know what's normal, and watching for anomalies. Specific alarms may not indicate a threat if they don't constitute an attack signature; however, security-based NetFlow tools stack various concerns, making it more likely that you'll identify an incident that's actually a threat. In this example, behavior such as systems starting to scan the network for other systems, unauthorized VPN tunnels being established back to the attacker, data leaving the network in an unusual manner, and so on would combine to trigger a high-level breach event. Figure 6 shows the spreading of a threat based on many factors purely seen with NetFlow.

Figure 6 StealthWatch showing a worm, based on NetFlow behavior.
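The idea of baselining and stacking concerns can be sketched as follows. This is not how StealthWatch or any specific product computes its alarms; the metric names, weights, and threshold are all hypothetical, and a simple standard-deviation test stands in for whatever anomaly model a real tool uses.

```python
from statistics import mean, stdev


def is_anomalous(history, current, threshold=3.0):
    """True if `current` sits more than `threshold` standard deviations from the baseline."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is unusual.
        return current != mu
    return abs(current - mu) / sigma > threshold


# Hypothetical weights: how much each unusual behavior adds to the score.
WEIGHTS = {"hosts_contacted": 40, "bytes_out_mb": 30, "vpn_tunnels": 50}
ALARM_THRESHOLD = 70  # hypothetical cutoff for a high-level breach event


def stacked_score(baseline, observed):
    """Add up the weights of every metric that deviates from its baseline."""
    return sum(
        weight
        for metric, weight in WEIGHTS.items()
        if is_anomalous(baseline[metric], observed[metric])
    )


baseline = {
    "hosts_contacted": [12, 15, 11, 14, 13],   # per day, for one host
    "bytes_out_mb": [200, 220, 210, 190, 205],
    "vpn_tunnels": [0, 0, 0, 0, 0],
}
observed = {"hosts_contacted": 250, "bytes_out_mb": 215, "vpn_tunnels": 1}

score = stacked_score(baseline, observed)
print(score, score >= ALARM_THRESHOLD)
```

In this made-up data, neither the scanning-like spike in hosts contacted nor the new VPN tunnel would cross the threshold alone, but together they do, while the ordinary outbound volume contributes nothing.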

The other way to monitor for a breach is by validating all files across the network. The concept is that attackers need a tool to breach your network, which comes in the form of some type of file. These files are typically the RATs referred to earlier in the attack workflow, but they can exhibit many other unwanted behaviors, such as encrypting your hard drive and later holding it for ransom. In this example, files changing names (polymorphism), changing size with junk data (encoding), modifying access rights (privilege escalation), and so on should all indicate that the associated file is a possible threat.
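As a rough illustration of watching for those file behaviors, the sketch below compares two snapshots of the same file. `FileSnapshot` and `suspicious_changes` are hypothetical names, and the checks are deliberately naive: legitimate files also get renamed and resized, so a real product would weigh far more context before raising an alarm.

```python
from dataclasses import dataclass


@dataclass
class FileSnapshot:
    name: str
    size: int   # bytes
    mode: int   # POSIX permission bits, e.g. 0o644


def suspicious_changes(before: FileSnapshot, after: FileSnapshot) -> list:
    """List the behaviors from the text that occurred between two snapshots."""
    findings = []
    if after.name != before.name:
        findings.append("renamed")
    if after.size != before.size:
        findings.append("size changed")
    # Any permission bit set now that was not set before.
    if after.mode & ~before.mode:
        findings.append("permissions widened")
    return findings


before = FileSnapshot("invoice.pdf.exe", 48_128, 0o644)
after = FileSnapshot("svch0st.exe", 61_440, 0o755)
print(suspicious_changes(before, after))
```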

Identifying a threat on an endpoint is important; however, in order to properly Scope, Contain, and Remediate the threat, you must be able to identify all systems that have the infection. This is a problem with many endpoint and file-based security solutions that don't integrate with other technologies on the network. If one endpoint alerts administrators that a potentially malicious file is installed, the same infection could be on other systems. The key is knowing which systems are infected, how the malicious files got into the network, and what can be done to avoid a future breach.

Best practice for remediating a breach is having the network and file breach-detection solutions working together, along with other security technologies such as perimeter defenses. With this approach, if an endpoint alerts administrators to a potential breach, an associated fingerprint can be run across the network to identify other infected systems, along with tracing back to when the fingerprint was first seen on the network.

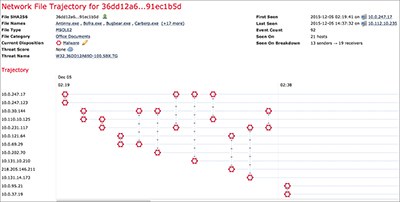

Figure 7 showcases the blend of network and file breach detection by using a hash of an identified infected file and mapping it across the network all the way back to the initial system that was compromised. As a next step, the administrators could audit the first system that was infected, identifying what weakness was exploited, in order to avoid future compromises.

Figure 7 Example of mapping an infected file across the network using Cisco FirePOWER.
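The tracing step can be sketched as a search over file sightings. This is a minimal illustration, not the FirePOWER implementation: the `Sighting` record, the `trace` function, and the host names are all hypothetical, and the sketch assumes every endpoint reports the hash and time of each file it sees.

```python
from datetime import datetime
from typing import NamedTuple


class Sighting(NamedTuple):
    host: str
    sha256: str
    seen_at: datetime


def trace(sightings, bad_hash):
    """Return (host, first_seen) pairs for the hash, earliest infection first."""
    first_seen = {}
    for s in sightings:
        if s.sha256 != bad_hash:
            continue
        if s.host not in first_seen or s.seen_at < first_seen[s.host]:
            first_seen[s.host] = s.seen_at
    return sorted(first_seen.items(), key=lambda item: item[1])


BAD = "a" * 64  # placeholder digest for the identified threat

sightings = [
    Sighting("hr-laptop-07", BAD, datetime(2015, 6, 3, 14, 5)),
    Sighting("fileserver-01", BAD, datetime(2015, 6, 3, 16, 40)),
    Sighting("hr-laptop-07", BAD, datetime(2015, 6, 4, 9, 0)),
    Sighting("dev-ws-12", "b" * 64, datetime(2015, 6, 3, 13, 0)),
    Sighting("sales-pc-03", BAD, datetime(2015, 6, 2, 11, 15)),
]

timeline = trace(sightings, BAD)
for host, when in timeline:
    print(when.isoformat(), host)
if timeline:
    print("Audit first:", timeline[0][0])
```

The first entry in the timeline is the system to audit for the weakness that was exploited; the remaining entries define the scope to contain.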

In this example, a user's system was exploited when accessing a malicious website. The RAT file that was delivered to the system would behave unlike normal files, for example by scanning for other systems to infect and establishing an unauthorized tunnel to the attacker sitting outside the network. That file would also attempt to spread internally, creating other access points for the remote attacker. The file breach-detection technology should raise an alarm on this behavior, remove the file, and alert administrators.

The administrators should follow up the remediation of the infected system by running a scan for the hash of the recently identified threat across the network, looking to identify any other systems that have been infected. The system with the earliest time of infection should be researched with inside-perimeter security products such as an application-layer firewall or content filter to identify the websites visited around the time of infection, as well as with other assessments such as a vulnerability scan. The administrators could attempt to prevent a future breach by updating all signature-based security products with the hash of the recently identified threat, blacklisting any suspicious websites associated with the system that was infected, and patching any vulnerabilities identified while auditing the infected systems.
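Identifying the websites visited around the time of infection amounts to filtering web logs by host and time window. The sketch below assumes a simplified log of `(timestamp, host, domain)` tuples; the function name, the ten-minute window, and the example domains are hypothetical, and a real firewall or content filter will have its own log format.

```python
from datetime import datetime, timedelta


def sites_near_infection(proxy_log, host, infected_at, window=timedelta(minutes=10)):
    """Domains the host visited within `window` of the infection time."""
    return sorted({
        domain
        for ts, src, domain in proxy_log
        if src == host and abs(ts - infected_at) <= window
    })


proxy_log = [
    (datetime(2015, 6, 2, 11, 9), "sales-pc-03", "fantasy-stats.example"),
    (datetime(2015, 6, 2, 11, 14), "sales-pc-03", "cdn.fantasy-stats.example"),
    (datetime(2015, 6, 2, 9, 30), "sales-pc-03", "intranet.example"),
    (datetime(2015, 6, 2, 11, 12), "hr-laptop-07", "news.example"),
]

candidates = sites_near_infection(
    proxy_log, "sales-pc-03", datetime(2015, 6, 2, 11, 15)
)
print(candidates)
```

The resulting domains are candidates for review and blacklisting, not proof of guilt; an administrator would still need to confirm which one delivered the exploit.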

Final Thoughts

The main point of this article is to explain why you can't be 100% secure, and why you therefore need to build a security strategy around incident response. The odds are that something will be vulnerable and exposed to an attacker who will breach your network. The attack example used in this article is just one of the many threats you should expect to encounter. Best practice for detecting a breach is looking across the network for unusual behavior as well as validating all files. This approach gives you the ability to flag anything that doesn't match normal behavior, so you can shorten the time needed to detect and remediate a breach of your network.

Traditional security solutions such as firewalls, antivirus, network segmentation, intrusion detection, and so on are all crucial to securing your network. The key is to go one step further by having tools that detect breaches, or you may never know about a breach that is currently extracting data from your network.

References

[1] John Chambers, "What does the Internet of Everything mean for security?" World Economic Forum, January 21, 2015.

[2] Verizon 2015 Data Breach Investigations Report, p. 15.

[3] The Common Vulnerabilities and Exposures (CVE) reference is compiled and published by the MITRE Corporation.