Life of a Packet on a CSR 1000V: The Data Plane

Before we get into the details of packet flow for the CSR 1000V, it is important to understand the drivers that make it possible for the CSR VM to talk to physical devices and other software modules. These drivers act as software glue, relaying a packet to and from the physical wire. We have touched on the different hypervisors that enable the CSR VM to work on various x86 architectures. Here we discuss packet flow to and from a CSR VM.

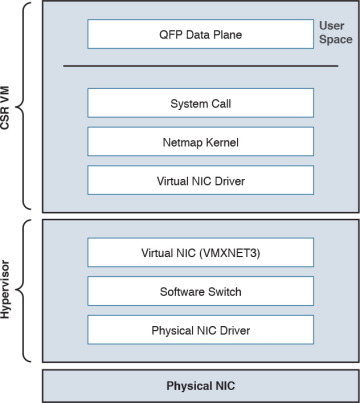

Figure 4-5 shows the virtualization layers of a CSR 1000V VM.

Figure 4-5 CSR VM Layers

From Figure 4-5, you can see that the hypervisor presents a virtual NIC to its guest VM by using a driver. This driver can be either a para-virtualized driver (for example, VMXNET3) or an emulated driver that mimics real hardware (for example, e1000). Para-virtualized drivers are native to hypervisors and perform much better than emulated drivers. Hypervisors still support emulated drivers because full virtualization requires them: recall from Chapter 1, “Introduction to Cloud,” that in full virtualization, guest operating systems do not require any support from the hypervisor kernel and run as though on real hardware. The CSR VM supports para-virtualized drivers only.

Netmap I/O

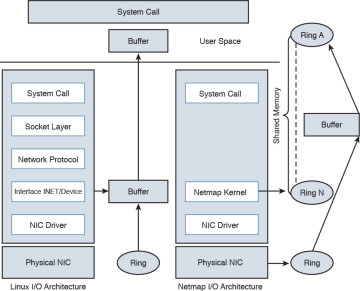

Netmap is an open-source I/O infrastructure package that enables the CSR VM to bypass the multiple software layers in the traditional Linux networking stack I/O model. This results in faster I/O. Understanding the Netmap I/O model will help you better understand packet flow to and from a CSR VM, so this section provides an overview of the Netmap I/O model and compares it with the Linux I/O model before drilling down to packet flow.

Netmap is designed to strip down software layers and get the frame from the wire to the data plane process in user space as quickly as possible. Netmap achieves this through the four building blocks of its I/O architecture:

Thin I/O stack—Netmap bypasses the Linux networking stack to reduce overhead. Because the CSR data plane runs in user space, it relies on Netmap’s thin I/O stack to deliver receive (Rx) frames from the NIC to the data plane and transmit (Tx) frames from the data plane to the NIC.

Zero copy—Netmap maps all memory from rings (pool of memory buffers) in a way that makes them directly accessible in the data plane (user space). Hence there is no copy involved in getting the information to the user space. Preventing a copy operation saves a lot of time in an I/O model, and Netmap’s zero-copy model is very effective at increasing performance compared to a traditional Linux I/O model.

Simple synchronization—The synchronization mechanism in Netmap is extremely simple. When you have the Rx packets on the ring, Netmap updates the count of new frames on the ring and wakes up threads that are sleeping to process the frames. On the Tx side, the write cursor is updated as a signal to announce the arrival of new frames on the Tx ring. Netmap then flushes the Tx ring.

Minimal ring manipulation—In the Netmap I/O architecture, the ring is sized such that the producer accesses the ring from the head end, while the consumer accesses it from the tail. (The producer is the side that places new entries on the ring; the consumer is the side that removes and services them.) The producer and the consumer can access the ring simultaneously. In a regular Linux I/O scenario, by contrast, you have to wait for the host to fill up the ring with pointers to buffers. When the ring is being serviced, Linux detaches the buffers from the ring and then replenishes the ring with new pointers.
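To make the zero-copy and head/tail ideas concrete, here is a minimal sketch in Python. It is not Netmap code: the `Ring` class and its method names are hypothetical, and a `bytearray` stands in for the buffer memory that Netmap maps into user space. The consumer receives a view into the shared pool rather than a copy, and the producer and consumer each advance only their own index.

```python
class Ring:
    """Toy ring (hypothetical, for illustration): fixed slots over one shared pool."""

    def __init__(self, num_slots: int, slot_size: int) -> None:
        self.num_slots = num_slots
        self.slot_size = slot_size
        # One preallocated pool stands in for memory mapped into user space.
        self.pool = bytearray(num_slots * slot_size)
        self.lengths = [0] * num_slots
        self.head = 0  # next slot the producer fills
        self.tail = 0  # next slot the consumer reads

    def _slot(self, index: int) -> memoryview:
        start = index * self.slot_size
        return memoryview(self.pool)[start:start + self.slot_size]

    def produce(self, frame: bytes) -> bool:
        """Place a frame at the head; return False if the ring is full."""
        if len(frame) > self.slot_size:
            raise ValueError("frame larger than slot")
        next_head = (self.head + 1) % self.num_slots
        if next_head == self.tail:  # one slot is kept empty to signal "full"
            return False
        self._slot(self.head)[:len(frame)] = frame
        self.lengths[self.head] = len(frame)
        self.head = next_head
        return True

    def consume(self):
        """Return a view (not a copy) of the frame at the tail, or None if empty."""
        if self.tail == self.head:
            return None
        view = self._slot(self.tail)[:self.lengths[self.tail]]
        # Simplification: the slot is released immediately; a real consumer
        # would release it only after it has finished with the frame.
        self.tail = (self.tail + 1) % self.num_slots
        return view


ring = Ring(num_slots=4, slot_size=64)
ring.produce(b"frame-1")
ring.produce(b"frame-2")
view = ring.consume()
print(bytes(view))  # b'frame-1'
```

Because `consume` returns a `memoryview` over the pool, reading the frame does not duplicate its bytes, which is the property the zero-copy building block describes.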

Figure 4-5, shown earlier, gives an overview of the software layers involved in building a CSR 1000V VM. Figure 4-6 compares the Linux I/O model with the Netmap I/O model.

Figure 4-6 Linux Versus Netmap I/O Comparison

Packet Flow

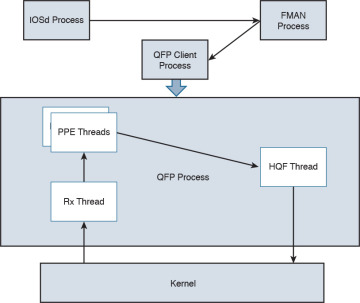

There are three major data plane components:

Rx thread

Tx thread

HQF (Hierarchical Queuing Framework) thread

All these components run in a single process, under the QFP process umbrella. Multiple PPE threads serve requests within this QFP process. The following sections discuss the flow.

Device Initialization Flow

The following events take place to get the NIC (or vNIC, in a para-virtualized environment) ready for operation:

During boot-up, the platform code within IOSd discovers all Linux network interfaces. The platform code then maps these Linux interfaces—eth0, eth1, and so on—to Gig0, Gig1, and so on. After talking to the kernel, platform code sets up the interface state (up or down), sets the MTU, sets the ring size, and sets the MAC address.

The FMAN process creates the FMAN interfaces and then reaches out to the QFP client process to initialize the data-plane interface.

After the QFP process receives the initialization message from the QFP client process to create an interface, it initializes an interface structure called a micro-interface descriptor block (uIDB) in the data plane.

After the uIDB is created in the QFP process, the FMAN process binds this uIDB to the network interface name.

The component of the data-plane process responsible for interacting with the kernel then makes sure that the interface created in the QFP process is registered and enabled within the Netmap component of the kernel.

With the new interfaces registered, the Netmap component communicates with the virtual NIC driver to initialize the physical NIC.

The vNIC driver opens the NIC, initializes the rings, and makes the NIC ready for operation.
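The initialization sequence above can be sketched as an ordered series of handoffs. This is only an illustration of the ordering described in the steps: the `Interface` class, `map_interfaces`, `bring_up`, and the event strings are hypothetical names, not IOS XE internals.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Interface:
    linux_name: str
    ios_name: str
    uidb: Optional[int] = None
    netmap_registered: bool = False
    nic_ready: bool = False


def map_interfaces(linux_names: List[str]) -> List[Interface]:
    """Map eth0, eth1, ... to Gig0, Gig1, ...; other interfaces are ignored."""
    eth = [n for n in linux_names if n.startswith("eth") and n[3:].isdigit()]
    eth.sort(key=lambda n: int(n[3:]))
    return [Interface(n, f"Gig{int(n[3:])}") for n in eth]


def bring_up(interfaces: List[Interface]) -> List[str]:
    """Walk each interface through the steps in order and log each handoff."""
    events = []
    for uidb, intf in enumerate(interfaces, start=1):
        events.append(f"IOSd: {intf.linux_name} mapped to {intf.ios_name}; "
                      "state, MTU, ring size, MAC set")
        events.append(f"FMAN: created {intf.ios_name}; asked QFP client to initialize")
        intf.uidb = uidb  # uIDB numbering here is an assumption for illustration
        events.append(f"QFP: uIDB {uidb} created")
        events.append(f"FMAN: uIDB {uidb} bound to {intf.linux_name}")
        intf.netmap_registered = True
        events.append(f"Netmap: {intf.linux_name} registered and enabled")
        intf.nic_ready = True
        events.append(f"vNIC driver: {intf.linux_name} opened, rings initialized")
    return events


for line in bring_up(map_interfaces(["lo", "eth1", "eth0"])):
    print(line)
```

The point of the sketch is the ordering: the data-plane interface (uIDB) exists and is bound to a name before Netmap registration, and the vNIC rings are set up last.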

Tx Flow

The following events take place when there is a packet to be transmitted (Tx) by the CSR onto the wire:

The HQF thread detects that there are packets to be sent.

The HQF thread checks for congestion on the transmit interface and checks the interface state.

If the transmit interface is not congested, HQF sends the frame. HQF can also wait to accumulate more frames, batch them, and then send them out.

The platform code locates the next available slot in the Tx ring and copies the frame from the source buffer into the Netmap buffer for transmission.

The platform code flushes the Tx ring.

Netmap forwards the flushed frames to the vNIC driver.

The vNIC driver initializes the NIC Tx slots.

The vNIC driver writes onto the Tx registers.

The vNIC driver reclaims the completed slots from the Tx ring.

The vNIC sends the frame on the wire and generates a notification on completion.
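The Tx steps above can be sketched as a small simulation. The `TxPath` class and `hqf_send` function are hypothetical names, and the congestion test (ring occupancy against a slot count) is an assumption made for illustration; the source does not say how HQF measures congestion.

```python
from collections import deque


class TxPath:
    """Toy Tx ring plus a 'wire' list standing in for the vNIC (hypothetical)."""

    def __init__(self, ring_slots: int = 4) -> None:
        self.ring_slots = ring_slots
        self.tx_ring = []  # frames copied into Tx slots, awaiting a flush
        self.wire = []     # frames the vNIC has sent

    def congested(self) -> bool:
        return len(self.tx_ring) >= self.ring_slots

    def enqueue(self, frame: bytes) -> None:
        # Platform code copies the frame from the source buffer into the ring.
        self.tx_ring.append(bytes(frame))

    def flush(self) -> int:
        # Flushing hands the frames to the driver, which sends them and
        # then reclaims the completed slots.
        sent = len(self.tx_ring)
        self.wire.extend(self.tx_ring)
        self.tx_ring.clear()
        return sent


def hqf_send(pending: deque, tx: TxPath, batch_size: int = 2) -> int:
    """Batch up to batch_size frames if not congested, then flush once."""
    queued = 0
    while pending and queued < batch_size and not tx.congested():
        tx.enqueue(pending.popleft())
        queued += 1
    return tx.flush() if queued else 0


pending = deque([b"frame-1", b"frame-2", b"frame-3"])
tx = TxPath()
print(hqf_send(pending, tx))  # 2
print(hqf_send(pending, tx))  # 1
```

Flushing once per batch rather than once per frame mirrors the batching option mentioned in the steps: the cost of handing work to the driver is shared across several frames.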

Rx Flow

The following events occur whenever a CSR receives a packet to be processed:

The Rx thread (the thread in the QFP process that receives frames) issues a poll system call to wait for new Rx frames.

When a new frame arrives, the NIC (or vNIC, in this case) accesses the vNIC Rx ring to get a pointer to the next Netmap buffer.

The vNIC puts the frame into that Netmap buffer.

The vNIC generates an Rx interrupt.

The Netmap Rx interrupt service routine wakes up the Rx thread.

The vNIC driver finds the new frame and creates memory buffers for it.

The Rx thread pushes the frame to the PPE thread for processing.
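The wait-and-wake pattern in the Rx steps can be illustrated with an ordinary `poll` call. This sketch assumes a Unix-like system (Python's `select.poll` is not available on Windows) and uses a pipe as a stand-in for the Netmap file descriptor; `rx_once` and `ppe_queue` are hypothetical names.

```python
import os
import select
from collections import deque

ppe_queue = deque()  # stands in for the handoff to a PPE thread


def rx_once(read_fd: int, timeout_ms: int = 1000):
    """Wait in poll() for a frame; read it and push it on for processing."""
    poller = select.poll()
    poller.register(read_fd, select.POLLIN)
    for fd, event in poller.poll(timeout_ms):
        if event & select.POLLIN:
            frame = os.read(fd, 2048)
            ppe_queue.append(frame)
            return frame
    return None  # timed out with nothing to receive


read_fd, write_fd = os.pipe()
print(rx_once(read_fd, timeout_ms=0))  # None
os.write(write_fd, b"frame-1")         # plays the role of frame arrival
print(rx_once(read_fd))                # b'frame-1'
os.close(read_fd)
os.close(write_fd)
```

The Rx thread does no work while it waits in `poll`; it runs only when the descriptor becomes readable, which corresponds to the interrupt-driven wakeup in the steps above.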

Figure 4-7 illustrates packet flow between different XE processes.

Figure 4-7 Flowchart for Packet Flow

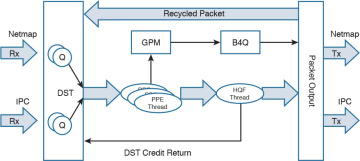

Unicast Traffic Packet Flow

The Tx and Rx flows illustrated in Figure 4-7 detail how a packet travels between the NIC (or vNIC, in a para-virtualized environment) and the QFP process. Now we can look at how the QFP process handles the packet after receiving it. The following steps examine a unicast IPv4 packet flow:

The QFP process receives the frame from the Netmap Rx and stores it in Global Packet Memory (GPM).

The Dispatcher copies the packet header from the GPM and looks for a free PPE to assign. The packet remains in the GPM while it is being processed by the PPEs.

The Dispatcher assigns a free PPE thread to process the features on the packet.

The PPE threads process the packet and then gather it. The gather process copies the packet into B4Q memory and notifies the HQF thread that there is a new packet in B4Q memory.

HQF sends the packet by copying it from B4Q into the Netmap Tx ring, and then releases the B4Q buffer.

The Ethernet driver sends the frame and frees the Tx ring once the packet has been sent out.

Multicast IPsec packets are recycled from the HQF thread back to the in/out processing of the PPE threads.
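The buffer handoffs in the unicast steps above can be sketched as a short pipeline. This is a toy model, not QFP code: `qfp_forward` is a hypothetical name, a dictionary stands in for GPM, a deque for B4Q, and a list for the Netmap Tx ring. Feature processing is left as a placeholder, and releasing the GPM entry at gather time is an assumption the source does not state.

```python
from collections import deque
from typing import List, Tuple


def qfp_forward(frames: List[bytes], num_ppes: int = 2,
                header_len: int = 14) -> List[Tuple[int, bytes, bytes]]:
    """Return (ppe_id, header, packet) for each frame placed on the Tx ring."""
    gpm = {}                             # Global Packet Memory: id -> packet
    b4q = deque()                        # packets gathered for the HQF thread
    free_ppes = deque(range(num_ppes))   # idle PPE threads
    tx_ring = []

    for pkt_id, frame in enumerate(frames):
        gpm[pkt_id] = frame                   # Rx stores the frame in GPM
        header = gpm[pkt_id][:header_len]     # Dispatcher copies only the header
        ppe = free_ppes.popleft()             # Dispatcher assigns a free PPE
        # Feature processing on the header would happen here (placeholder).
        b4q.append((ppe, header, bytes(gpm[pkt_id])))  # gather: copy into B4Q
        del gpm[pkt_id]                       # assumption: GPM entry freed here
        free_ppes.append(ppe)                 # PPE becomes available again
        tx_ring.append(b4q.popleft())         # HQF copies to Tx ring, frees B4Q
    return tx_ring


result = qfp_forward([b"\x01" * 20, b"\x02" * 20])
print([ppe for ppe, _, _ in result])  # [0, 1]
```

The sketch processes one packet at a time for clarity; in the flow described above, several PPE threads work on different packets concurrently while the full packets wait in GPM.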

Figure 4-8 illustrates the packet flow in the QFP process.

Figure 4-8 CSR 1000V Packet Flow in the QFP Process