In this chapter, we describe the main use cases where NetDevOps excels. For each use case, we explain what it is, how it is handled in traditional networking, and how the NetDevOps approach handles it and what benefits that brings. We also describe common decisions in the adoption of NetDevOps, such as tooling choices, skills required, and possible starting points. This chapter finishes with lessons learned from many NetDevOps adoption processes: common challenges and mitigations. In summary, you will learn the following about NetDevOps:

What does it solve

How does it solve it

Possible starting points

Decisions and investments

Common pitfalls and recommendations

Use Cases

In Chapter 1, “Why Do We Need NetDevOps?”, you learned what NetDevOps is, its benefits, and its components. In this section, you will learn what specific use cases you can benefit from by applying NetDevOps practices and tools.

More specifically, this section goes into detail on each individual use case NetDevOps can help you with. Although this is an extensive list, as you can see in Figure 2-1, it is possible that not all use cases are represented here.

FIGURE 2-1 NetDevOps Use Cases Mind Map

The use case deep dives focus on the stages a typical continuous integration/continuous delivery/deployment (CI/CD) pipeline should have rather than on the automation scripts or infrastructure as code (IaC) that performs the actual actions. The reason for this choice is that we consider network automation a well-documented topic that is highly dependent on tool choices and desired automated actions, and we want you to learn the orchestration process behind each use case while keeping this chapter’s stages and practices mostly tool and technology agnostic.

These use cases are focused on the usage perspective. You can and should have CI/CD pipelines to merge developed code into your source control, but those are not our focus. The pipelines described here are the ones that you, someone from your team, or an automatic trigger would start when you need to perform an action in your network, not the pipelines that are triggered when you modify your automation code.

Triggers are a topic we will dive into in Chapter 3, “How to Implement CI/CD Pipelines with Jenkins.” However, for this chapter, it is important to understand that pipelines can be triggered in many different ways: manually by a user, automatically by a change in a code repository, automatically in response to an event (for example, a high % of CPU), and so on. Throughout this chapter, you will see mentions of possible triggers for each use case.

Provisioning

Provisioning, which is often confused with configuring, is the process of setting up the IT infrastructure. Infrastructure in this context can mean many things: virtual machines, containers, virtual network devices, services, and so on. However, when you want to create a virtual local area network (VLAN) and consult the documentation, you will often see “Configuring a VLAN.” That’s because configuring comes after provisioning; when you configure a VLAN, the switch has already been provisioned.

In a physical environment, you provision a switch (cabling, racking, and stacking) and then configure it (VLANs, IP addresses, interfaces, and so on). In a virtual environment, you provision a virtual machine (number of CPUs, amount of RAM, or amount of storage) and then configure it (installing a software package, patching a security vulnerability, and so on) or you provision a virtual switch (virtual-to-physical port mappings) in a hypervisor environment and then configure port groups (VM-to-VLAN mappings).

In this book, provisioning refers to creating resources in networking environments. Mostly this happens in cloud environments—not necessarily only in public cloud environments, such as Amazon Web Services, Google Cloud Platform, and Microsoft Azure, which are the more famous options, but in any cloud environment, such as the less popular private clouds like Red Hat OpenStack and VMware environments.

These provisioning actions can be carried out through different interfaces, such as command-line interfaces (CLIs), graphical user interfaces (GUIs), and application programming interfaces (APIs). In our NetDevOps context, and because automation is one of our core components, you will more often see an API as the default choice.

A traditional networking provisioning workflow is usually orchestrated as a series of manual steps executed by one or more individuals. This workflow is achieved either manually, by clicking through the GUI for simpler actions, or manually, by executing a series of automation scripts that make use of the product’s API or CLI.

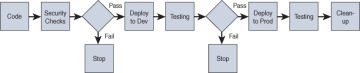

So, what does a typical provisioning NetDevOps pipeline look like and how does it differ from a traditional one? Figure 2-2 shows an example. This pipeline starts by retrieving the required code from a code repository, which is a version control system (VCS). In this repository, you will likely find the automation code to perform the provisioning action, which can be implemented by a variety of different tools (for example, Ansible, Terraform, Python, Unix shell, and so on), but also some specific variables required for this code to run. In concrete terms, this could be a generic Ansible playbook to replace the running-config on an IOS-XE switch and a variable file with the specific running-config to push.

FIGURE 2-2 Provisioning Pipeline

For some automation workflows, there will be no variables file, either because it is a static workflow without any possible variation (for example, a script that saves the running-config to the startup-config in all your network switches) or because the pipeline prompts the user for the required variables at the time of execution. We call these “runtime variables” because they are provided at runtime.

The second stage, labeled “Security Checks,” is a security verification stage. This is often ignored, not because people do not like security, but because it is, in many cases, hard to implement. In this stage, you verify that the combination of automation plus variables does not violate any security policy in a static way (“static” because you do not execute this automation to make this verification). Some tools can make this verification easier (for example, tfsec for Terraform). A common verification for cloud environments is to make sure the firewall rules are not open to the world.
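The "not open to the world" verification mentioned above can be sketched as a static check over rule definitions. This is a minimal illustration, not the output format of tfsec or any cloud provider: the rule dictionary layout (`name`, `action`, `source`) and the function name `find_open_rules` are assumptions made for the example.

```python
import ipaddress


def find_open_rules(rules):
    """Return the names of allow rules whose source covers every address."""
    open_rules = []
    for rule in rules:
        # Hypothetical rule format: {"name": ..., "action": ..., "source": "<CIDR>"}.
        network = ipaddress.ip_network(rule["source"], strict=False)
        # A prefix length of 0 (0.0.0.0/0 or ::/0) matches any source address.
        if rule["action"] == "allow" and network.prefixlen == 0:
            open_rules.append(rule["name"])
    return open_rules


rules = [
    {"name": "web-in", "action": "allow", "source": "0.0.0.0/0"},
    {"name": "mgmt-in", "action": "allow", "source": "10.0.0.0/8"},
]
violations = find_open_rules(rules)
print(violations)
```

A pipeline stage could fail the run whenever the returned list is not empty, so that nothing is executed against any environment.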

The third stage, “Deploy to Dev,” is also a stage often missing from many production implementations. This is where you execute the automation code against a test/development/staging environment. This stage is meant to be executed against an environment that mirrors your production environment to give you confidence before you possibly make environment-breaking changes. Because of costs, historical reasons, or other factors, as we discuss later in this chapter, this stage is often skipped.

The pipeline’s development deployment stage is followed by testing that is more thorough than static analysis. In this stage, you can go as deep as you want with your testing, from the simple “are the resources created with my parameters?” test to actual functional testing of the created resources. This stage serves as a decision point: either continue and provision the same resources in your production environment, or stop if you discover unexpected behavior.

Assuming you did not abort, it is time for the production deployment stage. In this stage, you execute the same code as you did in the development environment but with a different target—production. This should have the exact same effect, and hopefully you now understand the value of the previous stage and the added assurance you get from having a testing environment.

Although you tested in the staging environment, the following stage involves testing in production. Here, you often do even more thorough testing and include some end-to-end tests that you could not run in your testing environment. Testing environments are commonly smaller in size and complexity, so some tests can only be run in production. Likewise, load tests (meaning putting a resource under simulated demand) are typically run in this stage.

The last stage is the clean-up stage, and it is optional. In this stage, you often clean up your testing environment (if it is virtual). However, if you have a static testing environment that mimics your production environment, you would not delete it in this stage.

Using Terraform to provision a tenant in Cisco Application Centric Infrastructure (ACI) is a concrete implementation of this use case. First, as shown in Example 2-1, you need to have Terraform code to implement a Cisco ACI tenant. With this code in a code repository, you can create a CI/CD pipeline with the previously mentioned stages.

Example 2-1 Source Code for aci_tenant.yml

In the first stage, you check out the code from the code repository. In the second stage, you can use a script to verify whether the tenant’s name complies with your naming standards (for example, whether it uses CamelCase), or you can use tfsec to verify that the security configurations of the tenant are compliant with best practices.
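The naming-standard script mentioned for the second stage could look like the following sketch. The exact convention is an assumption: here "CamelCase" is taken to mean upper camel case with letters and digits only, and `is_valid_tenant_name` is a hypothetical helper name.

```python
import re

# Assumed convention: UpperCamelCase, letters and digits only, e.g. "ProdTenant1".
CAMEL_CASE = re.compile(r"(?:[A-Z][a-z0-9]+)+")


def is_valid_tenant_name(name):
    """Return True when the whole name follows the assumed CamelCase rule."""
    return CAMEL_CASE.fullmatch(name) is not None


for candidate in ("ProdTenant", "prod_tenant"):
    print(candidate, is_valid_tenant_name(candidate))
```

If your standard allows acronyms or a lowercase first letter, the pattern would need to change accordingly.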

In the third stage, you execute your Terraform code against a test/development/staging Application Policy Infrastructure Controller (APIC) using the Terraform syntax:

$ terraform apply aci_tenant.yml

In the fourth stage, you verify that the new tenant was successfully created and nothing broke. Your ACI fabric should still be working as expected. If that is the case, your fifth stage should apply your Terraform code to your production APIC. You can use environment variables to choose what environment to deploy to in each stage, or you can use other techniques, as you will see in Chapter 6, “How to Build Your Own NetDevOps Architecture.”
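As a rough sketch of the environment-variable technique, each pipeline stage could set a variable and the automation could read it to pick its target. The variable name `TARGET_ENV` and both controller URLs are hypothetical placeholders, not values from this book.

```python
import os

# Hypothetical controller URLs; replace them with your own.
APIC_URLS = {
    "dev": "https://apic-dev.example.com",
    "prod": "https://apic-prod.example.com",
}


def select_apic_url(environ=os.environ):
    """Pick the APIC URL from the TARGET_ENV variable, defaulting to dev."""
    target = environ.get("TARGET_ENV", "dev")
    if target not in APIC_URLS:
        raise ValueError(f"Unknown TARGET_ENV: {target!r}")
    return APIC_URLS[target]


print(select_apic_url({"TARGET_ENV": "prod"}))
```

Defaulting to the development target is a deliberate choice in this sketch: a stage that forgets to set the variable never touches production by accident.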

In the sixth stage, you verify your production environment after provisioning your new tenant.

Normally, APICs are long-lasting appliances and are not spun up and spun down; instead, they run continuously. Because of that, you would not have the last optional stage to delete your test environment.

Note that error handling was not mentioned in this example of a typical CI/CD provisioning pipeline. If any of the stages fail, the pipeline would stop and human intervention would be required to set it right. There are different ways to handle errors; for example, you could retry the same action or you could roll back. In networking, retrying the same action is typically not advised without first checking the error logs. Because of that, rollbacks are the most common way of handling provisioning errors. Depending on the automation tool used, rollbacks for the most part only remove what was provisioned and therefore are easy to achieve. In Chapter 4, “How to Implement NetDevOps Pipelines with Jenkins,” you will learn how to implement rollbacks in code.
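To make the stop-and-roll-back behavior concrete, here is a minimal, tool-agnostic sketch. It is not how Jenkins or any specific CI/CD system implements rollbacks; the `(name, action, rollback)` stage format and the `run_pipeline` name are assumptions for illustration. Note that only stages that completed are rolled back, so a stage that fails halfway must clean up after itself.

```python
def run_pipeline(stages):
    """Run (name, action, rollback) stages in order; on failure, undo completed stages."""
    completed = []
    for name, action, rollback in stages:
        try:
            action()
        except Exception as error:
            print(f"Stage {name!r} failed: {error}")
            # Roll back in reverse order so the most recent change is removed first.
            for _done_name, done_rollback in reversed(completed):
                if done_rollback is not None:
                    done_rollback()
            return False
        completed.append((name, rollback))
    return True
```

A stage without anything to undo, such as a verification stage, simply passes `None` as its rollback.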

Lastly, provisioning workflows are typically triggered manually by a user or by changes in the configuration variables in a code repository.

Configuration

Configuration, as mentioned, comes after resources are provisioned. This is the most common use case in NetDevOps. As the name implies, this use case consists of configuring resources; both changes to an existing configuration and entirely new configurations fall under this use case’s umbrella. Specific examples include configuring a VLAN on a router, configuring a virtual machine with a software package, and configuring a new virtual switch port group in a VMware environment.

In order to configure your target resources, besides the desired configurations, you will need a configuration tool. Many different tools with different features are available, including Ansible, Terraform, Chef, vendor-specific tools, and even programming languages such as Python. In our context of networking, the predominant tool is Ansible. However, choosing a tool is complex and a topic described later in this chapter.

A traditional configuration workflow can be described as a series of steps executed in sequence:

Step 1. Connect to a resource.

Step 2. Verify the current functionality.

Step 3. Optionally retrieve the current configuration and functionality.

Step 4. Optionally compare the current configuration to the desired configuration.

Step 5. Configure the resource with the new configuration.

Step 6. Verify the desired functionality.

These steps are usually manually executed by an operator from a workstation or executed by an operator in an automated fashion. For example, in a networking setup, an operator can either SSH to a device and issue a show command from their workstation (steps 1 and 2) or run a script from their workstation that does the same two steps in an automated fashion. The fully manual approach is more error-prone.

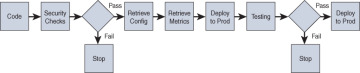

In a NetDevOps fashion, you take the previous automated approach a couple steps further. Figure 2-3 presents a complete NetDevOps configuration pipeline.

FIGURE 2-3 Configuration Pipeline

This pipeline is similar to a provisioning pipeline, but this section focuses on the differences.

One interesting difference is that configuration workflows are often changes made to previous configurations. Unlike in the provisioning use case, where you create net new assets, in this scenario you need to take into consideration what was configured before. In the third stage of the pipeline, you do that by retrieving the current resource’s configuration. In this same stage, you can also retrieve information about the current functionality. However, many organizations prefer to split this work into two separate stages, shown in Figure 2-3 as “Retrieve Config” and “Retrieve Metrics”; this allows them to manage the scripts that gather each kind of information independently and achieve a higher level of decoupling.

Imagine you want to configure a new Open Shortest Path First (OSPF) neighbor in a Nexus switch. In the third stage, “Retrieve Config,” you retrieve the running-config section of OSPF and the configuration of the interface where the new neighbor exists. In the fourth stage, you retrieve the show ip ospf neighbors and the show ip route commands’ output.

The information gathered at this stage can be used to derive what configuration changes are needed. For example, replacing a Simple Network Management Protocol (SNMP) key is different from configuring a new SNMP key on top of an existing one; the latter results in two SNMP keys instead of a single changed one. This type of logic needs to be implemented by you and is highly dependent on the configuration tool used.
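The replace-versus-add logic can be sketched as a comparison between the retrieved and the desired state. This example assumes the "SNMP key" is a read-only community string and emits IOS-style commands; the function name `plan_snmp_changes` is hypothetical, and the exact commands depend on your platform and tool.

```python
def plan_snmp_changes(current, desired):
    """Return CLI lines that turn the current community set into the desired one."""
    commands = []
    # Remove stale communities first so the old key does not linger on the device.
    for community in sorted(current - desired):
        commands.append(f"no snmp-server community {community}")
    # Then add whatever is missing.
    for community in sorted(desired - current):
        commands.append(f"snmp-server community {community} ro")
    return commands


print(plan_snmp_changes({"oldkey"}, {"newkey"}))
```

When the current and desired sets already match, the plan is empty and the deployment stage has nothing to push.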

In the fifth stage, “Deploy to Dev,” you configure the resource in the test/development/staging environment with the new configuration.

The next stage verifies that these configurations were applied correctly. You can, again, have a single stage that verifies both configuration and functionality, or you can use two separate stages. Figure 2-3 shows a single stage, “Testing,” but it’s recommended to use two.

Continuing with the previous example of configuring a new OSPF neighbor in a Nexus switch, in this stage, you would again retrieve the Nexus’s running-config section of OSPF and the configuration of the changed interface and make sure it has the newly configured parameters. On top of that, you would retrieve the same show commands and verify the command output shows the new neighbor along with new routes. In this stage, like in the provisioning use case, you can take your testing further and also test functionality (for example, a connectivity test to an endpoint behind the new OSPF neighbor). The depth of the testing should depend on the criticality of the change and your willingness to accept risk.

So far, all the previous stages were executed against a development environment. This is a major difference from the traditional workflow, where there’s a single environment—production. If everything functions as expected at the end of the verifications, you repeat the same stages but with a production resource as the target, represented by the “Deploy to Prod” stage.

Configuration workflows, just like the provisioning workflows, are typically triggered manually or by variable changes in a source control repository.

Data Collection

The simplest use case is data collection, where the goal is to gather data from resources. Although the target resources can differ (virtual machines, physical network equipment, controllers, and so on), the pipeline architecture is the same. Data collection is more often triggered on a schedule, like a cron job, than performed manually by an operator. However, these two are the usual triggers.

In a traditional scenario, operators can use different ways to retrieve data. In networking, a common technique is to connect to devices using SSH and retrieve show command outputs. Other data-gathering techniques include SNMP polling and using a device’s APIs.

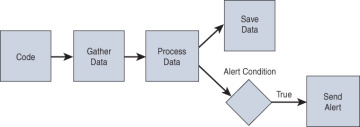

It is important to note that all of these techniques are being increasingly automated. Instead of operators manually connecting to every device and gathering the command outputs, they now run scripts from their workstations that do this for them. However, NetDevOps takes this further by adding consistency, history tracking, and easy integrations to the process. Figure 2-4 presents a data collection pipeline.

FIGURE 2-4 Data Collection Pipeline

It starts with the usual stage of retrieving your source code from a code repository; in this case, the code is most commonly automation scripts, typically written in Python. Python’s network modules, vibrant developer community, and easy-to-learn features, such as human-friendly syntax, make it the most used tool for this purpose. Nonetheless, Ansible and other tools can also effectively retrieve data.

In the second stage, “Gather Data,” the scripts run and the results are stored locally. This step can be time-consuming if there are several target resources or if the scripts retrieve a large amount of data from each resource. In the first case, you can speed up the process by having multiple data-gathering stages running in parallel. You will learn more about this technique in Chapter 4.
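The following sketch shows the same parallelization idea inside a single script, using threads rather than parallel pipeline stages. The `collect` function is a stand-in that only returns a string; a real collector would open an SSH or API session to the device. All names here are illustrative assumptions.

```python
from concurrent.futures import ThreadPoolExecutor


def collect(device):
    """Stand-in collector; a real one would connect to the device here."""
    return f"data from {device}"


def gather_all(devices, collector=collect, max_workers=4):
    """Run the collector against every device using a pool of worker threads."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in the same order as the input devices.
        results = list(pool.map(collector, devices))
    return dict(zip(devices, results))


print(gather_all(["sw1", "sw2", "sw3"]))
```

Threads suit this workload because most of the time is spent waiting on the network rather than on the CPU.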

The third stage, “Process Data,” is optional but highly recommended. Data gathered from devices comes in its raw form, meaning it comes in whatever format the device outputs it in. Most of the time, this format is not the one you need. In this stage, you can use a programming language to parse the collected data into useful insights.

For example, if you issue a show cdp neighbors command, you get something similar to Example 2-2. This output is very verbose, and you might only need the name and the remote and local interfaces of the device’s neighbor to store in a database. This is where a script could parse the output and save it in a simplified format.

Example 2-2 Output for show cdp neighbors on a Cisco Switch
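As a hedged illustration of the parsing step, the sketch below extracts only the neighbor name and the two interfaces. The sample text is invented for this example in the general column layout of show cdp neighbors; it is not the output from Example 2-2, and real output can wrap long device IDs onto a separate line, which this simple parser does not handle.

```python
# Invented sample in the general layout of "show cdp neighbors"; not real device output.
SAMPLE = """\
Device ID        Local Intrfce     Holdtme    Capability  Platform  Port ID
dist-sw1         Gig 1/0/1         152              S I   WS-C3850  Gig 1/0/24
access-sw2       Gig 1/0/2         141              S I   WS-C2960  Gig 0/1
"""


def parse_cdp_neighbors(output):
    """Extract neighbor name, local interface, and remote interface per row."""
    neighbors = []
    for line in output.splitlines():
        tokens = line.split()
        # Data rows carry a numeric hold time as the fourth token; the header does not.
        if len(tokens) < 7 or not tokens[3].isdigit():
            continue
        neighbors.append({
            "neighbor": tokens[0],
            "local_interface": " ".join(tokens[1:3]),
            "remote_interface": " ".join(tokens[-2:]),
        })
    return neighbors


for entry in parse_cdp_neighbors(SAMPLE):
    print(entry)
```

The resulting list of dictionaries is easy to serialize to JSON or insert into a database in the “Save Data” stage.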

The last stage, “Save Data,” is also optional. There are times when you are just collecting data for real-time verifications and you do not want to store what is collected; this is the equivalent of connecting to a network device, issuing a show command to verify something, and immediately terminating that connection. However, if you do want to store what was gathered, you use this last stage. This stage is especially useful when you want to send the gathered data to multiple systems (for example, a monitoring system and a long-term archive). One goal for this last stage might be to anticipate network issues through the use of predictive machine learning (ML) models.

Compliance

There are many compliance frameworks and requirements. Most companies have to comply with regulatory requirements, such as HIPAA, PCI-DSS, SOX, DORA, and GDPR, on top of their own compliance policies.

In order to be compliant, companies have to prove their compliance. It is not enough to say they are applying the measures; in most cases, they have to prove the measures are actually in place. Historically, this was done through a series of manual steps executed by operators. Today, the process is a mix of manual and automated steps, mostly via manually operated automation tools. For example, if you must prove your organization is using SSH version 2 for all access to its network devices instead of the older (and less secure) version 1, you must connect to every device in the network and retrieve and verify its configuration to show that.

As previously mentioned for the data collection use case, connecting to devices today is commonly achieved by an operator running a script on their machine rather than actually connecting and issuing a show command. However, for compliance, you want something more reliable for recordkeeping than a human operator. A NetDevOps pipeline, as shown in Figure 2-5, provides that reliability because every run is executed the same way and leaves a record.

FIGURE 2-5 Compliance Verification Pipeline

The second stage, “Gather Data,” and third stage, “Verify Compliance,” can be implemented in a single stage if your automation script does both of those tasks together. Beyond just gathering data, you also need to parse it into a format you can use for the verifications. This should all be achieved by your automation scripts.
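For the SSH version 2 example, the “Verify Compliance” step could be sketched as follows. It assumes IOS-style configurations that have already been gathered as text and looks for the `ip ssh version 2` line; other platforms express this setting differently, and the function name `verify_ssh_v2` is hypothetical.

```python
def verify_ssh_v2(configs):
    """Map each device name to True when its config enforces SSH version 2."""
    results = {}
    for device, config in configs.items():
        # Compare whole lines so that a partial match elsewhere does not count.
        lines = [line.strip() for line in config.splitlines()]
        results[device] = "ip ssh version 2" in lines
    return results


configs = {
    "sw1": "hostname sw1\nip ssh version 2\n",
    "sw2": "hostname sw2\n",
}
print(verify_ssh_v2(configs))
```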

The fourth stage, “Apply Remediations,” is optional. However, it is a nice way of maintaining your environment’s compliance. It is hard to achieve in a general-purpose way; nonetheless, for common attributes that tend to fall out of compliance, you can develop a script to fix them. For example, in networking, you can forget to enable BPDU Guard on newly configured access ports. After the automation identifies these ports as noncompliant, your remediation script adds this configuration to the affected ports. Another example is forgetting to enable password encryption. Such remediations can be applied by triggering a configuration pipeline.
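A remediation script for the BPDU Guard example might be sketched like this. It assumes the interface configurations were already parsed into a dictionary of interface name to configuration lines and that the devices use IOS-style commands; the `bpduguard_remediation` name and input format are illustrative assumptions.

```python
def bpduguard_remediation(interfaces):
    """Return config lines that enable BPDU Guard on noncompliant access ports."""
    commands = []
    for name, lines in interfaces.items():
        is_access = "switchport mode access" in lines
        has_guard = "spanning-tree bpduguard enable" in lines
        # Only access ports without BPDU Guard need fixing; trunks are left alone.
        if is_access and not has_guard:
            commands += [f"interface {name}", " spanning-tree bpduguard enable"]
    return commands


interfaces = {
    "Gi1/0/1": ["switchport mode access"],
    "Gi1/0/2": ["switchport mode access", "spanning-tree bpduguard enable"],
    "Gi1/0/3": ["switchport mode trunk"],
}
print(bpduguard_remediation(interfaces))
```

The returned lines can be handed to a configuration pipeline as its desired change.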

Lastly, the “Generate Reports” stage aggregates all information into a report format. Compliance officers typically want a document with a set structure. This can be a single report or multiple reports. There are many ways to generate these reports, but the most common is to use a programming language to produce markdown documents that can also be tracked under version control systems. If you do not need to generate a specific report, the logs generated by the pipeline run itself can be enough to document compliance, and you could then omit this last stage.
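Producing a markdown report from verification results takes only a few lines. The report structure below (a title, a table, and a summary sentence) is an assumption for illustration; a real compliance report would follow whatever structure your compliance officers require.

```python
def render_report(title, results):
    """Render {device: compliant?} results as a markdown document."""
    lines = [f"# {title}", "", "| Device | Status |", "| --- | --- |"]
    for device in sorted(results):
        status = "compliant" if results[device] else "NONCOMPLIANT"
        lines.append(f"| {device} | {status} |")
    failed = sum(1 for ok in results.values() if not ok)
    lines += ["", f"{failed} of {len(results)} devices are noncompliant."]
    return "\n".join(lines) + "\n"


print(render_report("SSH v2", {"sw1": True, "sw2": False}))
```

Because the output is plain text, committing each report to a repository gives you a history of your compliance status over time.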

Compliance verifications are typically only done during certain specific time periods (for example, when you are trying to achieve a compliance certification or at a certain time to comply with regulations). The automation capabilities discussed in this section allow for constant compliance verification, rather than the usual point-in-time verifications. Because these pipelines are automated and can run without effort, you are able to achieve a constant monitoring of your compliance status by triggering them on a scheduled basis. A benefit of this behavior is the ability to take faster remediation actions whenever noncompliant characteristics are detected. For point-in-time verifications, you can still trigger them in a manual fashion.

Monitoring and Alerting

Monitoring is observing something over time. In our context, it can be observing metrics, logs, or simply the configuration of our resources. This is not a new field; there are plenty of well-established monitoring techniques and tools out there.

Alerting is warning something or someone of a certain condition. In networking, this is typically associated with a numerical threshold or a specific error message. Monitoring and alerting are two sides of the same coin: without monitoring resources, you cannot accurately trigger alerts. Although you can monitor resources without creating alerts, you lose value. Imagine you are monitoring the CPU percentage of your network devices, and the monitoring solution identifies a specific router at 100% CPU usage. If you don’t have any alert on this condition, monitoring it does not add much value other than being able to know, at a later time, that the condition happened. On the other hand, if you do have an alert set, you can notify someone to act on this condition and mitigate the issue (likewise, you can notify something that triggers an automatic action, such as running a script).

As mentioned, monitoring and alerting are an established field. Because of that, you will most likely use a commercial off-the-shelf (COTS) tool. These tools are easy to install, configure, and use. They can be split into two categories: full end-to-end tools that manage the consumption, processing, and visualization of data, such as Cisco DNA Center Assurance and Datadog Network Monitoring, and tools that only manage processing and visualization of data, leaving you the responsibility of taking care of data ingestion, such as Splunk and the ELK (Elasticsearch, Logstash, Kibana) stack.

In the first category, NetDevOps does not really have a role to play. However, in the second category, where you own the data ingestion, a pipeline such as the one previously shown for data collection is useful. In the last stage, you send the data, in the correct format, to the monitoring tool rather than to a historical database. Another architecture sends the data to the database and configures the monitoring tool to monitor the data in the database, although this is less common. Alerting in both cases is done in the monitoring tool itself, which is a widely supported functionality of these tools.

Besides the previously mentioned scenarios, there are two more cases: one where you decide to build your own monitoring and alerting solution, and one where you do not build a solution but use NetDevOps pipelines to achieve a simple alerting flow.

In the case where you decide to build your own tool, think again—this is not an easy task. In the case where you just want a simple alerting mechanism but don’t want to invest in any tool at all, you can use a pipeline like the one in Figure 2-6.

FIGURE 2-6 Monitoring and Alerting Pipeline

You can see the resemblance to the data collection pipeline, because monitoring is data collection over time. Therefore, you would trigger this pipeline on a scheduled basis.

At the end of the pipeline, you can see that when you send the processed data to storage, you also verify whether it passes a certain threshold or other configured alarm condition; if it does, you trigger an alarm. This does not need to be done in parallel, but doing it in parallel can trigger the alarm quicker.

Because the word “alarm” is used, you may think this needs to be a notification of some sort. However, this is not true. An alarm in this sense is just an action; it can be a notification such as an email or SMS, but it can also be an action such as calling an API or triggering another pipeline (for example, a remediation pipeline).
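The idea that an alarm is just a set of actions can be sketched with plain callables. The `check_and_alert` name and its signature are assumptions; in practice, each action could send an email, call an API, or trigger another pipeline.

```python
def check_and_alert(metric, value, threshold, actions):
    """Run every action when the value exceeds the threshold; return whether it fired."""
    if value <= threshold:
        return False
    for action in actions:
        action(metric, value)
    return True


def notify(metric, value):
    # Stand-in for an email or SMS notification.
    print(f"ALERT: {metric} is at {value}")


check_and_alert("cpu_percent", 100, 90, [notify])
```

Adding a remediation is then a matter of appending another callable to the list.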

In a networking scenario, imagine you are monitoring the log file of a Layer 2 switch and you have configured an alert for the following error message format:

2022 Jul 14 16:04:23.881 N9K %L2FM-4-L2FM_MAC_MOVE2: Mac 0000.117d.e03f in vlan 71 has moved between Po5 to Eth1/3

When your monitoring pipeline detects a MAC address moving between two different ports, which is sometimes a sign that an L2 loop is present on the network, it triggers a remediation pipeline that shuts down one of the two ports involved. On top of this, you could also notify someone that this action was taken, meaning there is no limit to the number of actions an alarm triggers.
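Detecting this message in a log file can be sketched with a regular expression. The pattern assumes the MAC address appears as three dot-separated groups of four hexadecimal digits and that the message wording matches the format shown above; the group names and the `parse_mac_move` helper are hypothetical.

```python
import re

MAC_MOVE = re.compile(
    r"%L2FM-4-L2FM_MAC_MOVE2: Mac (?P<mac>[0-9a-f]{4}\.[0-9a-f]{4}\.[0-9a-f]{4}) "
    r"in vlan (?P<vlan>\d+) has moved between (?P<port_a>\S+) to (?P<port_b>\S+)"
)


def parse_mac_move(line):
    """Return the MAC, VLAN, and both ports from a MAC move log line, or None."""
    match = MAC_MOVE.search(line)
    if match is None:
        return None
    event = match.groupdict()
    event["vlan"] = int(event["vlan"])
    return event


line = (
    "2022 Jul 14 16:04:23.881 N9K %L2FM-4-L2FM_MAC_MOVE2: "
    "Mac 0000.117d.e03f in vlan 71 has moved between Po5 to Eth1/3"
)
print(parse_mac_move(line))
```

The two extracted ports are what a remediation pipeline would need in order to decide which interface to shut down.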

Reporting

Creating reports is an activity many do not enjoy. However, they are needed for all sorts of reasons. Common reports in networking include hardware platforms, software versions, configuration best practices, and security vulnerabilities.

Each report has its own requirements and format. Drilling down on software version reports or software install base status reports typically requires three steps:

Step 1. Connect to a device and gather the software version.

Step 2. Verify the current version against the vendor-recommended version.

Step 3. Repeat Steps 1 and 2 for every device and then generate a report.

Like many of the previous tasks, most companies today have part of the process (at least Steps 1 and 2) automated, but they run these automated processes/scripts manually from their workstations. Nonetheless, for Step 2, the operator needs to obtain, beforehand, the recommended version for comparison. In an ideal scenario, the automation could fetch the recommended version at the time of verification. For this to be possible, this information needs to be available from the vendor on a website or in an API, which is typically the case.
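The comparison in Step 2 can be sketched as below. It assumes versions are purely dotted numbers such as 17.9.4; many real network operating system version strings contain letters or parentheses and would need a more careful parser. The function names are illustrative.

```python
def version_key(version):
    """Turn '17.9.4' into (17, 9, 4) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def version_report(installed, recommended):
    """Return (device, version, status) rows, sorted by device name."""
    rows = []
    for device in sorted(installed):
        current = installed[device]
        if version_key(current) < version_key(recommended):
            status = "upgrade needed"
        else:
            status = "ok"
        rows.append((device, current, status))
    return rows


print(version_report({"sw1": "17.6.5", "sw2": "17.9.4"}, "17.9.4"))
```

Comparing tuples of integers avoids the classic mistake of string comparison, where "17.10.1" would sort before "17.9.4".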

It is worth noting that the higher the number of different operators running the scripts (for example, operators in different geographies or branches), the higher the likelihood of human error.

Other report types, independent of their nature, share the same sequence of steps to generate:

Step 1. Gather data.

Step 2. Verify the data against rules.

Step 3. Generate a report.

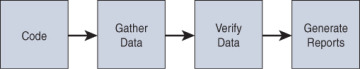

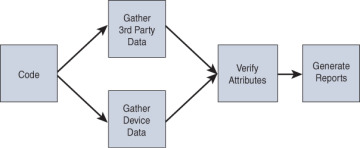

A NetDevOps reporting pipeline architecture is presented in Figure 2-7.

FIGURE 2-7 Reporting Pipeline

This is similar to a compliance pipeline, but in this case, we do not want to apply remediations, at least not at this stage, because the goal is just visibility.

As previously mentioned, some reports require data from outside of your environment to be generated. In the software versions example, the required data is the recommended vendor version, and in the case of security vulnerabilities you need a matrix of vulnerabilities per software version. This information gathering can and should be implemented as a separate stage. You can implement it in parallel with the data gathering stage from your environment, as shown in Figure 2-8, or before that stage, right after checking out your code from the code repository, as shown in Figure 2-9.

FIGURE 2-8 Reporting Pipeline Integrated with a Third Party in Parallel

FIGURE 2-9 Reporting Pipeline Integrated with a Third Party Sequentially

Having it before instead of in parallel has the advantage of saving compute resources in the case that the needed information is not available. This means that your pipeline will fail without trying to gather information from your network because the verification data was not available.

The previous examples show a capability that is also offered by some proprietary network controllers, such as Cisco DNA Center. A major advantage of the pipeline approach is reusability: you can create reports for anything you need using the same pipeline structure and automation scripts, with only minor modifications. You may have reporting needs beyond the typical ones; for example, you might have to report how many of your devices are running at or below 50% CPU usage or which of your devices are running out of physical memory. For these custom reporting needs, the proprietary controllers fall short.

Migrations

Network migration is a complex task that typically entails moving from one state of the network to a different one. Tasks can range from changing network configurations and provisioning new software to replacing hardware. Nonetheless, it is important for you to understand that migration procedures are a combination of many smaller and simpler tasks.

Because of the criticality of some networks and the downtime some migrations cause, these are usually performed within maintenance windows. A maintenance window is a period of time, scheduled in advance, during which changes are made and service interruptions can happen. This concept is wider than networking or IT and is used in multiple other industries such as manufacturing and retail.

In networking, migrations are typically associated with a method of procedure (MoP) document. Although it can have various names, this document details the migration steps one by one. A network device hardware replacement migration, for example, typically consists of the following steps:

Step 1. Gather configuration data from the current device.

Step 2. Prepare configuration for the new device.

Step 3. Gather operational data from the current device.

Step 4. Configure the new device.

Step 5. Replace the current device with the newly configured device.

Step 6. Gather operational data from the new device.

Step 7. Verify operational data changes.

This is just an example; the actual steps may differ, depending on the migration use case. In a scenario where you do not have extra rack space, you have to switch the order of Steps 4 and 5. In a scenario where you are replacing the physical hardware but no configuration changes are required, Step 2 is not needed, as you can simply use the configuration gathered in Step 1. All of this is to show that each migration is different, so take this into account.
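The final verification step of the hardware replacement example, comparing operational data gathered before and after the change, could look like the following sketch. It assumes the operational data has already been parsed into dictionaries; the function name `diff_operational_data` and the sample keys and values are hypothetical.

```python
from typing import Any, Dict, Tuple


def diff_operational_data(
    before: Dict[str, Any], after: Dict[str, Any]
) -> Dict[str, Tuple[Any, Any]]:
    """Return {key: (before, after)} for every key whose value differs.

    A key missing on one side is reported with None on that side.
    """
    changes: Dict[str, Tuple[Any, Any]] = {}
    for key in sorted(set(before) | set(after)):
        old, new = before.get(key), after.get(key)
        if old != new:
            changes[key] = (old, new)
    return changes


# Sample data standing in for parsed show command outputs.
before = {"ospf_neighbors": 2, "up_interfaces": 24, "vlans": [10, 20]}
after = {"ospf_neighbors": 2, "up_interfaces": 23, "vlans": [10, 20]}

changes = diff_operational_data(before, after)
print(changes)
```

An empty result means the new device behaves like the old one for the data you collected; any entry is something an engineer or a later pipeline stage should evaluate.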

Independent of the migration scenario, most of the steps can be automated. However, the physical moving of equipment and cables cannot, or at least requires a different type of automation not covered by this book. Nonetheless, data collection, device configuration, and virtual device provisioning can be automated, as shown in previous sections. Many network migrations do not involve physical activities; therefore, those can be fully automated.

Today’s state-of-the-art migrations, as with many of the previous use cases, automate these steps individually but still depend on an operator to execute each automation step. NetDevOps ties all the automation steps together while enhancing the overall experience.

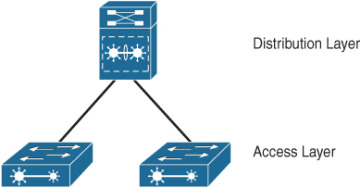

Figure 2-10 shows a simple two-tier network topology composed of a single distribution switch and two access switches. The migration consists of replacing the distribution switch with a newer one.

FIGURE 2.10 Two-Tier Network Topology

To replace this distribution switch, we rack and stack a new distribution switch and connect it to the available ports on our access switches. On top of that, we configure this new switch with out-of-band (OOB) management to enable remote management access. An example pipeline for the migration is shown in Figure 2-11.

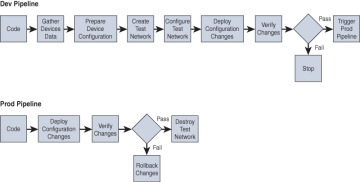

FIGURE 2.11 Migration Pipeline

This migration pipeline is divided into two pipelines: Dev and Prod. You can achieve the same functionality in a single pipeline, but the goal is to demonstrate the capability of a pipeline calling another pipeline.

You can see similarities between the migration pipeline and the configuration and data collection pipelines because this migration scenario is based on configuring a new device (configuration) using information from an already existing device (data collection).

In this scenario, you also see the return of the test/development/staging network. As mentioned, migrations tend to be critical, and using a test network to verify your changes before deploying them in production is a way to mitigate risk. In this particular example, you create a new test network to test the changes; this would be a virtual test network, as you will see in Chapter 5, “How to Implement Virtual Networks with EVE-NG.” There are other possibilities to test your changes. For example, if you already have a test network, you could modify the pipeline to configure your test network the same way as your production environment, replacing the stage “Create Test Network,” and then deploy and verify the changes there. In this case, the test network could be physical.

The provisioning use case briefly mentioned rollbacks. However, for migrations, rollbacks are a critical piece. As part of the MoP, network engineers prepare rollback configurations and actions in case the migration does not go as expected. This is fairly common. You see a rollback stage associated with a decision point in the pipeline in Figure 2-11; NetDevOps makes rollbacks considerably easier than they are in a traditional migration. If you have been involved in migrations, you know that rolling back is almost always a high-pressure situation. If you are rolling back, things are already not going your way. On top of that, to roll back, you will need to make even more changes. When time is an important factor (for example, in very short service cut migrations), the pressure to roll back quickly is huge. This is a very error-prone activity. In the pipeline scenario, the rollback is prepared in advance without pressure. The rollback configurations can be tested beforehand, and you can be sure that the automation will not make any copy/paste errors.
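The decision point followed by a rollback stage can be sketched in a few lines. This is an illustration under assumptions: `deploy_with_rollback` and the three callables are hypothetical, and the in-memory dictionary stands in for a real device.

```python
from typing import Callable


def deploy_with_rollback(
    apply_change: Callable[[], None],
    verify: Callable[[], bool],
    rollback: Callable[[], None],
) -> str:
    """Apply a change, verify it, and roll back if verification fails."""
    apply_change()
    if verify():
        return "deployed"
    rollback()
    return "rolled_back"


# In-memory stand-in for a device; a real stage would talk to the network.
device = {"config": "old-distribution-config"}


def apply_change() -> None:
    device["config"] = "new-distribution-config"


def verify() -> bool:
    return False  # simulate success criteria that are not met


def rollback() -> None:
    device["config"] = "old-distribution-config"  # prepared in advance


outcome = deploy_with_rollback(apply_change, verify, rollback)
print(outcome, device["config"])
```

The rollback callable is written and tested before the maintenance window, so nothing has to be typed under pressure when verification fails.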

For migration pipelines, the only trigger is manual. You could use a different type (for example, a scheduled trigger), but migrations are typically such high-risk activities that they require human supervision, even when they are being executed by NetDevOps pipelines.

Troubleshooting

Some engineers love troubleshooting—the feeling of chasing and solving an unexpected behavior—but many others hate it. Troubleshooting is both a skill and an art, many times fueled by the rush of needing to fix something quickly because the issue is affecting a production service. Indeed, troubleshooting is often required in the worst moments. Do you remember the last time you had to troubleshoot something? Was it a calm situation in a development environment, or was it a production outage?

Troubleshooting often requires deep technical knowledge that not everyone in the company has. For example, if you are responsible for a production service that has a pager associated with it, do you let a newcomer, even if they are an expert on the technology, take part in troubleshooting the service? Probably not, at least not initially, until the newcomer is fully onboarded and knowledgeable about the intricacies of the service. This shows that troubleshooting requires not only technical knowledge but often also subject matter expertise in the specific service itself.

Independent of all these challenges, a troubleshooting workflow is quite well-defined and includes the following steps:

Step 1. Gather data from the affected resources.

Step 2. Analyze the collected data and formulate hypotheses.

Step 3. Experiment with the most likely hypothesis by configuring or reconfiguring resources.

Step 4. Test for success.

Step 5. Repeat Steps 3 and 4 until you’re successful.

You cannot always apply Steps 3 and 4 multiple times and experiment with several hypotheses. In some scenarios, you need to analyze the data until you are certain of the problem and the solution. Nonetheless, after you are certain of these, you still apply Step 3. It is notoriously difficult to be 100% certain, and even if you are, you still need to apply the solution and verify the success.

In a networking-specific scenario, the aforementioned troubleshooting workflow is very similar:

Step 1. Connect to the possibly affected network devices.

Step 2. Collect show command outputs.

Step 3. Analyze the collected outputs and formulate hypotheses.

Step 3a. (Optional but common) Repeat Steps 2 and 3 until you arrive at reasonable hypotheses.

Step 4. Reconfigure/configure any identified missing feature in the network devices.

Step 5. Test for success.

Step 6. Repeat Steps 4 and 5 until you are successful.

Sounds pretty simple, right? Well, it is not. A network problem can manifest itself, and usually does, with pretty generic symptoms, such as loss of connectivity for some endpoints, increased latency, or users complaining their access is “slow.” From such a generic description it’s typically hard to pinpoint the specific network devices affected; therefore, your first step has a large number of target devices. On top of that, after connecting to your devices, assuming they are the correct ones, what show commands do you run? A common technique on Cisco devices is to start with the generic ones, such as show logging, as shown in Example 2-3, or show running-config. Other vendor devices have similar commands with different syntax.

Example 2-3 Output for show logging on a Cisco Switch

Step 3 is highly correlated to Steps 1 and 2. You will formulate hypotheses based on your findings. And most of the time, you get stuck on Step 3a, bouncing between Steps 2 and 3 before you make any type of configuration change.

You make it to Step 4, however, and after you make your change, your users are still affected by their initial condition. Step 5 is a failure, but there is another thing you need to look out for: Did the change you make break something else? Maybe something else completely unrelated to the problem you were investigating? These are hard questions to answer in a traditional network setup, but you already have the solution: NetDevOps.

Troubleshooting is a collection of smaller use cases: data collection, configuration, provisioning, monitoring, and so on. What you have learned so far applies to this use case. Instead of manually connecting to devices and collecting show command outputs (Steps 1 and 2), you can run parameterized data collection pipelines that target the intended devices. Likewise, after formulating your hypothesis, you can codify those changes into a source control repository and run a configuration or a provisioning pipeline, depending on the troubleshooting scenario, and execute the changes with a higher degree of confidence. On top of that, the success criteria testing could be baked into the pipeline with an automatic rollback stage, as described previously.

When testing for your success criteria, be aware of the time it can take to propagate a change. When you make a manual change, you might issue a show command multiple times before the output reflects the change; the same delay applies when you use automation. Another example is when you reboot a device: It takes time for the device to come up again and accept connections, and you often find yourself repeating the ssh command multiple times, or you have a ping running to the device and only reconnect when the ping is successful. In an orchestration scenario, take this into consideration. Add a wait time stage if you know the target device will not be immediately available. On top of that, most CI/CD tools offer a configurable retry option at the stage level, which you can use in your verify success criteria stage.
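If your CI/CD tool does not offer a retry option, or you prefer to keep the logic in your automation code, a wait-and-retry helper is easy to write. The sketch below is one possible shape; `wait_until` and its parameters are hypothetical names, and the iterator of booleans simulates a device that only answers on the third attempt.

```python
import time
from typing import Callable


def wait_until(
    check: Callable[[], bool], retries: int = 5, delay_seconds: float = 1.0
) -> bool:
    """Call check() up to `retries` times, sleeping between failed attempts."""
    for attempt in range(retries):
        if check():
            return True
        if attempt < retries - 1:
            time.sleep(delay_seconds)
    return False


# Simulated reachability check: the device answers on the third attempt.
responses = iter([False, False, True])
came_up = wait_until(lambda: next(responses), retries=5, delay_seconds=0.01)
print(came_up)
```

In a real stage, `check` would wrap whatever you use today by hand, such as a ping or a connection attempt, and the delay would be sized to the expected reboot or convergence time.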

All these changes combined, or even a subset of them, will not make you love troubleshooting if you hate it, but they can make troubleshooting easier and more reliable, and they can reduce mean time to repair (MTTR).

There are other applications of NetDevOps in troubleshooting. It can abstract the troubleshooting activity as a whole, disguised as a pipeline. This is not as simple to build as the previous example where you replaced the individual troubleshooting workflow steps with automated activities, but the reward is higher.

Figure 2-12 shows you a troubleshooting pipeline that is triggered automatically by a monitoring system when an alarm is triggered. The secret sauce of this pipeline lies in the step of automated machine reasoning. Automated reasoning is an area of computer science that is concerned with applying reasoning in the form of logic to computing systems. If given a set of assumptions and a goal, an automated reasoning system should be able to make logical inferences toward that goal automatically. In our context and put simply, it is a system that tries to understand what is happening in our network and infer potential solutions.

FIGURE 2.12 Troubleshooting Pipeline

How you build your own automated reasoning system is well beyond the scope of this book; however, you can partly accomplish this by using a rule-based system.

Imagine the following scenario: You manage a network that commonly suffers from L2 loops. You do not run the Spanning Tree Protocol because of fast convergence requirements, and sometimes your engineering team forgets that and creates looped topologies. In this scenario, you can benefit from having an automated rule-based engine that troubleshoots this issue for you. A subject matter expert (SME) would typically connect to the affected devices, identify interfaces with high utilization and possible packet drops, identify MAC address flaps either using the switch’s log or the MAC address table, and then break the loop. However, if you are not a seasoned SME, you might lose time collecting other show command outputs and at the end create a hypothesis regarding problems other than the L2 loop.

With an “auto-troubleshooting” pipeline, you can abstract what is being collected and analyzed from the devices and output to the network operator only what it thinks the underlying issue is. Of course, it is also possible that this pipeline applies the fix directly, but most of the time in networking use cases, companies want human confirmation.
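A single rule for the L2 loop scenario might look like the following sketch. It is not a full reasoning system: the thresholds, the function name `diagnose_l2_loop`, and the shape of the collected data are assumptions made for this example.

```python
from typing import Dict, List

# Assumed thresholds; tune them for your own network.
MAC_FLAP_THRESHOLD = 5
UTILIZATION_THRESHOLD = 90.0


def diagnose_l2_loop(facts: Dict[str, Dict]) -> List[str]:
    """Flag devices that show both MAC flaps and highly utilized interfaces."""
    findings: List[str] = []
    for device, data in sorted(facts.items()):
        busy = sorted(
            name
            for name, percent in data.get("interface_utilization", {}).items()
            if percent >= UTILIZATION_THRESHOLD
        )
        if data.get("mac_flaps", 0) >= MAC_FLAP_THRESHOLD and busy:
            findings.append(
                f"{device}: possible L2 loop involving {', '.join(busy)}"
            )
    return findings


# Sample data standing in for what a data collection stage would gather.
collected = {
    "access-1": {
        "mac_flaps": 42,
        "interface_utilization": {"Gi1/0/1": 98.0, "Gi1/0/2": 97.5, "Gi1/0/3": 4.0},
    },
    "access-2": {"mac_flaps": 0, "interface_utilization": {"Gi1/0/1": 35.0}},
}

findings = diagnose_l2_loop(collected)
print(findings)
```

The operator only sees the findings, not the raw outputs, and a human still decides whether to break the loop.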

This works great for common issues such as BPDU Guard–blocked ports and mismatched protocol timers. However, for complex troubleshooting scenarios, you will need a very good rule-based system, which is not easy to create.

Although the previous example was triggered automatically from a monitoring system alarm, you can create “auto-troubleshooting” pipelines for common issues of your network and let operators trigger these manually. This is a good first step, and it also reduces the subject matter knowledge required to troubleshoot common issues. If this does not solve the issue, it can be escalated to a higher-tier, smaller team.

Combined

The two previous use cases, migrations and troubleshooting, are combined use cases. They aggregate the previous simpler use cases into more complex end goals.

In networking, complex goals are often what you will encounter. Nonetheless, these complex goals and tasks can be decomposed into smaller, more manageable subgoals and subtasks, and that is what you will learn about in this section.

An interesting combined use case is the use of one pipeline to gather data and store it in a database and the use of other pipelines in the same network for retrieving that data from the database instead of fetching it from the devices directly. A database in this context can be anything, even a local file. This type of interaction between pipelines will reduce resource consumption on end devices because you are not connecting to them for every action. Although all the use cases in this chapter so far have gathered data from the end devices, you can now see this is not necessary.
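The producer and consumer sides of this interaction can be sketched with a local SQLite file as the database. The table layout, the function names `store_facts` and `load_facts`, and the sample facts are assumptions for illustration only.

```python
import json
import sqlite3
import tempfile
from contextlib import closing
from pathlib import Path
from typing import Dict, Optional


def _open(db_path: str) -> sqlite3.Connection:
    """Open the database and make sure the facts table exists."""
    conn = sqlite3.connect(db_path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS facts (device TEXT PRIMARY KEY, data TEXT)"
    )
    return conn


def store_facts(db_path: str, device: str, facts: Dict) -> None:
    """Used by the data collection pipeline after it talks to a device."""
    with closing(_open(db_path)) as conn:
        conn.execute(
            "INSERT OR REPLACE INTO facts (device, data) VALUES (?, ?)",
            (device, json.dumps(facts)),
        )
        conn.commit()


def load_facts(db_path: str, device: str) -> Optional[Dict]:
    """Used by other pipelines instead of connecting to the device."""
    with closing(_open(db_path)) as conn:
        row = conn.execute(
            "SELECT data FROM facts WHERE device = ?", (device,)
        ).fetchone()
    return json.loads(row[0]) if row else None


with tempfile.TemporaryDirectory() as tmp:
    db_path = str(Path(tmp) / "network_facts.db")
    store_facts(db_path, "dist-1", {"version": "17.3.4", "uptime_days": 120})
    cached = load_facts(db_path, "dist-1")
    unknown = load_facts(db_path, "access-9")

print(cached, unknown)
```

A consuming pipeline that gets `None` back can decide whether to fail or to fall back to collecting from the device directly.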

Another interesting combined use case is network optimization. While your data collection pipelines are collecting data from your network and storing it somewhere (for example, in a database), you can have a pipeline monitoring the stored data, looking for patterns or optimizations. For example, if you are collecting and storing information on the bandwidth utilization of your interfaces, it is possible that your monitoring pipeline will identify underutilized interfaces. In this case, it can trigger a configuration pipeline that alters some traffic-routing metrics to reroute traffic and better utilize your available infrastructure.
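The analysis part of such a monitoring pipeline can be very small. The sketch below assumes bandwidth utilization samples are already stored per interface as percentages; the function name `find_underutilized`, the 10% threshold, and the sample data are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def find_underutilized(
    samples: Dict[str, List[float]], threshold_percent: float = 10.0
) -> List[str]:
    """Return interfaces whose average utilization is below the threshold.

    Interfaces without samples are skipped rather than flagged.
    """
    return sorted(
        name
        for name, values in samples.items()
        if values and mean(values) < threshold_percent
    )


# Sample data standing in for stored utilization measurements (percent).
samples = {
    "Gi1/0/1": [80.0, 75.0, 90.0],
    "Gi1/0/2": [2.0, 3.0, 1.0],
    "Gi1/0/3": [],
}

underutilized = find_underutilized(samples)
print(underutilized)
```

A non-empty result is what would trigger the configuration pipeline that adjusts routing metrics.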

There are increasingly more uses for networking data. A practice that is becoming more common is to apply machine learning algorithms to identify patterns in networking data. Just like in the previous scenario, assume that you are collecting and storing your switches’ data—this time percentage CPU utilization. You can build a machine learning model, which some consider to fall within the automation umbrella, and integrate it in a NetDevOps pipeline.

In simple terms, a machine learning model has two phases in its lifecycle: the first phase is where it needs to be trained, and the second phase is where you can use it to make predictions (called “inference”). In the first phase, you “feed” data to the model so it can learn the patterns of your data. In the second phase, you give it a new data point, and the model returns a prediction based on what it learned from past observations.

Continuing our previous example, you can train a model based on percentage CPU utilization per device family. CPU utilization is highly irregular—what is an acceptable value for a specific device doing a specific network function might not be an acceptable value for a different device in the same network. Because of this, it is very complicated to set manual thresholds. Machine learning can help you set adaptable thresholds depending on the specific device based on its historical CPU utilization.

Now what does all of this have to do with NetDevOps? You can have a pipeline that retrains a model when predictions become stale. Likewise, you can call the inference point of the model in one of your alerting pipelines and replace the static alarm thresholds.
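The train and inference split can be illustrated with a deliberately simple stand-in for a machine learning model: a per-device statistical baseline (mean plus a multiple of the standard deviation). This is not a real learning algorithm, and the names `train` and `is_anomalous`, the multiplier `k`, and the sample history are assumptions made for this sketch.

```python
from statistics import mean, pstdev
from typing import Dict, List


def train(history: Dict[str, List[float]], k: float = 3.0) -> Dict[str, float]:
    """'Training' phase: derive one CPU threshold per device from its history."""
    return {
        device: mean(values) + k * pstdev(values)
        for device, values in history.items()
    }


def is_anomalous(model: Dict[str, float], device: str, cpu_percent: float) -> bool:
    """'Inference' phase: compare a new data point with the learned threshold."""
    return cpu_percent > model[device]


# Sample historical CPU utilization (percent) per device.
history = {
    "core-1": [70.0, 72.0, 68.0, 71.0, 69.0],
    "access-1": [10.0, 12.0, 11.0, 9.0, 13.0],
}

model = train(history)  # a retraining pipeline would rerun this step
print(is_anomalous(model, "core-1", 40.0), is_anomalous(model, "access-1", 40.0))
```

The same 40% reading is normal for the busy core device and anomalous for the quiet access switch, which is the behavior a single static threshold cannot give you.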

Machine learning is starting to have many applications in the networking world—from hardware failure prediction to dynamic thresholds and predictive routing. It is important to understand that if you are using NetDevOps practices, adding machine learning into the mix is simple.

Do you have a use case not covered in this chapter? NetDevOps is only limited by what you can do with automation. So, if you can automate it, you can make it run on a CI/CD pipeline using source control and testing techniques to reap all the benefits you have learned in this chapter.