Measuring Time Error

This chapter explores several definitions and limits of time error, and numerous metrics to quantify it, as well as examining the impact of time error on applications. In summary, time error is essentially the difference between a slave clock and a reference or master clock, and it is primarily measured using four metrics: cTE, dTE, max|TE|, and TIE. Two further metrics, MTIE and TDEV, are derived statistically from TIE, in combination with the application of different filters.
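The four basic metrics can be illustrated with a minimal Python sketch. It assumes a list of equally spaced time error samples in nanoseconds and takes cTE as the plain mean over the whole capture; the function name `basic_time_error_metrics` is hypothetical, and the sketch deliberately omits the averaging periods and filters that the recommendations prescribe.

```python
from statistics import mean


def basic_time_error_metrics(te_ns):
    """Return (cTE, dTE series, max|TE|, TIE series) for TE samples in ns.

    Simplifying assumptions: cTE is the mean of the whole capture, dTE is
    whatever remains after removing cTE, and TIE is referenced to the first
    sample of the capture. No filtering is applied.
    """
    if not te_ns:
        raise ValueError("need at least one time error sample")
    cte = mean(te_ns)                        # constant component
    dte = [x - cte for x in te_ns]           # dynamic component
    max_abs_te = max(abs(x) for x in te_ns)  # worst-case absolute error
    tie = [x - te_ns[0] for x in te_ns]      # error relative to the start
    return cte, dte, max_abs_te, tie


# Illustrative (made-up) samples, not measured data.
samples = [10.0, 12.0, 8.0, 14.0, 6.0]
cte, dte, max_abs_te, tie = basic_time_error_metrics(samples)
```

For these made-up samples, cTE is 10 ns and max|TE| is 14 ns, while the dTE and TIE series happen to coincide because the first sample equals the mean.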

ITU-T recommendations specify the allowed limits for these different metrics of time error. The metrics cTE and max|TE| are defined in terms of time (such as nanoseconds). dTE, being the change of the time error function over time, is characterized using TIE and is then compared to a mask expressed in MTIE and TDEV, which defines the permissible limits for that type of clock.
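As a rough illustration of how MTIE and TDEV are obtained from a TIE series, the following sketch applies the commonly used sample estimators directly. It assumes equally spaced TIE samples `x` and an observation interval of `n` sample periods; the function names are hypothetical, and the measurement filters required before comparison with a mask are not modeled.

```python
import math


def mtie(x, n):
    """Largest peak-to-peak TIE found in any window of n + 1 samples."""
    if not 1 <= n < len(x):
        raise ValueError("n must satisfy 1 <= n < len(x)")
    return max(
        max(x[k:k + n + 1]) - min(x[k:k + n + 1])
        for k in range(len(x) - n)
    )


def tdev(x, n):
    """TDEV estimate for an observation interval of n sample periods."""
    terms = len(x) - 3 * n + 1
    if n < 1 or terms < 1:
        raise ValueError("need n >= 1 and at least 3 * n samples")
    total = 0.0
    for j in range(terms):
        # Sum of second differences over n consecutive starting points.
        inner = sum(x[i + 2 * n] - 2 * x[i + n] + x[i]
                    for i in range(j, j + n))
        total += inner * inner
    return math.sqrt(total / (6 * n * n * terms))


# Illustrative (made-up) TIE samples in ns.
ramp = [0, 1, 2, 3, 4, 5]     # pure linear drift
spike = [0, 0, 1, 0, 0, 0]    # single 1 ns excursion
```

A pure linear ramp gives an MTIE that grows with the window length but a TDEV of zero, because TDEV is built from second differences and is therefore insensitive to a constant frequency offset. The brute-force MTIE loop is fine for short captures; long measurements would need a more efficient algorithm.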

Some ITU-T recommendations define allowed limits for a single standalone clock (a single network element), and others specify clock performance at the final output of a timing chain in an end-to-end network. You should not mix these two types of specifications, because limits that apply to the standalone case do not apply to the network case. Similarly, masks used to define the limits on a standalone clock do not apply to the network. This is especially the case when discussing the testing of timing performance, as in Chapter 12, “Operating and Verifying Timing Solutions.”

For each type and class of standalone clock, all four metrics, max|TE|, cTE, dTE, and TIE, are specified by these standards, while for the network case, max|TE| and dTE are specified. The cTE is not listed for network limits because the limits of max|TE| for the network case automatically include cTE, which might be added by any one clock in the chain of clocks.

The ITU-T specifies noise and/or time error constraints for almost all clock types. For each of the numerous clock specifications, the ITU-T recommendations define the following five aspects of timing characterization:

Noise generation

Noise tolerance

Noise transfer

Transient response

Holdover performance

Chapter 8 will cover these specifications in more detail.

Topology

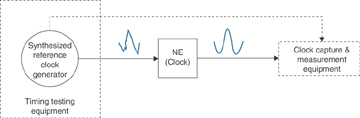

As shown in Figure 5-23, the setup required for time error measurement testing includes the following:

Timing testing equipment that can synthesize ideal reference clock signals.

Clock under test that would normally be embedded in a network element.

Clock capture and measurement equipment. Most often this is the same equipment that generates the reference clock, so that it can easily compare the reference with the output signal returned from the clock under test.

Note that if the tester is a separate piece of equipment from the source of the reference input clock, the same reference signal must also be passed to the tester so that it can compare the measured clock to the reference clock. You cannot use two separate signals, one as the reference input and another in the tester.

Figure 5-23 Time Error Measurement Setup

The testing equipment that is generating the reference clock is referred to as a synthesized reference clock in Figure 5-23 because of the following:

For noise transfer tests, the reference clock needs to be synthesized so that jitter and wander are introduced (under software control). The tester generates the correct amount of jitter and wander, according to ITU-T recommendations, for the type of clock under test. The clock under test is required to filter out the introduced input noise and produce an output signal that stays within the permissible limits from the standards. These limits are defined as a set of MTIE and TDEV masks.

For noise tolerance tests, the reference clock needs to emulate the input noise that the clock under test could experience in real-world deployments. For this purpose, the testing equipment simulates a range of predefined noise or time errors in the reference signal fed to the clock under test. To verify the maximum input noise that the clock can tolerate, the engineer checks that the clock does not generate any alarms, does not switch to another reference, and does not go into holdover mode.

For noise generation tests, the reference clock that is fed to the clock under test should be ideal, such as one sourced from a PRTC. So, for this measurement, there is no need to artificially synthesize the reference clock, unlike the noise transfer and noise tolerance cases, where the reference clock is synthesized. The testing equipment then simply compares the reference input signal to the noisy signal from the clock under test.
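The three test types differ mainly in what the tester checks, which the following sketch tries to make concrete. Everything in it is an assumption for illustration: the function and class names are hypothetical, timestamps are integer nanoseconds, and the mask is a plain dictionary of placeholder limits rather than values taken from any recommendation.

```python
from dataclasses import dataclass


def time_error_series(measured_ns, reference_ns):
    """Noise generation: TE is the measured time minus the reference time."""
    if len(measured_ns) != len(reference_ns):
        raise ValueError("series must have the same length")
    return [m - r for m, r in zip(measured_ns, reference_ns)]


def mask_violations(measured, mask):
    """Noise transfer: return (tau, value, limit) wherever the mask is exceeded.

    Both arguments map an observation interval in seconds to a value in ns.
    The mask must contain a limit for every measured interval.
    """
    return [
        (tau, measured[tau], mask[tau])
        for tau in sorted(measured)
        if measured[tau] > mask[tau]
    ]


@dataclass
class ToleranceObservation:
    """What the engineer observed while input noise was being applied."""
    alarms_raised: int
    switched_reference: bool
    entered_holdover: bool


def tolerance_passed(obs):
    """Noise tolerance: pass only if the clock stayed locked and silent."""
    return (
        obs.alarms_raised == 0
        and not obs.switched_reference
        and not obs.entered_holdover
    )
```

For example, `time_error_series([15, 1_000_000_022], [0, 1_000_000_000])` yields `[15, 22]`, and a run described by `ToleranceObservation(0, False, False)` counts as a pass. Comparing both series against the same reference reflects the requirement that the tester and the clock under test see the same reference signal.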